This is the fifth and final article in a series that looks at a recent AEI paper by Collin Hitt, Michael Q. McShane, and Patrick J. Wolf, “Do Impacts on Test Scores Even Matter? Lessons from Long-Run Outcomes in School Choice Research.” The first four essays are here, here, here and here.

All week I’ve been digging into a recent AEI paper that reviews the research literature on short-term test-score impacts and long-term student outcomes for school choice programs. Here I’ll summarize the paper and what I believe is wrong with it, and conclude by calling on all parties in this debate to discuss the existing evidence in much more cautious tones.

What the AEI authors did

Hitt, McShane, and Wolf set out to review all of the rigorous studies of school choice programs that have impact estimates for both student achievement and attainment (high school graduation, college enrollment, and/or college graduation). To my eye, they did an excellent and comprehensive job scanning the research literature to find any eligible studies, culling the ones lacking sufficient methodological chops, and then coding each as to whether it found impacts that were statistically significantly positive, insignificantly positive, insignificantly negative, or significantly negative.

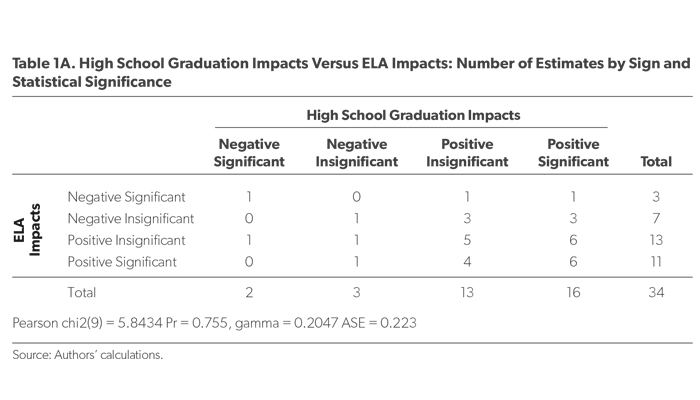

They then counted to see how many studies had findings for short-term test score changes that lined up with their findings for attainment. After running a variety of analyses, Hitt, McShane, and Wolf concluded that “A school choice program’s impact on test scores is a weak predictor of its impacts on longer-term outcomes.”

Where the AEI paper erred

But the authors made two big mistakes, as I argued here and here:

• They included programs in the review that are only tangentially related to school choice and that drove the alleged mismatch, namely early-college high schools, selective-admission exam schools, and career and technical education initiatives.

• Their coding system—which they admit is “rigid”—set an unfairly high standard because it requires both the direction and statistical significance of the short- and long-term findings to line up.

Both of these decisions are subject to debate. There’s an argument for making the choices the authors did, but also for going the other way. What’s key is that different choices would have resulted in dramatically different findings.

For bona fide school choice programs, short-term test scores and long-term outcomes line up most of the time

If we focus only on the true school choice programs—private school choice, open enrollment, charter schools, STEM schools, and small schools of choice—and we look at the direction of the impacts (positive or negative) regardless of their statistical significance, we find a high degree of alignment between achievement and attainment outcomes. That’s because, for these programs, most of the findings are positive. (That is good news for school choice supporters!)

School Choice Programs’ Impacts On…

| | ELA | Math | High School Graduation | College Enrollment | College Graduation |
|---|---|---|---|---|---|
| Positive and Significant | 8 (38%) | 9 (43%) | 10 (45%) | 4 (50%) | 1 (50%) |
| Positive and Insignificant | 8 (38%) | 7 (33%) | 7 (32%) | 3 (38%) | 1 (50%) |
| Negative and Insignificant | 4 (19%) | 4 (19%) | 2 (9%) | 1 (12%) | 0 (0%) |
| Negative and Significant | 3 (14%) | 1 (5%) | 3 (14%) | 0 (0%) | 0 (0%) |

Thirty-eight percent of the studies show a statistically significantly positive impact in ELA, 43 percent in math, 45 percent for high school graduation, 50 percent for college enrollment, and 50 percent for college graduation. If we look at all positive findings regardless of whether they are statistically significant, the numbers for school choice programs are 76 percent (ELA), 76 percent (math), 77 percent (high school graduation), 88 percent (college enrollment), and 100 percent (college graduation). Everything points in the same direction, and the outcomes for achievement and high school graduation—the outcomes that most of the studies examine—are almost identical.
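As a quick check on the direction-only figures, the sketch below adds the two positive counts from the table and divides by each column's total. The dictionary layout and the function name `positive_share` are illustrative choices, not anything from the AEI paper. The ELA column is left out because its printed counts do not sum to a total that matches its percentages.

```python
# Counts per outcome, copied from the table above, in the order:
# (pos. significant, pos. insignificant, neg. insignificant, neg. significant)
counts = {
    "math": (9, 7, 4, 1),
    "high school graduation": (10, 7, 2, 3),
    "college enrollment": (4, 3, 1, 0),
    "college graduation": (1, 1, 0, 0),
}


def positive_share(row):
    """Percent of studies with a positive estimate, significant or not."""
    positive = row[0] + row[1]
    return round(100 * positive / sum(row))


shares = {outcome: positive_share(row) for outcome, row in counts.items()}
for outcome, pct in shares.items():
    print(f"{outcome}: {pct}% positive")
```

The point of ignoring significance here is only to compare the direction of the estimates across outcomes; it says nothing about how precisely any single study measured its effect.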

We also find significant alignment for individual programs. The impacts on ELA achievement and high school graduation point in the same direction in seventeen out of twenty-two studies, or 77 percent of the time. For math, it’s thirteen out of twenty studies, or 65 percent. For college enrollment, the results point in the same direction 100 percent of the time.
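The alignment rates are simple proportions. A minimal sketch, with `alignment_rate` as a hypothetical helper name and the counts taken from the paragraph above:

```python
def alignment_rate(aligned: int, total: int) -> int:
    """Percent of studies whose achievement and graduation impacts share a sign."""
    return round(100 * aligned / total)


ela_rate = alignment_rate(17, 22)   # ELA vs. high school graduation
math_rate = alignment_rate(13, 20)  # math vs. high school graduation
print(f"ELA: {ela_rate}%, math: {math_rate}%")
```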

Here’s how that looks for the specific studies:

Impact Estimates on Achievement and Attainment

|

Achievement |

High School Graduation |

College Enrollment |

College Graduation |

|||||

|

Neg. |

Pos. |

Neg. |

Pos. |

Neg. |

Pos. |

Neg. |

Pos. |

|

| Private School Choice | ||||||||

| New York City Vouchers |

ELA/ |

X |

X |

|||||

| Milwaukee Parental Choice |

ELA/ |

X |

||||||

| DC Opportunity Scholarship |

ELA/ |

X |

||||||

| Open Enrollment | ||||||||

| Chicago Open Enrollment |

ELA |

X |

||||||

| Charlotte Open Enrollment |

ELA/ |

X |

X |

X |

||||

| Charter Schools | ||||||||

| NYC Charter High Schools |

ELA/ |

X |

||||||

| Harlem Promise Academies |

ELA/ |

X |

X |

|||||

| Boston Charter Schools |

ELA/ |

X |

X |

|||||

| Chicago Charter High Schools |

ELA/ |

X |

X |

|||||

| Florida Charter Schools |

ELA/ |

X |

X |

|||||

| Seed Charter School DC |

ELA |

Math |

X |

|||||

| High Performing California Charter High Schools |

ELA/ |

X* |

||||||

| Texas Charters |

ELA/ |

X* |

||||||

| Texas Charters “No Excuses” |

ELA/ |

X |

||||||

| Texas Charters “Other” |

ELA/ |

X |

||||||

| KIPP – enrolled for MS & HS |

ELA/ |

X* |

||||||

| KIPP – enrolled for just HS |

ELA/ |

X |

||||||

| Mathematica- “CMO 2” |

ELA |

X |

X |

|||||

| Mathematica- “CMO 5” |

ELA/ |

X |

||||||

| Mathematica- “CMO 6” |

ELA/ |

X |

||||||

| STEM Schools | ||||||||

| Texas I-STEM Schools |

ELA/ |

X |

||||||

| Small Schools of Choice | ||||||||

| Small Schools of Choice – NYC |

ELA/ |

X |

X |

|||||

| Small Schools of Choice – Chicago |

ELA/ |

X |

||||||

*Dropout rate rather than graduation rate.

**Does not mention math or ELA specifically.

Studies found that three school choice programs improved ELA and/or math achievement but not high school graduation: Boston charter schools, the SEED charter school, and the Texas I-STEM School. But there’s an obvious explanation: These are known as high-expectations schools, and such schools tend to have a higher dropout rate than their peers. That hardly means that their test score results are meaningless.

At the same time, there were four programs that “don’t test well”—initiatives that don’t improve achievement but do boost high school graduation rates: Milwaukee Parental Choice, Charlotte Open Enrollment, Non-No Excuses Texas Charter Schools, and Chicago’s Small Schools of Choice. (Charlotte also boosted college graduation rates.) Of these, only the Texas charter schools had statistically significantly negative impacts on achievement (ELA and math) and significantly positive impacts on attainment (high school graduation). That’s a true mismatch, and cause for concern. But it’s just a single study out of twenty-two.

Meanwhile, the eight studies that looked at college enrollment all found that test score impacts and attainment outcomes lined up: seven positive and one negative.

So is it fair to say, as the AEI authors do, that “a school choice program’s impact on test scores is a weak predictor of its impacts on longer-term outcomes”?

Hardly.

Where the debate goes from here

I’ve tried this week to keep my critiques substantive and not personal. I know, like, and respect all three authors of the AEI paper, and believe they are trying in good faith to help the field understand the relationship between short- and long-term outcomes.

What I hope I have demonstrated, though, is that their findings depended on decisions that easily could have gone the other way.

What all of us should acknowledge is that this is a new field with limited evidence. We have a few dozen studies of bona fide school choice programs that look at both achievement and attainment. Most of these don’t examine college enrollment or graduation. That’s not a lot to go on, especially considering the many reasons to be skeptical of today’s high school graduation rates.

Given this reality, we should be cautious about making too much of any review of the research literature. I believe, as I did before this exercise, that programs that are making progress in terms of test scores are also helping students long-term. Others believe, as they did before this exercise, that there is a mismatch. Depending on how you look at the evidence, you can argue either side.

What we don’t have, though, is a strong, empirical, persuasive case to ditch test-based accountability, either writ large or within school choice programs.

Do impacts on test scores even matter? Yes, it appears they do. We certainly do not have strong evidence that they don’t.

— Mike Petrilli

Mike Petrilli is president of the Thomas B. Fordham Institute, research fellow at Stanford University’s Hoover Institution, and executive editor of Education Next.

This post originally appeared in Flypaper.