Complete survey results available here.

What do Americans think about their schools? More important, perhaps, what would it take to change their minds? Can a president at the peak of his popularity convince people to rethink their positions on specific education reforms? Might research findings do so? And when do new facts have the potential to alter public thinking? Answers to these questions can be gleaned from surveys conducted over the past three years under the auspices of Education Next and Harvard’s Program on Education Policy and Governance (PEPG). (For full results from the 2009 survey, download the PDF; for the 2007 and 2008 surveys see “What Americans Think about Their Schools,” features, Fall 2007, and “The 2008 Education Next—PEPG Survey of Public Opinion,” features, Fall 2008).

In a series of survey experiments, we find a substantial share of the public willing to reconsider its policy prescriptions for public schools. But this responsiveness is not uniform: presidential appeals are more persuasive to fellow partisans than to those who identify with the opposition party, research findings have the greatest impact when an issue remains unsettled, and learning basic facts has the biggest impact when those facts are not well known. None of this comes as a surprise, until one considers how stable aggregate public opinion has been over time.

Individual Volatility but Collective Stability

The opinions expressed by individuals, when surveyed on political issues to which they have not given much thought, can appear so fragile as to be meaningless. More than one psephologist has shown that it is not uncommon for people, when repeatedly asked the same question, to give a positive response the first time, offer a negative one on the second occasion, and then return to a positive position the third time around. In such situations, opinions seem to be so lightly held they lack any content whatsoever.

Our own data likewise reveal a fair amount of volatility in the views expressed in the three Education Next—PEPG surveys by individual respondents, many of whom participated in multiple years. Of those asked to grade the nation’s public schools in both 2008 and 2009, for example, only 59 percent assigned the same grade both years. Among those who gave a grade of “A” or “B” in 2008, 46 percent awarded a grade of “C” or lower in 2009.

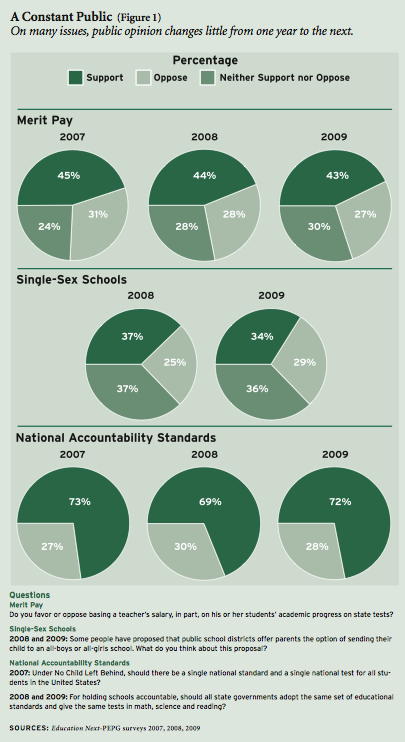

Numerous respondents also expressed different views on controversial policy issues across survey years. Among those who either completely or somewhat supported merit pay in 2008, 34 percent did not give that support one year later. Conversely, 29 percent of respondents who either completely or somewhat opposed the policy in 2008 did not express that opposition the next year. Similar churning is evident in the responses to questions concerning single-sex public schools, charter schools, and national standards.

The flip-flop that characterizes as much as one-third of individual responses does not produce equally large fluctuations in aggregate public opinion, however. On the contrary, the percentage of Americans holding to a particular point of view typically remains stable from one year to the next. On two-thirds of the domestic issues studied by political scientists Benjamin Page and Robert Shapiro, opinion did not change by more than 5 percentage points, despite the fact that years separated the fielding of different surveys. In the aftermath of major events—wars, economic recessions, or a terrorist attack—the views of the public as a whole may change abruptly and dramatically. More commonly, though, public opinion either holds firm or eases slowly in one direction or another.

Thinking on education policy follows the general pattern. In the three years of Education Next—PEPG surveys, we found little change in the responses to many of the questions posed in identical or similar ways across successive years (see Figure 1). Public opinion held steady on such issues as the introduction of merit pay for teachers, setting of uniform educational standards across the country, and the desirability of single-sex education.

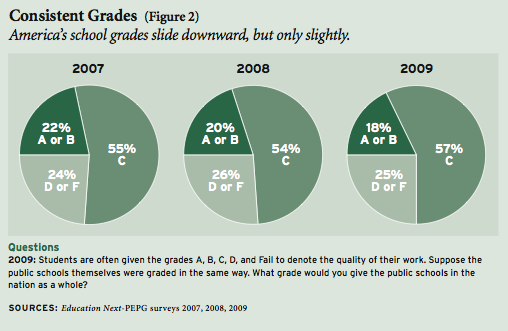

Nor did the public’s evaluation of American schools change much between 2007 and 2009, despite the media drumbeat of negative information about dropout rates and test scores. Indeed, the percentage of those surveyed willing to give the nation’s schools an “A” or a “B” slipped by just four points, from 22 percent in 2007 to 18 percent in 2009. Meanwhile, the share of adults giving schools a “D” or an “F” hovered around 25 percent throughout the three-year period (see Figure 2).

What accounts for the differences between individual and aggregate public opinion? Undoubtedly, part of the explanation is measurement error. Some of those answering our survey questions may have simply misread or misunderstood the questions in one year or the other, so their opinion seems to have changed when in fact it did not. Ordinarily, that kind of error balances itself out, as mistakes by one individual offset opposite errors by another.

But it seems unlikely that a third of our respondents would make such mistakes, and a substantial body of research on political behavior suggests that something else is going on as well. One prominent theory emphasizes the influence of public discourse. When people answer a survey item, they often draw upon a recent media report they have heard or conversation they have had with friends, relatives, or co-workers. Individual responses, then, vary from week to week as people are exposed to different claims. Collective opinion, however, remains constant so long as the general discourse does. If that theory is correct, then opinion in the aggregate changes only when public discourse shifts—either by a major event or with the introduction of a new fact or a new political force.
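The arithmetic behind offsetting individual changes can be illustrated with a deliberately simplified two-state sketch, in which respondents either support a policy or do not. The 43 percent support level and the 34 percent departure rate among supporters are taken from the merit pay results reported in this article; the two-state simplification, the function name, and the derived entry rate are assumptions made for illustration, not figures from the survey.

```python
def offsetting_flows(support, leave_rate):
    """Two-state sketch: respondents either support a policy or do not.

    support    -- share of respondents supporting the policy in year 1
    leave_rate -- share of year-1 supporters who no longer support it in year 2
    """
    # Entry rate among non-supporters that would exactly offset the departures.
    # This value is derived for illustration; it is not reported in the survey.
    enter_rate = leave_rate * support / (1 - support)

    # Aggregate support in year 2: those who stayed plus those who joined.
    next_support = support * (1 - leave_rate) + (1 - support) * enter_rate

    # Share of ALL respondents whose answer differs between the two years.
    switched = support * leave_rate + (1 - support) * enter_rate
    return enter_rate, next_support, switched


enter_rate, next_support, switched = offsetting_flows(support=0.43, leave_rate=0.34)
print(f"entry rate needed among non-supporters: {enter_rate:.1%}")
print(f"aggregate support in the following year: {next_support:.1%}")
print(f"share of all respondents who switched:   {switched:.1%}")
```

Under these assumptions, more than a quarter of individuals change their answers while the aggregate figure does not move at all, which is the pattern of individual volatility and collective stability described above.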

On some education issues, public discourse has changed since 2007. For instance, support for the federal No Child Left Behind Act has eroded, as evidence accumulated that the federal law was not living up to the promise of its grossly overstated name and politicians in both major parties found it to be an easy target (see Figure 3). Between 2007 and 2008, the share of adults who thought the law should be renewed (with no more than minor changes) fell by 7 percentage points. Support for the law stabilized after 2008, however, and roughly half the population still supports its reenactment with no more than modest revisions. And as we saw in previous years, a randomly selected group of respondents who were asked about “federal accountability policy” rather than “No Child Left Behind” expressed even higher levels of support.

Similarly, as the current recession deepens, we see hints of growing taxpayer resistance to the rising cost of education. Support for increased spending on public education fell from 51 to 46 percent between 2007 and 2009. Confidence that spending more on schools would enhance school quality fell by a similar amount, from 59 to 53 percent. Still, these changes remain modest. Facing the most significant economic downturn since the Great Depression, most Americans continue to support increased spending on their local public schools.

What would it take, then, to move aggregate public thinking decisively in one direction or another? Might influential public figures, research findings, or factual knowledge lead at least some portions of the American public to update its thinking? To find out, we divided the more than 3,000 respondents to our 2009 survey into randomly chosen groups. The first group was simply asked its opinion about a policy question, while the second (and often a third or fourth) group was given some additional piece of information, such as the president’s position on the issue, a research finding, or a key fact. By comparing answers given by the different groups, which should be similar in composition, it is possible to gauge the impact of these additional sources of information on the public’s views. (For more methodological details, see sidebar.)

Professors or Politicians: Who Is More Influential?

We fielded our survey in March of 2009, when newly elected president Barack Obama enjoyed public approval ratings above 60 percent. The timing of the survey provided an ideal opportunity to estimate the impact an endorsement by a popular president can have on policy views.

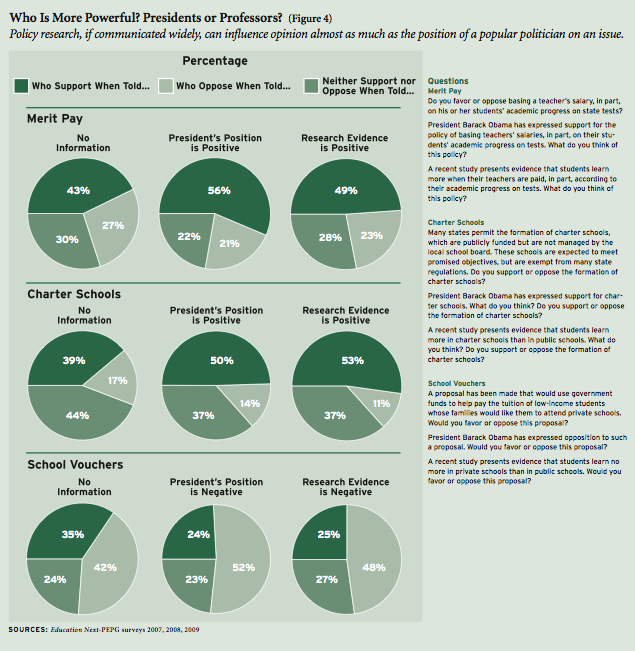

To ascertain the president’s influence, we conducted some simple experiments. On three topics (merit pay, charter schools, and school vouchers), one group of survey respondents was asked its opinion without any special prompt. Another group was told the president’s position on the issue before being asked for its own. A third group was instead told about evidence from research on the policy’s effects on student learning. We did not cite a specific study, as the point was not to estimate the influence of any particular piece of research but rather the potential impact such evidence might have.

Merit Pay: When asked for an opinion straight out, a slight plurality of Americans sampled—43 percent—supported the idea of “basing a teacher’s salary, in part, on his or her students’ academic progress on state tests.” Twenty-seven percent opposed the idea, with the remaining 30 percent undecided. As noted above, that pattern of opinion has hardly budged since 2007.

Such stability over time, however, masks a propensity of some Americans to alter their views in light of an appeal by a popular political leader. Those informed of President Obama’s support for merit pay favored the idea by 13 percentage points more than those not so informed (see Figure 4). Obama’s backing had a particularly dramatic impact on African Americans, whose support jumped by 23 percentage points. Even many teachers were persuaded. Initially, only 12 percent of those not informed of Obama’s opinion thought merit pay a good idea, but that number jumped to 31 percent among those told of the president’s position. Obama’s endorsement caused support among Democrats to rise from 41 to 56 percent. Among Republicans, too, backing for the idea rose, albeit by a lesser amount (from 48 to 59 percent).

By comparison, policy research on the topic had a modest impact on public thinking. Among those told that “a recent study presents evidence that students learn more when their teachers are paid, in part, according to their students’ academic progress on tests,” support for merit pay climbed by just 6 percentage points above the support given when that information was withheld. The one subgroup to register especially large changes was African Americans, among whom support skyrocketed by 28 percentage points. Democrats were somewhat more responsive to research evidence than other segments of the public, with their support for merit pay increasing by 10 percentage points.

School Vouchers: Public opinion on school vouchers varied somewhat, depending on the way in which the question was worded. To one group of respondents we presented the issue as follows: “A proposal has been made that would give low-income families with children in public schools a wider choice, by allowing them to enroll their children in private schools instead, with government helping to pay the tuition. Would you favor or oppose this proposal?” In this instance, 40 percent of the respondents gave a favorable reply and 34 percent a negative one, with 27 percent taking a middling position. But when we posed the question slightly differently—asking about a “proposal that would use government funds to help pay the tuition of low-income students whose families would like them to attend private schools”—just 35 percent supported the idea. In this instance, a small alteration in wording shifted public opinion by 5 percentage points.

We also find that public support for vouchers declined by 5 percentage points between 2008 and 2009, perhaps as a result of the opposition to vouchers expressed by most Democratic presidential candidates during that party’s extended primary-election campaign, which conceivably could have altered the balance of public discourse. That interpretation is reinforced by the impact that President Obama’s position can have on public opinion. Overall, the percentage favoring vouchers was 11 points lower among those informed of the president’s opposition than among those not so informed (24 percent versus 35 percent; see Figure 4). We also observed large partisan differences in the president’s influence on this issue. Whereas just 30 percent of Democrats expressed opposition to vouchers when asked outright, 52 percent did so after hearing of Obama’s opposition. By comparison, opposition among Republicans increased only slightly, from 50 to 54 percent. African Americans expressed higher levels of support for vouchers than did the population as a whole (57 percent), but support also was 12 percentage points lower among those African Americans told of presidential opposition.

A study that “presents evidence that students learn no more in private school than in public schools” depressed support for vouchers by 10 percentage points overall, an impact almost as large as presidential position taking. The same research evidence reduced support among Democrats by 15 percentage points, as compared to 6 percentage points for Republicans.

Charter Schools: Most Americans have yet to make up their minds about charter schools. Though 39 percent expressed support and only 17 percent signaled opposition in 2009, 44 percent remained undecided. These responses look much as they did in both 2007 and 2008, an indication that public discourse on charters has not changed significantly in recent years.

Despite that stability of public opinion about charters, aggregate support increased by 11 percentage points when respondents were told that Obama backed them (see Figure 4). We again found evidence that Obama’s impact has a partisan tinge. Among his fellow Democrats, Obama’s support is an unmitigated asset for charter school advocates, lifting support from 35 to 47 percent. But among Republicans, the percentage favoring charters increased by only 5 points (from 47 to 52 percent) upon learning of Obama’s endorsement. That endorsement actually decreased the proportion of Republicans who “completely” supported charter schools, from 22 to 15 percent.

When it comes to charter schools, research findings appear every bit as influential as a popular president. Among respondents told that recent research showed “students learn more in charter schools than in public schools,” support for charter schools rose by 14 percentage points. Among African Americans, the percentage who “completely” supported charter schools climbed by fully 23 percentage points, from 14 to 37 percent. Hispanics, meanwhile, were least persuaded by the evidence; only 5 percent altered their opinions. As they did on the previous items, Democrats appear to be more impressed by research than Republicans. Among those given evidence that charter schools enhance student learning, Democratic support for charter schools shot upward by 18 percentage points to 53 percent (compared to 35 percent among those not so informed), while the percentage of Republicans favoring such schools shifted by just 12 percentage points.

When all three issues—merit pay, vouchers, and charters—are considered together, a case can be made that new policy research, if communicated widely, can have an impact rivaling that of an influential president at the peak of his popularity. Admittedly, evidence from the research community does not have the same consistent impact on opinion as Obama’s position taking, which at the time of our survey could move overall public opinion by anywhere from 11 percentage points (in the case of charters) to 13 percentage points (in the case of merit pay). But the impact of a study is of comparable magnitude, ranging from 6 percentage points (in the case of merit pay) to 10 percentage points (in the case of vouchers) to 14 percentage points (in the case of charters). Research appears particularly influential among Democrats and when the general public’s own views have yet to take shape. That half the public has yet to make up its mind about charter schools may provide researchers with an opportunity to shape the public conversation going forward.

Stubborn Facts

How about raw facts concerning the state of American education? What does the public actually know about the performance of the nation’s public schools and the resources devoted to them? And is the public willing to update its views when told the truth?

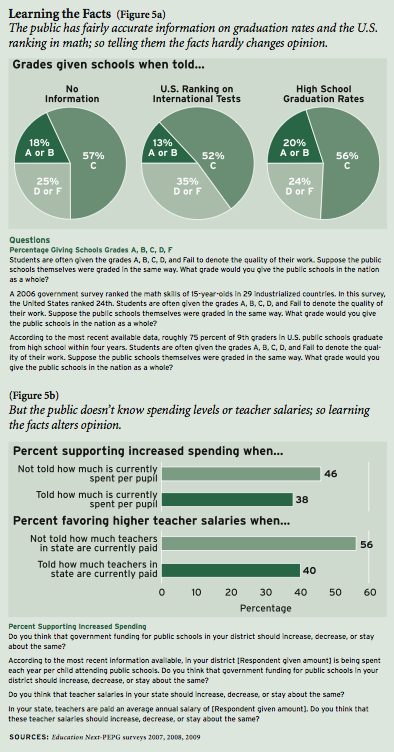

We conducted additional experiments to investigate these issues. In 2007, we asked respondents to estimate average per-pupil expenditures within their local school district and the average teacher salaries in their states. When we discovered that those surveyed, on average, underestimated per-pupil expenditures by more than half and teacher salaries by roughly 30 percent, we wondered whether people had equally poor information about the performance of American high schools (see “Educating the Public,” features, Summer 2009). So in 2009 we asked a random third of our sample to estimate high school graduation rates and another third to estimate the international standing of U.S. 15-year-olds in math. The remaining two-thirds of the sample was told the truth about one or the other of these matters, allowing us to see whether people’s assessments of their schools differed when given accurate information.

To our surprise, the public had a far more accurate understanding of student performance than it had of teacher salaries and per-pupil spending. When it comes to high school graduation rates nationwide, the best available estimates from the U.S. Department of Education suggest that roughly 75 percent of those who enter 9th grade graduate within four years, a far cry from the goal of universal high school completion by the year 2000, to which the president of the United States and all 50 governors committed themselves in 1989. When asked to give their own estimate, without any hint or help as to what the right answer might be, those surveyed came up with an even more pessimistic estimate of 66 percent, 9 percentage points below actual levels. Excluding those respondents who gave answers of less than 25 percent (on the grounds that they may have misunderstood the question or not taken it seriously) increases the average estimate only slightly to 69 percent. Either estimate is nonetheless a good deal closer to the truth, and a good deal less optimistic about it, than the wildly inaccurate estimates that the public offered about teacher salaries and school expenditures.

The public was only slightly less accurate when it came to estimating how well 15-year-olds in the United States do in math, as compared to students in 29 of the leading industrialized countries. Here the correct answer, according to the latest tests administered by the Organisation for Economic Co-operation and Development’s Programme for International Student Assessment (PISA), is 24th out of 29. Both the average and the median guess were 18th, a bit more optimistic than actual PISA results but not too far off the mark. Clearly, Americans have not been deceived into believing that our students are outperforming their counterparts abroad.

So what happens when the public is told the truth? Not much, it turns out, if people already have a pretty solid grasp of the relevant facts. When informed that 75 percent of students graduated from high school, the public took that as neutral to mildly good news, as the percentage giving schools an “A” or “B” increased by a trivial 2 points and the percentage giving a “D” or “F” dropped by 1 point (both statistically insignificant changes). Learning the truth about the international standing of American students had a bigger impact, reducing the share of respondents giving a grade of “A” or “B” from 18 to 13 percent and increasing the share of respondents giving a “D” or “F” by 10 percentage points (see Figure 5a).

In the case of spending, however, learning the truth shifted opinion by a larger margin (see Figure 5b). For the nation as a whole, overall support for higher spending levels dropped by 8 percentage points (from 46 to 38 percent) when respondents were informed of actual per-pupil expenditures in their own district. The impacts of this information varied widely across subgroups. Told the truth about per-pupil expenditures, the share of African Americans willing to support additional spending plummeted from 82 to 48 percent. Perhaps not surprisingly, teachers held firm in their commitment to higher spending.

Even larger impacts are observed on support for increased teacher salaries. When informed about actual average teacher salaries in their state, respondents’ support for higher salaries dropped by 16 percentage points (from 56 to 40 percent). In this instance, roughly comparable impacts are observed for all three ethnic groups. But as one might again expect, teachers’ support for higher salaries was relatively undiminished, dropping just 6 percentage points (from 77 to 71 percent).

Why does the public have a generally accurate understanding of school performance but a gross misunderstanding of the amount that is spent on education? The answer may have to do with the availability of information on these issues. It is true that the U.S. Department of Education regularly releases information on all four topics in the same document, the Digest of Education Statistics. But student dropout rates and student performance on international tests receive much more extensive attention in the news media than information about per-pupil spending in individual school districts or teacher salaries in specific states. The cost of education is divided among federal, state, and local governments, and the total sums are difficult to assemble until that is done by the federal government several years after the fact.

Organizations outside of the media are unlikely to pick up the slack. With a large share of the population convinced that schools and teachers should be given more money, or at least be held harmless, few if any interest groups or politicians have an incentive to dramatize the fact that spending levels and teacher salaries are much higher than most people believe. So school reformers instead focus on low test scores and high dropout rates as justification for merit pay, school accountability initiatives, and other school choice reforms. The public may only learn about the true cost of education when a popular political figure stakes a political career on telling them. That, we suspect, is as likely as the Cubs winning the Super Bowl.

Surveys and Realities

Our experiments only hint at what could happen in the real world of school politics. It is one thing to inform a captive audience of survey respondents about the president’s position, the results from research, or a key fact about American education. Reaching the entire American public is a completely different matter. To change opinions, one must get the public’s attention. And that is no easy task, when jobs, family life, entertainment, and sports command a higher priority in most households. Only 38 percent of the respondents to our survey report paying “a great deal” or “quite a bit” of attention to education issues. And even the power of presidents is limited by the large number of issues to which they must attend. President Obama’s genuine thoughts on such matters as merit pay, charters, and vouchers, however deeply held, necessarily command far less of his time and energy than the multitude of foreign policy, economic, and other domestic problems to which he must devote his attention.

Still, our findings suggest that a well-publicized stance taken by a popular president on an education issue might shift the opinions of large segments of the American public. Similarly, scholarship appears to be a potent weapon for groups with policy agendas they wish to pursue, as the committed can broadcast research findings with great repetition. Indeed, any group that seeks to change public opinion without gathering research to back its positions is leaving a flank unprotected. Finally, advocates are well advised to search for facts the public does not understand, and then to communicate those facts as widely as they can. Just as nothing affects opinion about an ongoing war as quickly as communiqués from the front, so too a better understanding of the facts about the public schools could in the long run shape American education.

William G. Howell is Sydney Stein Professor of American Politics at the Harris School of Public Policy at the University of Chicago. Paul E. Peterson is professor of government at Harvard University, senior fellow at the Hoover Institution, and editor-in-chief of Education Next. Martin R. West is assistant professor of education at the Harvard Graduate School of Education and executive editor of Education Next.

Survey Methods

This survey, sponsored by Education Next and the Program on Education Policy and Governance (PEPG) at Harvard University, was conducted by the polling firm Knowledge Networks (KN) between February 25 and March 13 of 2009. KN maintains a nationally representative panel of adults, obtained via list-assisted random-digit-dialing sampling techniques, who agree to participate in a limited number of online surveys. Because KN offers members of its panel free Internet access and a WebTV device that connects to a telephone and television, the sample is not limited to current computer owners or users with Internet access. When recruiting for the panel, KN sends out an advance mailing and follows up with at least 15 dial attempts. The panel, then, is updated quarterly. Detailed information about the maintenance of the KN panel, the protocols used to administer surveys, and the comparability of online and telephone surveys is available online (www.knowledgenetworks.com/quality/).

The main findings from the Education Next—PEPG survey reported in this essay are based on a nationally representative stratified sample of U.S. adults (age 18 years and older) and oversamples of Hispanics and non-Hispanic blacks, public school teachers, and residents of Florida (the last group for supplemental analyses not reported here). The combined sample of 3,251 respondents consists of 2,153 non-Hispanic whites, 434 non-Hispanic blacks, 481 Hispanics, and 183 members of other ethnic groups; 709 public school teachers and 948 residents of Florida; and 1,694 self-identified Democrats and 1,265 self-identified Republicans. We use post-stratification population weights to adjust for survey nonresponse as well as for the oversampling of teachers and Floridians. These weights ensure that the observed demographic characteristics of the analytic sample match the known characteristics of the national adult population.
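The logic of reweighting an oversampled group can be sketched in a few lines. This is a minimal, one-dimensional illustration rather than the weighting procedure actually used for the survey: the sample counts (709 teachers among 3,251 respondents) come from the paragraph above, while the population shares, the function name, and the restriction to a single teacher/non-teacher split are assumptions made for illustration.

```python
def poststratification_weights(sample_counts, population_shares):
    """Weight for each group = population share / sample share."""
    total = sum(sample_counts.values())
    return {
        group: population_shares[group] / (count / total)
        for group, count in sample_counts.items()
    }


# Sample counts reported in the article: 709 teachers among 3,251 respondents.
sample_counts = {"teacher": 709, "non_teacher": 3251 - 709}

# HYPOTHETICAL population shares, chosen only to illustrate the mechanics.
population_shares = {"teacher": 0.02, "non_teacher": 0.98}

weights = poststratification_weights(sample_counts, population_shares)

# After weighting, each group's share of the sample matches its population share.
weighted_total = sum(sample_counts[g] * weights[g] for g in sample_counts)
weighted_teacher_share = sample_counts["teacher"] * weights["teacher"] / weighted_total

print(weights)
print(weighted_teacher_share)
```

Because teachers are overrepresented in the raw sample, each teacher receives a weight below 1 and each non-teacher a weight above 1, so that national estimates are not distorted by the oversample.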

On many items we conducted experiments to examine the effect of variations in the way questions are posed. The figures and tables present separately the results for the different experimental conditions. In these instances, respondents were randomly assigned to exactly one of at least two possible conditions. Reported effects in the figures and tables reflect differences observed across the baseline and experimental conditions.
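The comparison between a baseline and an experimental condition amounts to a difference between two proportions. The sketch below shows one common way to compute such a difference along with an approximate 95 percent confidence interval. The merit pay percentages (43 percent at baseline, 13 points higher among those told of the president's position) come from the article; the group sizes of 1,000 and the function name are hypothetical, since the number of respondents per condition is not reported here, and the calculation ignores survey weights.

```python
import math


def effect_of_prompt(p_base, n_base, p_treat, n_treat, z=1.96):
    """Difference in support (treatment minus baseline), in percentage points,
    with an approximate 95% confidence interval for two independent groups."""
    diff = p_treat - p_base
    # Standard error of a difference between two independent proportions.
    se = math.sqrt(p_base * (1 - p_base) / n_base
                   + p_treat * (1 - p_treat) / n_treat)
    return 100 * diff, 100 * (diff - z * se), 100 * (diff + z * se)


# Merit pay: 43 percent support at baseline, 56 percent when told of the
# president's position. Group sizes of 1,000 each are HYPOTHETICAL.
diff, low, high = effect_of_prompt(0.43, 1000, 0.56, 1000)
print(f"estimated effect: {diff:.1f} points (95% CI {low:.1f} to {high:.1f})")
```

With groups of this assumed size, the interval excludes zero by a wide margin. Smaller groups widen the interval, which is why subgroup effects are estimated less precisely than effects for the full sample.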

In general, survey results based on larger numbers of observations are more precise, that is, less prone to sampling variance, than those based on smaller numbers of observations. As a consequence, answers attributed to the national population are more precisely estimated than those attributed to subgroups. With 3,251 total respondents, the margin of error for responses given by the full sample in this survey is 1.7 percentage points (for items on which opinion is evenly split). The results presented for subgroups within the sample have larger margins of error, depending on their actual size. However, any differences in opinions or changes in opinions over time reported in the text are statistically significant unless otherwise noted.
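The reported margin of error is consistent with the standard formula for a proportion at the 95 percent confidence level under simple random sampling. The sketch below applies that formula to the full sample and to two of the subgroups mentioned in this sidebar. That the 1.7-point figure was computed exactly this way is an assumption; the function name is illustrative, and the calculation ignores any loss of precision due to weighting.

```python
import math


def margin_of_error(n, p=0.5, z=1.96):
    """Half-width of an approximate 95% confidence interval for a proportion,
    in percentage points, assuming simple random sampling."""
    return 100 * z * math.sqrt(p * (1 - p) / n)


full_sample = margin_of_error(3251)  # all 2009 respondents
teachers = margin_of_error(709)      # public school teacher oversample
panel = margin_of_error(300)         # roughly 300 respondents also interviewed in 2008

print(round(full_sample, 1))  # 1.7
print(round(teachers, 1))     # 3.7
print(round(panel, 1))        # 5.7
```

The margin shrinks with the square root of the sample size, so a subgroup one-quarter the size of the full sample has a margin of error about twice as large.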

Of the 3,251 respondents surveyed in 2009, approximately 300 had also been interviewed in 2008. For this group, it was possible to identify the consistency of responses to identical questions asked in both years.

Percentage totals do not always add to 100 as a result of rounding to the nearest percentage point.