What do new assessments aligned to the Common Core tell us? Not much more than what we already knew. There are large and persistent achievement gaps. Not enough students score at high levels. Students who performed well on tests in the past continue to perform well today. In short, while the new assessments may re-introduce these conversations in certain places, we’re not seeing dramatically different storylines.

To see how scores differ in the Common Core era, I collected school-level data from Maine. I chose Maine because it’s a small state with a manageable number of schools, it was one of the 18 states using the new Smarter Balanced test this year, and it has already released school-level results from tests given in the spring of 2015.

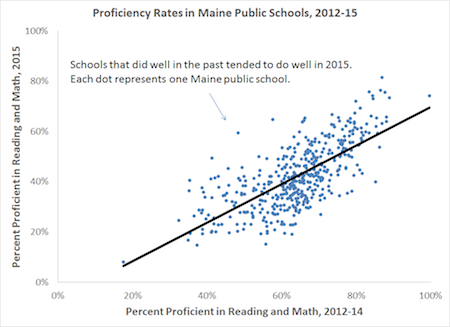

The graph above compares average math and reading proficiency rates across two time periods. The horizontal axis plots each school’s average proficiency rate from 2012-14 on Maine’s old assessments, while the vertical axis shows its proficiency rate in Spring 2015 on the new Smarter Balanced assessments.* There are 447 dots, each representing one Maine public school with sufficient data in all four years. The solid black line shows the linear relationship between the two time periods.

There are a couple of things to note about the graph. First, as has happened in many other places, proficiency rates fell. The average proficiency rate for these schools dropped from 64 to 42 percent, a decline of 22 percentage points. While a number of schools posted average proficiency rates in the 80s and even the 90s in 2012-14, no school scored above 82 percent this year (this shows up as white space at the top of the graph).

Second, there’s a strong linear relationship between the two sets of scores: the correlation between the two time periods was 0.71. Schools that did well in the past also tended to do well, on a relative basis, in 2015.

So what does all this mean? A few thoughts:

1. We don’t actually know why the scores fell as they did. Beyond whatever other changes are playing out in schools (not to mention that this year’s test-takers are a slightly different group than last year’s), states are using both new assessments and new cut scores to determine proficiency rates. We don’t know whether the tests are actually harder (although there’s good reason to think they are in some states) or whether the declines are mostly due to states raising the benchmark for what it means to be “proficient.”

2. There’s no obvious explanation for the year-to-year variation we do see. It could be because some groups of students performed better or worse on the old test than on the new one. Maybe they were better at bubble tests or worse at open-response questions (although logic suggests the same students would struggle in either format). Or it could just be random noise from year-to-year fluctuations. That’s especially likely given that we’re talking about individual schools, which may test small numbers of students and are therefore more susceptible to random movements up or down.
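To get a feel for how much noise small schools can introduce, one rough yardstick is the binomial standard error of a proportion, sqrt(p(1 - p)/n). The Python sketch below applies it at the 2015 statewide rate of 42 percent for a few hypothetical numbers of tested students. It assumes each student is an independent draw with the same underlying chance of scoring proficient, which is a simplification; real school scores also move because the students themselves change from year to year.

```python
import math

def proficiency_se(p: float, n_students: int) -> float:
    """Binomial standard error of a proficiency rate, in percentage points.

    Simplifying assumption: each tested student independently scores
    proficient with the same true probability p.
    """
    return 100 * math.sqrt(p * (1 - p) / n_students)

# Hypothetical school sizes, evaluated at the 42 percent statewide rate.
for n in (20, 50, 100, 400):
    se = proficiency_se(0.42, n)
    # Roughly 95% of purely random swings fall within about 2 SEs.
    print(f"n={n:4d}: SE ~ {se:.1f} points, ~95% band +/-{2 * se:.1f}")
```

Under these assumptions, a school testing 20 students could see its rate move by 20-plus points from chance alone, while one testing 400 would rarely move more than about 5.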

3. It’s not clear how much correlation we should have expected between old and new assessments. Is it a good thing that the correlation is strong? Is anyone surprised that schools with stronger performance in the past still perform well, and that weaker schools still lag? As Jersey Jazzman notes in his excellent (if snarky) deep dive into New York, school proficiency rates tend to be closely related from year to year, over much longer time spans, and even when the state sets new proficiency targets.

4. What does the strong correlation between testing years tell us about school accountability? For example, why did Maine (and many other states) need to pause accountability if the new test scores are in line with the old ones? States like Maine with NCLB waivers are now using normative comparisons to make accountability decisions, meaning absolute proficiency levels have little bearing on a school’s accountability. What matters is how each school compares to other schools. In a world where statewide proficiency rates fall 22 percentage points, a school whose proficiency rate falls by 21 percentage points is actually better off relative to its peers. Moreover, if states are worried about year-to-year fluctuations introducing noise into their accountability systems, they should be thinking about how to average over multiple years to increase the precision of their determinations. Too many places treat year-to-year changes as real when they may simply be artifacts of small sample sizes.
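The arithmetic behind both points is simple enough to sketch in Python. Below, `relative_change` is a hypothetical helper, not Maine’s actual accountability formula (which isn’t described here): it scores a school by how its change compares with the statewide 22-point drop. `multi_year_se` shows how averaging several years shrinks random noise, assuming the years are independent and equally noisy, which real cohorts only approximate.

```python
import math

# Statewide average change, in percentage points (64 -> 42 percent).
STATE_CHANGE = 42 - 64

def relative_change(school_before: float, school_after: float) -> float:
    """Hypothetical normative score: school's change minus the state's change.

    Positive means the school lost less ground than the state average.
    """
    return (school_after - school_before) - STATE_CHANGE

def multi_year_se(single_year_se: float, years: int) -> float:
    """Standard error of a multi-year average.

    Assumes each year's noise is independent with the same spread,
    so the error shrinks by a factor of sqrt(years).
    """
    return single_year_se / math.sqrt(years)

# Hypothetical school: 70% proficient before, 49% after (a 21-point drop).
print(relative_change(70, 49))             # 1: one point better than the state
print(round(multi_year_se(8.0, 3), 2))     # 4.62: a 3-year average vs. 8 for one year
```

The same logic cuts the other way: a school that dropped 25 points would score -3 here, even though its raw decline looks similar to the 21-point case.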

5. The new test results may mean very different things to different actors. Even though proficiency cut points don’t really matter for accountability decisions (see point #4 above), they do send a signal to parents and schools. But if all we cared about were more honest cut scores, states could have just kept their old assessments and raised the benchmark for what constituted “proficiency.” The logic behind the Common Core was not just that we’d be getting lower scores; it was that we’d be getting common and more accurate measures of student progress. Some states and colleges have seized on the Common Core as an opportunity to give clearer guidance to students about what it means to be “college-ready,” but it’s still too early to tell if the promise of Common Core is becoming a reality.

*Update: Per a reader’s note, I flipped the graph’s axes from my original version. I made a few subsequent edits to the text, but the substance doesn’t change.

—Chad Aldeman

This post originally appeared on Ahead of the Heard.