In November 2022, the startup OpenAI released a “research preview” of ChatGPT, a computer program that could quickly and fluently answer questions posed in natural language, write essays and fictional tales in response to prompts, and hold up its end of a written conversation on a startlingly wide range of topics. ChatGPT and its successors will disrupt and transform many human activities—including education—for better or for worse.

To date, most education reporting has highlighted ChatGPT’s potential to facilitate cheating and plagiarism. On HITC, Ellissa Bain described how ChatGPT could “Write Your Papers in Seconds,” explaining that this is “great for students” and “examiners will never know.” It’s not at all great for student learning, but it can definitely fool examiners; a team at the New York Times Upshot confirmed that a small group of experts—including a teacher, a professor, a tutor, and a famous children’s author—often failed to distinguish between ChatGPT’s output and the writing of actual fourth and eighth graders in response to essay prompts from the National Assessment of Educational Progress. In The Atlantic, teacher Daniel Herman pronounced “The end of high-school English,” explaining that “what GPT can produce right now is better than the large majority of [student] writing.” ChatGPT could compare Hamlet to Beloved, illustrate Buddhist ideas using a pet hamster, and write a convincing essay describing how its (fabricated) experience volunteering at a pet shelter had prepared it for success at Stanford. The bot’s ability to fabricate an admissions essay raises the question of whether colleges that no longer require standardized tests will need to drop the essay requirement as well.

But does ChatGPT have legitimate educational uses? I got to wondering if ChatGPT could offer correction and guidance as students worked through math problems. Some tutoring systems (such as Mathia or ASSISTments) can offer help when students go off-track, but that help often comes in the form of canned text or video highlighting challenges that, though common, do not always address what is tripping up a specific student in a particular problem. And if students remain confused, current tutoring systems cannot respond to follow-up questions in natural language.

Can ChatGPT provide feedback and answer questions about math in a more tailored and natural way? The answer, for the time being, is no. Although ChatGPT can talk about math superficially, it doesn’t “understand” math with real depth. It cannot correct mathematical misconceptions; it often introduces misconceptions of its own; and it sometimes makes inexplicable mathematical errors that a basic spreadsheet or hand calculator wouldn’t make.

Here, I’ll show you.

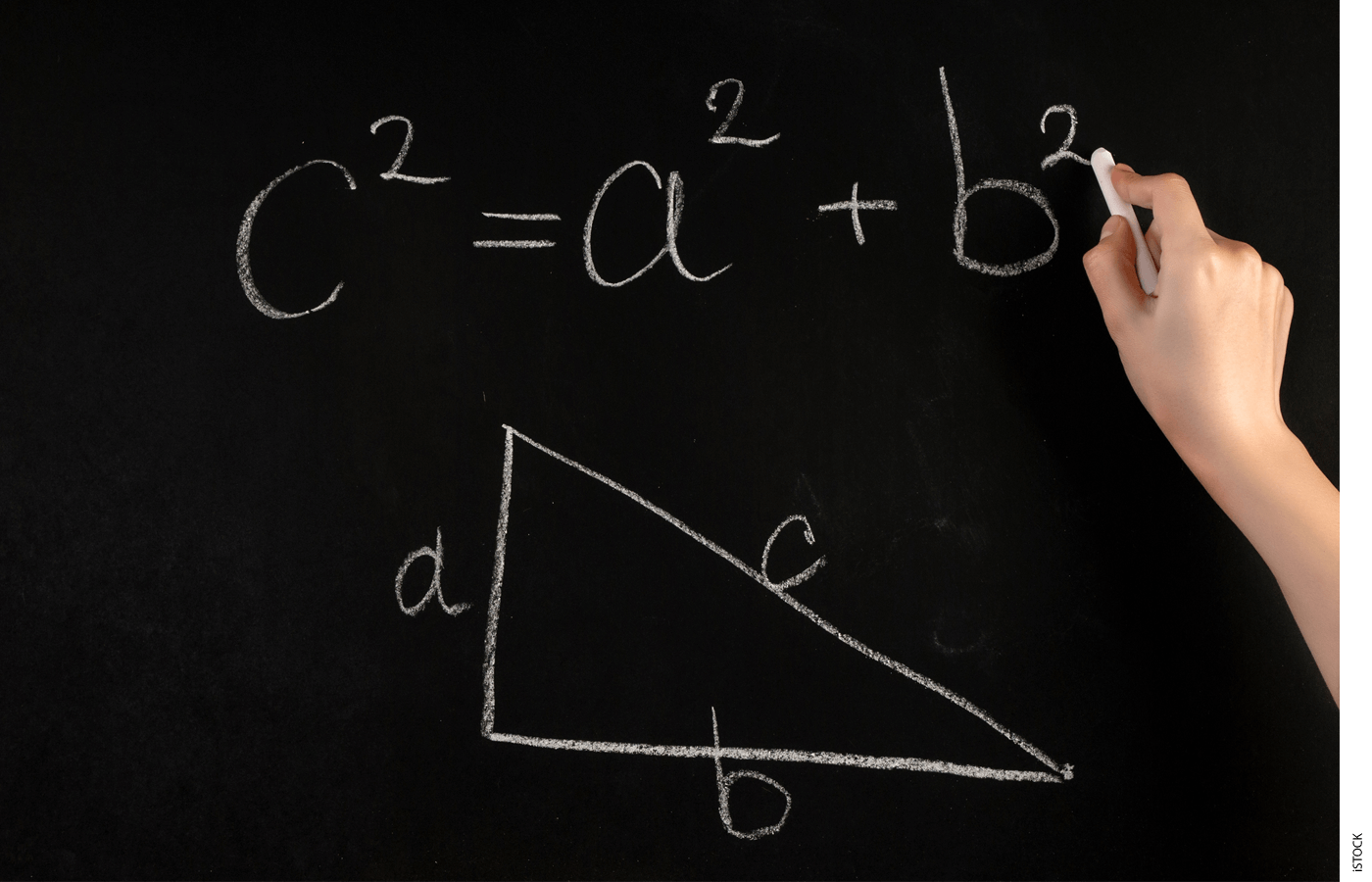

Can ChatGPT talk about Pythagoras?

A couple of days ago, I asked ChatGPT the following question:

Explain how to use Pythagoras’ theorem in terms that a middle school math student would understand. Include an example.

ChatGPT provided its answer in less time than it would take me to type it:

Pythagoras’ theorem is a way to find the length of a missing side of a right triangle (a triangle with one 90 degree angle). It states that in a right triangle, the square of the length of the hypotenuse (the side opposite the right angle) is equal to the sum of the squares of the other two sides.

So far I was impressed. I’d prefer to see these two long sentences unpacked into three or four short ones, and I’d like to see a right triangle defined explicitly as one with a right angle. But ChatGPT’s answer was accurate, and it followed up with examples—not just the one example I requested, but two. [1]

Imagine we have a right triangle where the two shorter sides (called the legs) are 3 inches and 4 inches long. We want to find the length of the hypotenuse (the side opposite the right angle).

According to Pythagoras’ theorem, we can find the length of the hypotenuse by using the formula:

hypotenuse^2 = leg1^2 + leg2^2

Plugging in the values from our triangle, we get:

hypotenuse^2 = 3^2 + 4^2

hypotenuse^2 = 9 + 16

hypotenuse^2 = 25

So the length of the hypotenuse is the square root of 25, which is 5 inches.

Here’s another example:

Imagine we have a right triangle where the hypotenuse is 5 inches long and one of the legs is 3 inches long. We want to find the length of the other leg.

We can use Pythagoras’ theorem to solve for the missing leg by using the formula:

leg^2 = hypotenuse^2 – leg1^2

Plugging in the values from our triangle, we get:

leg^2 = 5^2 – 3^2

leg^2 = 25 – 9

leg^2 = 16

So the length of the missing leg is the square root of 16, which is 4 inches.

I hope this helps! Let me know if you have any questions.

These examples were accurate, too, but they both described the same right triangle, with sides of length 3, 4, and 5—a longstanding cliché in introductory geometry. It would have been better to describe two different triangles, and it would have been better to explain where the formula for the second example came from. I asked for clarification:

Wait. Where did you get this formula? leg^2 = hypotenuse^2 – leg1^2

ChatGPT explained:

In a right triangle, the hypotenuse is the side opposite the right angle, and the other two sides are called the legs. The formula I provided, leg^2 = hypotenuse^2 – leg1^2, is simply another way to write Pythagoras’ theorem, which states that in a right triangle, the square of the length of the hypotenuse is equal to the sum of the squares of the other two sides.

In other words, if you know the length of the hypotenuse and one of the legs in a right triangle, you can use this formula to find the length of the other leg.

This bit of arm-waving—it’s “just another way”—would not be terribly useful to a confused student wondering how the second formula was related to the first. ChatGPT followed up with an example, but it was the same example it had already given, worded exactly the same way.
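For comparison, the missing step is short. The derivation below is a sketch of how the second formula follows from the first; it is not part of ChatGPT's output. The label `leg2` is used here for the side ChatGPT simply called `leg`. Subtracting leg1^2 from both sides of the theorem isolates the unknown leg:

```latex
\begin{align*}
\text{hypotenuse}^2 &= \text{leg1}^2 + \text{leg2}^2
  && \text{(Pythagoras' theorem)} \\
\text{hypotenuse}^2 - \text{leg1}^2 &= \text{leg2}^2
  && \text{(subtract } \text{leg1}^2 \text{ from both sides)} \\
\text{leg2}^2 &= \text{hypotenuse}^2 - \text{leg1}^2
  && \text{(swap the two sides)}
\end{align*}
```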

So far ChatGPT’s output wasn’t pedagogically optimal, but it wasn’t terrible, either, and I couldn’t say that every human geometry teacher would have explained things better.

But does ChatGPT really “get” Pythagoras?

But could ChatGPT go deeper? Teaching consists of more than explanations and examples. A Schaum’s Outline can provide those. But good teachers can also anticipate, diagnose, and correct student misunderstandings. So I asked ChatGPT:

What are some common misunderstandings of Pythagoras’ theorem?

ChatGPT responded with four examples, which I’ll take just slightly out of order. The first three were howlers:

Some common misunderstandings about Pythagoras’ theorem include:

- Believing that it only works for right triangles. While Pythagoras’ theorem is most commonly used to find the length of a missing side in a right triangle, it can also be used to determine whether a triangle is a right triangle. If the lengths of the sides of a triangle satisfy the formula of Pythagoras’ theorem (i.e., if the square of the length of the hypotenuse is equal to the sum of the squares of the other two sides), then the triangle is a right triangle.

- Believing that it only works for right triangles with a 90 degree angle: Pythagoras’ theorem is specifically designed for right triangles, which have one 90 degree angle. However, it is possible for a triangle to have two acute angles (angles less than 90 degrees) and still be a right triangle.

- Believing that it only works for right triangles with legs that are perpendicular to each other: In a right triangle, the sides that are not the hypotenuse are called the legs. While it is true that the legs of a right triangle are typically perpendicular to each other, this is not a requirement for the theorem to be applicable. As long as a triangle has one 90 degree angle, Pythagoras’ theorem can be used to find the length of a missing side.

Not one of these three “common misunderstandings” is actually wrong, and the fact that ChatGPT listed them as wrong suggests that it has some misunderstandings of its own. ChatGPT doesn’t seem to know that Pythagoras’ theorem only applies to right triangles, and it doesn’t realize that all right triangles have a 90 degree angle and legs that are perpendicular to each other. It also doesn’t seem to realize that no triangle can have more than one angle that is 90 degrees or greater, and that implies that it doesn’t know a fundamental fact of geometry: that the angles of a triangle must add to 180 degrees.

The other misconception that ChatGPT provided had more to it:

- Believing that it only works for right triangles with legs of integer lengths: Pythagoras’ theorem can be used to find the length of a missing side in a right triangle with sides of any length, not just integer lengths.

This is in fact a misconception. Unfortunately it is one that ChatGPT reinforced by repeatedly using the example of a right triangle whose sides are 3, 4, and 5 inches long. I gave the program some credit for recognizing the issue, but I also wondered if it could handle non-integer lengths itself. So I asked:

Suppose the hypotenuse of a right triangle is 7.1 miles long, and one of the legs is 3.85 miles long. How long is the other leg?

ChatGPT’s answer was clear but contained a surprising error:

To find the length of the other leg, you can use the Pythagorean theorem…. In this case, the hypotenuse has length 7.1 miles, and one leg has length 3.85 miles. Let’s call the length of the other leg x. The Pythagorean theorem can be written as an equation as follows:

(7.1)^2 = (3.85)^2 + x^2

Solving for x, we get:

x = sqrt((7.1)^2 – (3.85)^2)

Plugging in the values and simplifying, we get:

x = sqrt(50.41 – 15.0225)

x = sqrt(35.3875)

x = 5.93 miles

Therefore, the length of the other leg is 5.93 miles.

Do you see the error? It’s minor but weird. ChatGPT plugged in the wrong value (15.0225) for the square of 3.85 (which should be 14.8225), and it gave the wrong final answer (5.93 instead of 5.97). The last step doesn’t follow from the one before it, either: the square root of 35.3875 is about 5.95, not 5.93. It’s not a big mistake, but it’s not just a rounding error. Any student checking the steps with a hand calculator or spreadsheet would wonder, as I did, why they weren’t getting the same result.
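The check a student might run can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the function name `missing_leg` is a hypothetical helper chosen for this example, not anything from the ChatGPT session.

```python
import math


def missing_leg(hypotenuse: float, known_leg: float) -> float:
    """Return the unknown leg of a right triangle via Pythagoras' theorem.

    Hypothetical helper for illustration only.
    """
    if not 0 < known_leg < hypotenuse:
        raise ValueError("a leg must be positive and shorter than the hypotenuse")
    return math.sqrt(hypotenuse**2 - known_leg**2)


# Values from the question posed to ChatGPT.
hypotenuse = 7.1
known_leg = 3.85

known_leg_squared = known_leg**2                  # ChatGPT wrote 15.0225 here
other_leg = missing_leg(hypotenuse, known_leg)
chatgpt_last_step = math.sqrt(35.3875)            # root of ChatGPT's own intermediate value

print(round(known_leg_squared, 4))   # 14.8225
print(round(other_leg, 2))           # 5.97
print(round(chatgpt_last_step, 2))   # 5.95
```

Rounding is applied only when printing, so the intermediate values keep full floating-point precision.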

How can a computer program released in 2022 get the wrong answer when squaring a number? Is ChatGPT jerking my chain?

In light of ChatGPT’s confusion about the angles in a right triangle, I wondered if it would recognize a right triangle if I described it indirectly. So I started my next question:

Suppose a triangle has three sides called A, B, and C. A is 7 inches long and B is 7 inches long.

Now I meant to describe a right isosceles triangle, but I made a typo. I should have typed this:

The angle between A and C is 45 degrees, and so is the angle between B and C.

But instead I typed this:

The angle between A and C is 45 degrees, and so is the angle between A and B.

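which is impossible if A and B are the same length, as I had previously stated they were. Equal sides lie opposite equal angles, so the angle between B and C would also have to be 45 degrees, which leaves 90 degrees, not 45, for the angle between A and B. Try to draw a triangle that meets my specifications; you can’t.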
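The inconsistency can also be confirmed numerically. The sketch below uses the law of cosines, which the article itself does not invoke; the helper names `third_side` and `angle_between` are hypothetical, introduced only for this illustration. It builds the triangle from the two 7-inch sides and the stated angle between A and B, then measures the angle between A and C to see whether it matches the description.

```python
import math


def third_side(a: float, b: float, angle_ab_deg: float) -> float:
    """Law of cosines: length of the side opposite the angle between a and b."""
    angle = math.radians(angle_ab_deg)
    return math.sqrt(a**2 + b**2 - 2 * a * b * math.cos(angle))


def angle_between(p: float, q: float, opposite: float) -> float:
    """Angle in degrees between sides p and q, given the side opposite that angle."""
    return math.degrees(math.acos((p**2 + q**2 - opposite**2) / (2 * p * q)))


A = B = 7.0

# As typed: the angle between A and B is 45 degrees.
c_typed = third_side(A, B, 45)
angle_ac_typed = angle_between(A, c_typed, B)   # side B lies opposite this angle
print(round(angle_ac_typed, 1))                 # 67.5, not the 45 degrees stated

# As intended: 45-degree angles at C force a 90-degree angle between A and B.
c_intended = third_side(A, B, 90)
angle_ac_intended = angle_between(A, c_intended, B)
print(round(c_intended, 2))                     # 9.9
print(round(angle_ac_intended, 1))              # 45.0
```

With the typed description, the angle between A and C comes out at 67.5 degrees, so no triangle satisfies all three stated conditions at once. With the intended description, everything is consistent and C is about 9.9 inches.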

Now ChatGPT could have expressed confusion or asked for clarification, but it didn’t. It plowed right ahead. When I finished my question,

What is the length of side C?

ChatGPT started its answer:

Since the angle between A and B is 45 degrees, and the angle between A and C is also 45 degrees, the triangle is an isosceles right triangle, where A and B are the legs and C is the hypotenuse….

This doesn’t make sense. If A and B are the legs of a right triangle, the angle between them can’t be 45 degrees; it has to be 90. ChatGPT went ahead and calculated the length of C using Pythagoras’ theorem, but it had revealed something important: it didn’t have a coherent internal representation of the triangle that we were talking about. It couldn’t visualize the triangle as you or I can, and it didn’t have any equivalent way to catch errors in verbal descriptions of visual objects.

In short, ChatGPT doesn’t really “get” basic geometry. It can crank out reams of text that use geometric terminology, but it literally doesn’t know what it is talking about. It doesn’t have an internal representation of geometric shapes, and it occasionally makes basic calculation errors.

The problem goes beyond geometry

Geometry is not the only academic area where ChatGPT has trouble. In my very next question, motivated by the role that phonics plays in teaching young children to read, I asked ChatGPT to translate a couple of sentences into the International Phonetic Alphabet (IPA). ChatGPT said it couldn’t do that, and I give it credit for knowing its limits, but then it suggested that I use Google Translate. When I reported back that Google Translate can’t use IPA, either, ChatGPT apologized for the misunderstanding.

What is ChatGPT doing? It is bloviating, filling the screen with text that is fluent, persuasive, and sometimes accurate—but it isn’t reliable at all. ChatGPT is often wrong but never in doubt. It acts like an expert, and sometimes it can provide a convincing impersonation of one. But often it is a kind of b.s. artist, mixing truth, error, and fabrication in a way that can sound convincing unless you have some expertise yourself.

The educational applications of a tool like this are limited. All over the internet, teachers are discussing the possible uses of ChatGPT to tutor students, write lesson plans, or generate quiz questions. They need to be careful. While ChatGPT can generate reams of basic material, and some of it will be useful, teachers need to verify everything to avoid passing on misinformation to their students.

My experience was disappointing, but perhaps I should not have been surprised. After all, on December 10, OpenAI’s CEO Sam Altman tweeted that ChatGPT has problems with “robustness and truthfulness” and “it’s a mistake to be relying on it for anything important right now.” Other experts have commented that ChatGPT sometimes “lies” or “hallucinates.” ChatGPT’s interface alerts users that the program “may occasionally generate incorrect information.” When it comes to geometry or the capabilities of Google Translate, this is a grave understatement.

These could turn out to be short-lived problems, fixed in the next version—or they could persist for many years. There are about 250 exceptionally talented people working at OpenAI, and the fact that they released ChatGPT in its present condition suggests that its problems may not have an easy fix.

In the not-too-distant future, we may have intelligent programs that can tutor students in specific subjects—programs that can converse in natural language, draw on deep and accurate representations of subjects like geometry, and recognize and correct the common missteps and misconceptions that lead to wrong answers. But we are not there today. Today some tools (e.g., Wolfram Alpha) can do geometry, and some (e.g., Mathia or CTAT) can trace some wrong answers to their sources—but those tools rely on explicit subject-specific programming and cannot converse in natural language. Meanwhile AI tools like ChatGPT can converse fluently in natural language—but don’t seem to understand the basics of core academic subjects like geometry.

Despite its limitations, ChatGPT is publicly available, and some students and teachers are going to use it. Not all of them will use it carefully. We may not be prepared for the consequences.

Paul T. von Hippel is professor and associate dean for research in the LBJ School of Public Affairs at the University of Texas at Austin.

1. I’ve added indenting to make ChatGPT’s calculations more readable.