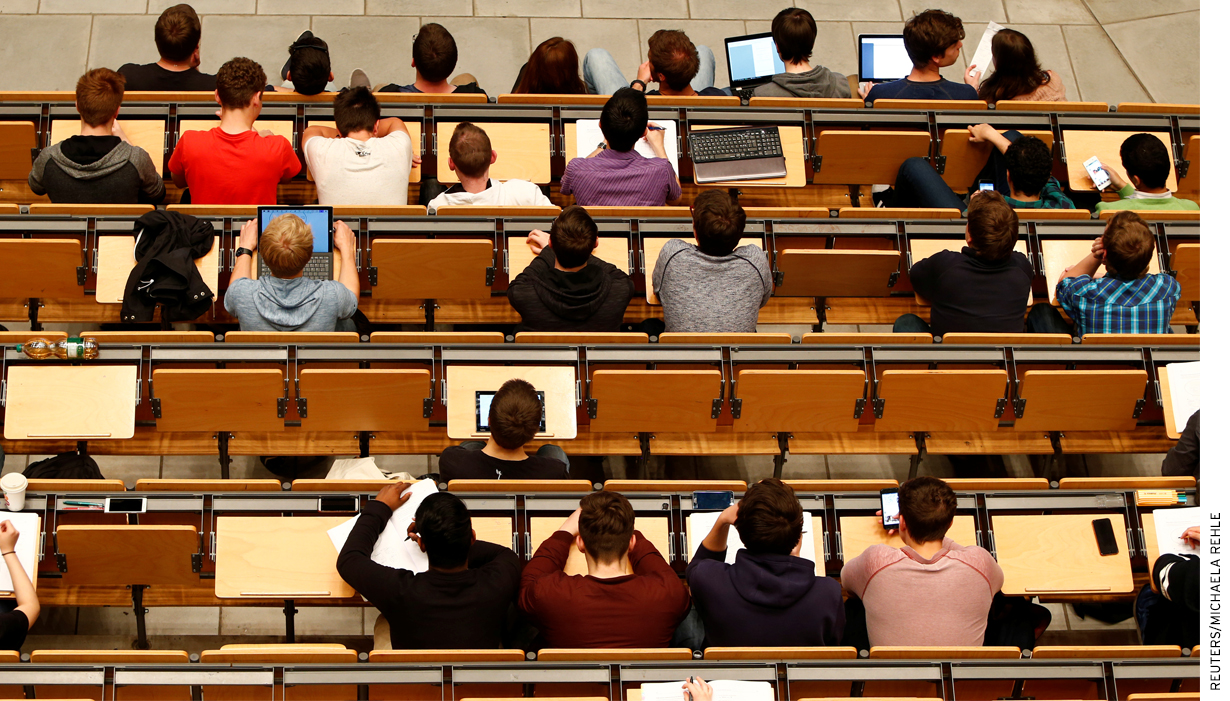

Do computers help or hinder classroom learning in college? Step into any college lecture and you’ll find a sea of students with laptops and tablets open, typing as the professor speaks.

With their enhanced ability to transcribe content and look up concepts on the fly, are students learning more from lecture than they were in the days of paper and pen?

A growing body of evidence says “No.” When college students use computers or tablets during lecture, they learn less and earn worse grades. The evidence consists of a series of randomized trials, in both college classrooms and controlled laboratory settings.

Randomized trials matter because students who choose to use laptops in class are likely different from those who don’t. They may be more easily distracted or less interested in the course material. Alternatively, they may be the most serious (or wealthiest) students who have invested in technology to support their learning.

Randomization assures us that, on average, the students using electronics in a study are comparable at baseline to those who are not. That means that any difference we observe between the two groups at the end of the study can be attributed to the “treatment,” which in this case is laptop use.
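To make the logic of randomization concrete, here is a minimal simulation sketch. Everything in it is hypothetical: the "motivation" trait, the assumption that less motivated students are more likely to choose laptops, and the sample size are illustrative choices, not features of any study discussed here. It compares the baseline gap between laptop users and non-users when students choose for themselves and when a coin flip decides.

```python
import random
import statistics


def simulate(n_students=10000, seed=0):
    """Return baseline motivation gaps under self-selection and under random assignment."""
    rng = random.Random(seed)
    # Hypothetical baseline trait; not drawn from any of the studies.
    motivation = [rng.gauss(0, 1) for _ in range(n_students)]

    # Self-selection (assumed): less motivated students more often pick a laptop.
    chose_laptop = [m + rng.gauss(0, 1) < 0 for m in motivation]
    # Randomization: a coin flip decides, independent of motivation.
    assigned_laptop = [rng.random() < 0.5 for _ in motivation]

    def baseline_gap(uses_laptop):
        # Mean motivation of laptop users minus mean motivation of everyone else.
        laptop = [m for m, u in zip(motivation, uses_laptop) if u]
        other = [m for m, u in zip(motivation, uses_laptop) if not u]
        return statistics.mean(laptop) - statistics.mean(other)

    return baseline_gap(chose_laptop), baseline_gap(assigned_laptop)


self_selected_gap, randomized_gap = simulate()
print(f"baseline gap with self-selection: {self_selected_gap:.2f}")
print(f"baseline gap with randomization:  {randomized_gap:.2f}")
```

Under these assumptions, the self-selected groups differ sharply before any lecture begins, while the randomized groups are nearly identical at baseline. That is why a gap in test scores between randomized groups can be read as an effect of laptop use rather than of who chose to use one.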

In a series of laboratory experiments, researchers at Princeton and the University of California, Los Angeles had students watch a lecture, randomly assigning them either laptops or pen and paper for their note-taking. [1] Understanding of the lecture, measured by a standardized test, was substantially worse for those who had used laptops.

Learning researchers hypothesize that, because students can type faster than they can write, a lecturer’s words flow straight from the students’ ears through their typing fingers, without stopping in the brain for substantive processing. Students writing by hand, by contrast, have to process and condense the material if their pens are to keep up with the lecture. Indeed, in this experiment, the notes of the laptop users more closely resembled transcripts than summaries of the lectures.

Taking notes can serve two learning functions: the physical storage of content (ideally, for later review) and the cognitive encoding of that content. These lab experiments suggest that laptops improve storage, but undermine encoding. On net, those who use laptops do worse, with any benefit of better storage swamped by worse encoding.

We could try to teach students to use their laptops better, nudging them to think about the material as they type. The researchers tried this in a second experiment, advising the laptop users that summarizing and condensing leads to more learning than transcription. This instruction had no effect on the results.

Students using laptops can also distract their classmates from their learning, another lab experiment suggests. [2] Researchers at York and McMaster recruited students to watch a lecture and then tested their comprehension. Some students were randomly assigned to do some short tasks on their laptops during the lecture (e.g., look up movie times). Others were allowed to focus on the lecture. All seats were randomly assigned.

As expected, the multitasking students learned less than those focused on the lecture, scoring about 11 percent lower on a test. What is more surprising: the learning of students near the multitaskers also suffered. Students who could see the screen of a multitasker’s laptop (but were not multitasking themselves) scored 17 percent lower on comprehension than those who had no distracting view. It’s hard to stay focused when a field of laptops open to Facebook, Snapchat, and email lies between you and the lecturer.

These studies, like all lab experiments, took place under artificial circumstances. Students were paid to participate, lectures were unrelated to actual coursework, and performance on tests had no bearing on college grades. This controlled setting allowed researchers to carefully manipulate conditions and thereby try to tease out the mechanisms underlying the effect of laptop use on learning.

But what happens in a real classroom, over multiple lectures? Perhaps laptop-using students review and encode their notes later, after class. They might even perform better on assessments, since they have more accurate notes for review. Further, students might work harder to stay focused on the lecture, even in the face of distractions, when their grades are at stake.

To capture these real-world dynamics requires randomly assigning hundreds of college students to different classroom conditions. At the United States Military Academy (USMA), a team of researchers took on this task. [3]

The USMA is a selective, liberal-arts college whose graduates go on to become officers in the US military. All students at the USMA take a semester-long, introductory economics class. The class is taught by professors in sections of no more than twenty students. Students in this introductory class all take the same multiple-choice and short-answer tests, which are administered online and graded automatically. This provides a consistent measure for comparisons of learning across sections.

The researchers randomly assigned these sections to one of three conditions: 1) electronics allowed, 2) electronics banned, and 3) tablet computers allowed, but only if laid flat on desks where professors could observe their use. Because professors at USMA teach multiple sections of the same class in a given semester, the researchers assigned each professor to more than one treatment condition.

At the end of the semester, students in the classrooms where electronics were allowed had performed substantially worse, with scores 0.2 standard deviations below those of the sections where electronics were banned. There was no discernible difference between sections where tablets were allowed (but restricted) and those where electronics were unrestricted.

In real-world education settings, a fifth of a standard deviation is a large effect. For example, the Tennessee STAR experiment found that children who were randomly assigned to smaller classrooms between kindergarten and third grade scored a fifth of a standard deviation higher than children in standard classrooms.
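For readers unfamiliar with effect sizes expressed in standard deviations, the following sketch shows one common way to compute them: the difference in group means divided by the pooled standard deviation. The scores are made up and chosen so the gap lands near 0.2; they are not the USMA data, and the study's own estimation method may differ. The final line assumes normally distributed scores to translate a 0.2 standard deviation drop into percentile terms.

```python
from statistics import NormalDist, mean, stdev


def standardized_difference(treated, control):
    """Difference in means divided by the pooled standard deviation."""
    n_t, n_c = len(treated), len(control)
    pooled_var = (
        (n_t - 1) * stdev(treated) ** 2 + (n_c - 1) * stdev(control) ** 2
    ) / (n_t + n_c - 2)
    return (mean(treated) - mean(control)) / pooled_var ** 0.5


# Made-up exam scores, for illustration only.
electronics_allowed = [68, 74, 71, 80, 65, 77, 72, 69]
electronics_banned = [69, 75, 72, 81, 66, 78, 73, 70]

effect = standardized_difference(electronics_allowed, electronics_banned)
print(f"effect size: {effect:.2f} standard deviations")

# Where a median student would land after a 0.2 SD drop, if scores were normal.
percentile_after_drop = NormalDist().cdf(-0.2) * 100
print(f"percentile after a 0.2 SD drop: {percentile_after_drop:.0f}")
```

Under the normality assumption, a drop of a fifth of a standard deviation moves a student from the 50th percentile to roughly the 42nd.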

We can criticize the external validity of any of these studies. How relevant, after all, is the experience of cadets learning economics to community college students learning Shakespeare? But the evidence-based strategy is not to ignore the studies; it is to consider the specific reasons that their results would or would not extrapolate to other settings.

The USMA authors argue compellingly that we would expect effects at other colleges to be, if anything, larger than those in their study. USMA courses are taught in small sections, where it is difficult for students to hide distracted computer use from their professors. Further, USMA students have strong incentives to perform, since class rank determines who gets the first pick of jobs after graduation.

The best way to settle this question of external validity, of course, is to replicate this experiment in more colleges. Until then, I find the existing evidence sufficiently compelling that I ban electronics in my classrooms.

Students with learning disabilities may need a laptop or tablet in order to participate in class. I (and every teacher I know) solicit and accommodate such requests. There is a loss of privacy, in that a student using a laptop is revealed as having a learning disability. This loss of privacy has to be weighed against the deterioration in learning that the other students suffer if laptop use is freely allowed.

Students may object that a laptop ban prevents them from storing notes on their computers. But free smartphone apps can quickly snap pictures of handwritten pages and convert them to PDF format. Even better: typing and synthesizing handwritten notes is a terrific way to review and check one’s understanding of a class.

There may well be particular classroom settings in which laptops improve learning, such as a coding class in which students collaborate on solving a programming problem. But for the typical lecture setting, the best evidence suggests students should lay down their laptops and pick up a pen.

— Susan Dynarski

Susan Dynarski is a professor of public policy, education and economics at the University of Michigan.

This post originally appeared as part of Evidence Speaks, a weekly series of reports and notes by a standing panel of researchers under the editorship of Russ Whitehurst.

The author(s) were not paid by any entity outside of Brookings to write this particular article and did not receive financial support from or serve in a leadership position with any entity whose political or financial interests could be affected by this article.

Notes:

1. Pam A. Mueller and Daniel M. Oppenheimer, “The Pen Is Mightier Than the Keyboard,” Psychological Science, Volume 25, Issue 6, Pages 1159-1168. First published April 23, 2014. http://journals.sagepub.com/doi/abs/10.1177/0956797614524581

2. Faria Sana, Tina Weston, Nicholas J. Cepeda, “Laptop Multitasking Hinders Classroom Learning for Both Users and Nearby Peers,” Computers & Education, Volume 62, 2013, Pages 24-31. http://www.sciencedirect.com/science/article/pii/S0360131512002254?via%3Dihub

3. Susan Payne Carter, Kyle Greenberg, Michael S. Walker, “The Impact of Computer Usage on Academic Performance: Evidence from a Randomized Trial at the United States Military Academy,” Economics of Education Review, Volume 56, 2017, Pages 118-132, http://www.sciencedirect.com/science/article/pii/S0272775716303454. The What Works Clearinghouse has reviewed this study and given it its highest rating: https://ies.ed.gov/ncee/wwc/Docs/SingleStudyReviews/wwc_carter_022217.pdf.