School choice is on the march. Even before Trump’s election and his selection of Betsy DeVos as Secretary of Education, charter and private school choice had been expanding steadily every year. Expanding school choice, like almost all of education reform, happens in the states, so who is in charge in DC will make little difference beyond turning a headwind into a tailwind.

But there is a certain crowd whose lives and thoughts revolve around what is happening in DC, so they have become all aflutter with either hope or dread about the prospects of a Trump presidency for school choice. Those motivated by dread have fired first in the looming Regulation War, arguing that choice only works — if it works at all — within a heavy regulatory framework to ensure quality. If choice can’t be stopped at least it can be controlled and that control can improve the choices parents will make.

The problem with the pro-regulation argument is that you can’t ensure quality if you don’t have the ability to predict quality. It’s true that parents have a hard time anticipating how different schools might affect long-term outcomes and are quite likely to make mistakes in choosing schools. And some emphasize this fact to justify a strong role for regulators in controlling the range and type of options from which parents can choose. But what they rarely consider is whether regulators are any better at anticipating how schools will affect long-term outcomes. After all, regulators can’t protect families against making mistakes if they are equally or more likely to make those mistakes themselves.

I’ve written previously about the disconnect between near-term test score gains and changes in later life outcomes. If we can’t reliably use rigorously identified test score gains to predict later life outcomes, then on what basis will regulators be able to judge quality to protect families against making bad choices? And the situation is even worse, because most regulators deciding which choice schools should be opened, expanded, or closed are not relying on rigorously identified gains in test scores. They look primarily at the levels of test scores and call schools with low scores bad. Of course, all this does is punish and discourage schools that attempt to serve the most challenging students, since the level of scores reflects student background more than school quality.

But let’s say you don’t believe me about the weak predictive power of test score gains and are determined to use tests as the main indicator of school quality. We are still left with the question of whether regulators are any good at identifying which schools will produce test score gains. Fortunately, a recent study examined whether the criteria used by regulators in New Orleans predict test score growth, even if we accept test gains as a reliable indicator of quality. The bottom line is that none of the factors authorizers used to open or renew charter schools in New Orleans predicted how much test score growth those schools would later produce.

In particular, the study examines ratings derived from criteria favored by the National Association of Charter School Authorizers (NACSA) to see whether they predict test score growth or enrollment growth. Neither the aggregate NACSA rating nor individual factors, such as school governance features, educational plans, or financial and enrollment characteristics, were predictive. As the study concluded, “None of the factors, however, predict the main dependent variables of interest: SPS, value-added, enrollment, and enrollment growth, as direct measures of future school performance.”

If regulators are unable to predict which schools will be good (assuming, falsely, that test score gains are a reliable indicator of good schools), how are they supposed to “protect” parents from making bad choices about schools? The case for regulation is often expressed as a tautology: people will make better choices if they only have good schools from which to choose. But this presupposes that regulators can identify and eliminate bad choices. And if regulators really could do this, why have school choice at all? Why not just ensure that every student succeeds by forbidding any school from being bad?

No one should be surprised that NACSA’s criteria have no relationship to its own metric for school quality (test score growth), given how well Arizona charter schools appear to be doing even while NACSA gives the state a very low score for charter quality. What might be more surprising is that the recent study I’ve been citing, which finds no connection between the criteria regulators use and future test score growth, was co-authored by none other than Doug Harris. Yes, that’s the same Doug Harris who wrote the NYT op-ed claiming that the lack of regulation in Detroit contributed to “the biggest school reform disaster in the country,” while New Orleans’ more heavily regulated approach produced “impressive” results.

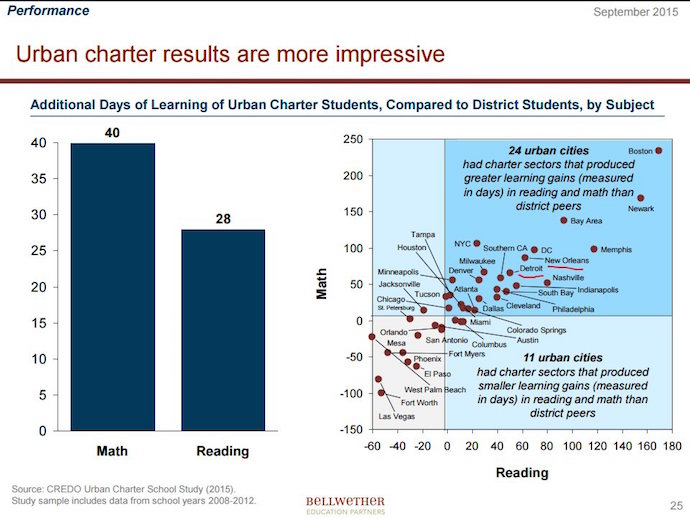

As Ramesh Ponnuru has noted, the same CREDO research that Doug cites shows that Detroit charter schools produce significantly better outcomes than their traditional public school alternatives. In fact, the test score gains achieved by Detroit charters exceed those in heavily regulated Denver and only slightly lag those in New Orleans. (See the table above for a graphic presentation of results across cities.)

Mind you, I do not believe the CREDO results, because that research matches only on observed characteristics and therefore does not rigorously identify causal effects. But Doug seems to believe that research even though it undermines the very argument he was making. And one can only assume that Doug believes his own research showing that regulators lack effective tools to identify and predict good schools. Doug accuses DeVos of “a triumph of ideology over evidence,” but there seems to be a lot of that going around.

—Jay P. Greene

Jay P. Greene is endowed chair and head of the Department of Education Reform at the University of Arkansas.