The fundamental information we use to evaluate the performance of our K-12 education system is sending conflicting signals. Measures of student achievement point to low levels and meager improvement. Measures of teaching indicate nearly every teacher is effective. But teachers are the most important input to learning, so something is amiss.

What is amiss is that the information is not based on equally sound measurements. Student achievement is soundly measured; teacher effectiveness is not. The system is spending time and effort rating teachers using criteria that do not have a basis in research showing how teaching practices improve student learning.

Achievement is not high and not improving

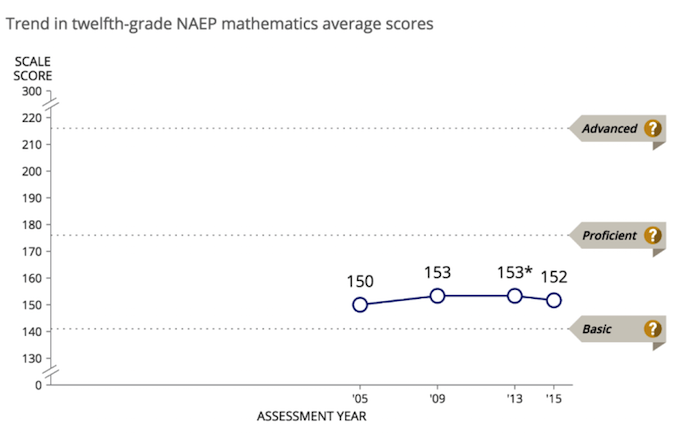

Recent data from the National Assessment of Educational Progress (NAEP) show that most of America’s fourth, eighth, and twelfth grade students are not proficient in math and reading. Results from testing in 2015 showed that 60 percent of fourth graders, 57 percent of eighth graders, and 75 percent of twelfth graders are not proficient in math. Nor are they close to being proficient—for example, 37 percent of twelfth graders scored at the basic level of proficiency and 38 percent scored below the basic level.

Not much has changed in the last decade. The first figure shows the average 17-year-old scoring 150 in math in 2005 and 152 in 2015, which is down from 2013.

Source: The Nation’s Report Card, Mathematics and Reading at Grade 12, http://www.nationsreportcard.gov/reading_math_g12_2015/#mathematics/scores.

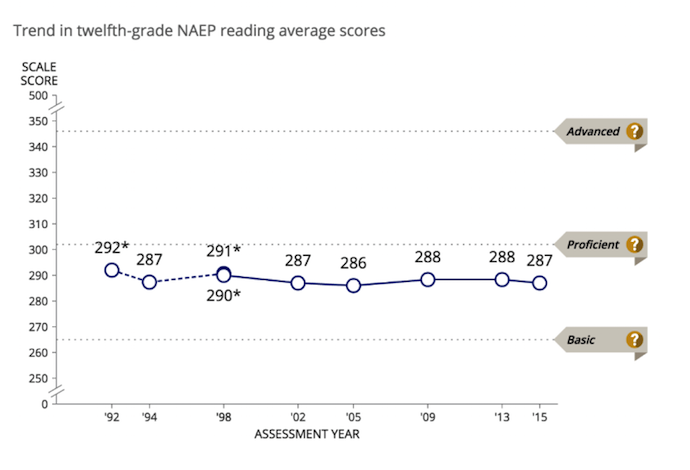

The story is about the same for reading.[i] The second figure shows the average 17-year-old scored 286 in reading in 2005 and 287 in 2015. From a statistical perspective, scores have stayed within the margin of sampling error since 1998, almost 20 years.

Source: The Nation’s Report Card, Mathematics and Reading at Grade 12, http://www.nationsreportcard.gov/reading_math_g12_2015/#reading/scores.

Whatever one thinks about how NAEP sets proficiency levels, and I return to this below, it is uncomfortable that even today nearly 40 percent of high school seniors do not meet its basic level. And 20 percent of students leave high school before graduating, which inflates scores of twelfth graders unless we believe high school dropouts are among the higher-scoring students, which is implausible.[ii]

Teacher ratings are about as high as they could be

Measures of teacher effectiveness vary state by state but results are consistent: nearly every teacher is effective. This consistency was named the ‘widget effect’ in a 2009 report (all widgets are the same).[iii] A number of states implemented new teacher evaluation systems in the last ten years, and there is still a widget effect. In Florida, 98 percent of teachers are rated effective; in New York, 95 percent; in Tennessee, 98 percent; in Michigan, 98 percent.[iv] New Jersey implemented a new evaluation system in 2014 and 97 percent of teachers were ‘effective’ or ‘highly effective.’[v] That 3 percent of teachers were rated as ineffective was a significant increase from the evaluation system it replaced, which had rated 0.8 percent of teachers as ineffective. But it still implies nearly universal effectiveness.

It’s nearly impossible to raise these rates. In states that have multiple designations, teachers can possibly move from ‘effective’ to ‘highly effective.’ But unless there are financial or professional incentives to do so, such as pay increases or certification by the National Board for Professional Teaching Standards, there’s little reason for teachers rated as effective to change what they already are doing.[vi]

Effective is defined as “producing a decided, decisive, or desired effect.” Education is cumulative in nature, so a twelfth grader who is not achieving at the basic level has had a dozen or more teachers contributing to his or her education. Nearly all of them will have been rated as effective. What effect did they produce?

The issue is how teachers are evaluated

Most students are not proficient in reading and math, but teachers are rated as teaching effectively. Perhaps measures of proficiency set the bar too high. Alternatively, systems for rating teachers might set the bar too low.

Is achieving proficiency according to NAEP a high bar, so high that we should be satisfied if students scored at a lower level? For example, if students scoring ‘basic’ on NAEP had high grade-point-averages in college, admittedly an extreme example, we might conclude that ‘proficient’ is a high bar. A report from the National Center for Education Statistics compared NAEP math proficiency levels with other student outcomes.[vii] It found that NAEP math proficiency correlated reasonably with other outcomes—for example, students who scored at the proficient level as high school seniors were very likely to score highly on a math test in eighth grade, 68 percent of high school students who had taken calculus scored proficient or better, 56 percent of students who had A grade-point-averages in high school math scored proficient or better, and 84 percent of students at the proficient level had gone on to four-year colleges. A student who was proficient on the NAEP math test was skilled in math, but not superhumanly so.

Teacher rating systems are more of an issue.[viii] The bulk of the rating, typically more than 50 percent of it, is based on observing teachers in classrooms. Other factors that may be considered include student test scores, growth of scores, collegiality or professionalism, or findings from surveys of students. But observing teachers is the centerpiece of most rating systems.

Observations are straightforward. A principal (or district administrator) comes into a teacher’s classroom with a measurement tool in hand (now more often on a laptop), and checks off whether he or she observes various things in the classroom. For example, does the teacher demonstrate knowledge of the curriculum? Does the teacher ask open-ended questions that cause students to think at a higher level in formulating answers? Do students appear engaged?

But what principals observe is whether teachers are teaching. The crucial question is whether students are learning. To answer that, we need some measure of learning: a test. Using test scores to evaluate teachers has been controversial, to put it mildly.[ix] Various commentators have argued that test scores are problematic for evaluating individual teachers. Neither the number of observations of a teacher nor the number of students in a classroom is large, so average classroom scores can be expected to vary from year to year because of measurement error even if the teacher is the same and student characteristics are the same.[x]

If what is found in observations mirrors what is found in tests, there’s little reason to do both and the issue of evaluating teachers with test scores becomes moot. Do observations discriminate between teachers whose students learn a lot and teachers whose students do not?

They don’t. Teacher observation scores and student test scores show little correlation. This evidence was recently reviewed by the Institute of Education Sciences, which concluded that “teacher knowledge and practice, as measured in existing studies, do not appear to be strongly and consistently related to student achievement.”[xi] The Measures of Effective Teaching study found that overall observation scores had small and mostly insignificant correlations with test scores, and correlations with scores on individual observation items likewise were small.[xii] Another study investigated correlations between observations of math instruction and math achievement. Correlations between practices observed in classrooms and math scores were small, and some were negative.[xiii] Another recent study using the same data set found that teacher observation scores also were not correlated with so-called ‘noncognitive’ outcomes such as ‘grit’ and ‘growth mindset.’[xiv]

Put differently, evidence about teacher knowledge and practice is weakly and inconsistently related to student achievement. Observations are fundamentally about teacher practice. The finding implies that observations and test scores are measuring different things.

Of course, research is rarely monolithic and some study in some journal will find some practice to be correlated with some test score. For example, researchers found that student test scores rose in Cincinnati after the district implemented a teacher-observation program that provided intensive feedback and coaching.[xv] The point is that the preponderance of evidence does not find links. And what is being implemented in states is not the high-intensity system tested in Cincinnati.

In addition to not being correlated with test scores or measures of soft skills, teacher observations cost money. The cost is not easy to see because it’s time spent by administrators, which is not explicit in school budgets. We can guesstimate it. Suppose school administrators spend 10 hours a year to observe each teacher. This includes their time in training to conduct observations, doing the observations, writing up the results, meeting with teachers to debrief, and revising the observation if need be. For untenured teachers, the time commitment probably is higher because most systems call for them to be observed more frequently.

In 2015 there were 3.1 million K-12 public school teachers, which means 31 million hours spent annually on observations. The average school principal salary is $45 an hour. Applying this hourly rate to the number of hours, the system is spending $1.4 billion a year to observe teachers. This is spending a lot of money to find that nearly all teachers are effective and to generate teacher feedback that does not improve student learning.[xvi]
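The arithmetic behind that estimate can be laid out in a few lines. This is only a sketch of the back-of-the-envelope calculation: the teacher count and hourly rate are the figures cited above, the 10 hours per teacher is the supposition made earlier, and the function and variable names are made up for illustration.

```python
# Back-of-the-envelope cost of teacher observations.
# Inputs are the figures quoted in the text; 10 hours per teacher is a supposition,
# not a measured quantity.
TEACHERS = 3_100_000          # K-12 public school teachers in 2015
HOURS_PER_TEACHER = 10        # assumed administrator hours per teacher per year
PRINCIPAL_HOURLY_RATE = 45    # average principal salary, dollars per hour


def observation_cost(teachers, hours_per_teacher, hourly_rate):
    """Return (total administrator hours, total dollar cost) per year."""
    total_hours = teachers * hours_per_teacher
    return total_hours, total_hours * hourly_rate


total_hours, total_cost = observation_cost(
    TEACHERS, HOURS_PER_TEACHER, PRINCIPAL_HOURLY_RATE
)
print(f"{total_hours / 1e6:.0f} million hours, about ${total_cost / 1e9:.1f} billion a year")
```

Because the cost is simply proportional to hours per teacher, halving or doubling that one assumption halves or doubles the total.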

Teacher evaluation systems need a stronger scientific basis

We have invested massively in measuring student achievement. For more than 40 years, reading and math tests have been administered to hundreds of thousands of students. The tests are administered in each state, and, more recently, in a set of cities and in other subjects such as science and social studies. Researchers and policy experts have pored over every aspect of NAEP and continue to do so. It continues to evolve but it meets a high standard for measurement. State tests also meet high standards. Gregory Cizek noted, “The typical statewide NCLB tests administered today in every American state are far and away the most accurate, free-of-bias, dependable, and efficient tests that a student will encounter in his or her schooling.”[xvii]

We have not invested anywhere near as much to understand effective teaching and to measure teacher effectiveness. We need more research to identify teaching practices that link directly to learning, rather than using notions of what effective teachers should be doing. Researchers always seem to call for more research—but in this instance, the need for it is compelling. We are spending billions to little effect because we do not know enough about what we are looking for.

Many states have passed laws that require teacher evaluation systems. In those states, evaluations must happen. But the observation component could be structured more as checks that teachers have their classrooms under control and are teaching in a responsible way. This kind of minimal observation—analogous to car inspections—would be less taxing but still yield useful information. Teachers would not be labeled ‘effective,’ because these kinds of observations would not purport to assess effectiveness. A term such as ‘meets standards’ would be appropriate.[xviii]

New teacher evaluation systems were spurred by the Obama “Race to the Top” grant programs and by requirements imposed on states to receive federal waivers to No Child Left Behind. In terms of evidence, the cart may have been in front of the horse: the policies presumed, before the evidence was in, that new teacher-evaluation systems combining observation scores and student test scores would improve teaching and learning. Instead, we ended up in the same place, with systems that rate nearly all teachers as effective, and test scores about where they were ten years ago. But now we have more research telling us that what we are looking for in teaching doesn’t matter for student achievement anyway. The Obama policy experiment may not have yielded the answer that was desired, but, like many experiments, it yielded information that helps plan what to do next.

The Every Student Succeeds Act (ESSA) does not include a Race to the Top program and its passage obviated the need for waivers, but the idea of understanding and promoting effective teaching is powerful and worth retaining. In shepherding ESSA through Congress, Senator Alexander said he did not want the U.S. Department of Education in the role of a national school board, and responsibility for education should be with states. But the federal government is in the right position to develop and disseminate tools to identify and promote effective teaching practices. It’s a research task that benefits all states.

Evaluation systems that tell us all teachers are effective are misleading. We need a more solid research and measurement foundation about what aspects of teaching improve learning. Until we build that foundation, observing teachers and rating them is pointless, or worse—the ratings signal that all is fine, but it’s not.

—Mark Dynarski

Mark Dynarski is a Nonresident Senior Fellow at Economic Studies, Center on Children and Families, at Brookings.

This post originally appeared as part of Evidence Speaks, a weekly series of reports and notes by a standing panel of researchers under the editorship of Russ Whitehurst.

Mark Dynarski was not paid by any entity outside of Brookings to write this particular article and did not receive financial support from or serve in a leadership position with any entity whose political or financial interests could be affected by this article.

Notes:

[i] Recent results from the Programme for International Student Assessment (PISA) also show a lack of improvement, in this case for 15-year-old students. See http://nces.ed.gov/pubs2017/2017048.pdf. NAEP math and reading scores for fourth graders and eighth graders show improvement. For example, in 2005, 30 percent of eighth graders were proficient in math, and in 2015 that number had risen to 33 percent (http://www.nationsreportcard.gov/reading_math_2015/#mathematics/acl?grade=8). Why more learning in those grades has not yielded more learning in twelfth grade is a puzzle, but for purposes here, I focus on twelfth graders because of the cumulative nature of education.

[ii] https://nces.ed.gov/pubsearch/pubsinfo.asp?pubid=2016117. Media reports about recent increases in high school graduation rates notwithstanding (http://www.npr.org/sections/ed/2016/10/17/498246451/the-high-school-graduation-reaches-a-record-high-again), one in five students not graduating is problematic. A related point is that with more students staying in high school, the pool of students being tested may include more students who have low academic achievement. Blagg and Chingos (2016) investigated this hypothesis and concluded it did not explain the lack of score improvement (http://www.urban.org/sites/default/files/alfresco/publication-pdfs/2000773-Varsity-Blues-Are-High-School-Students-Being-Left-Behind.pdf).

[iii] http://tntp.org/publications/view/the-widget-effect-failure-to-act-on-differences-in-teacher-effectiveness

[iv] http://www.nctq.org/dmsView/StateofStates2015

[v] http://www.nj.gov/education/AchieveNJ/resources/201314AchieveNJImplementationReport.pdf.

[vi] Dee and Wyckoff found that DC teachers who were close to the rating at which they would receive substantial bonuses (two consecutive years of a ‘highly effective’ rating) improved their performance. http://curry.virginia.edu/uploads/resourceLibrary/16_Dee-Impact.pdf.

[vii] http://nces.ed.gov/pubs2007/2007328.pdf

[viii] A broad-brush description of these systems is found at http://www.edweek.org/ew/section/multimedia/teacher-performance-evaluation-issue-overview.html. Details of systems vary state by state.

[ix] There is too much literature to cite about using test scores for evaluating teachers. Henry Braun’s primer is a useful starting point. http://eric.ed.gov/?id=ED529977.

[x] This criticism lets perfect be the enemy of good. Any performance measure is imperfect and subject to chance variation, including teacher observations. A principal is distracted, a teacher stumbles in a lesson, a student disrupts class—these are chance events that can affect observation scores.

[xi] http://ies.ed.gov/ncee/pubs/20174010/pdf/20174010.pdf

[xii] Estimates for overall observation scores are found at http://files.eric.ed.gov/fulltext/ED540959.pdf. Estimates for items used to observe math instruction are found in Walkington and Marder, “Classroom Observation and Value-Added Models Give Complementary Information about Quality of Mathematics Teaching,” Chapter 8 in Designing Teacher Evaluation Systems, edited by Tom Kane, Kerri Kerr, and Robert Pianta (Jossey-Bass, 2014).

[xiii] D. Blazar, “Effective Teaching in Elementary Mathematics: Identifying Classroom Practices That Support Student Achievement,” Economics of Education Review, 48, 2015, pp. 16-29.

[xiv] http://scholar.harvard.edu/files/mkraft/files/teaching_for_tomorrows_economy_-_final_public.pdf.

[xv] https://www.educationnext.org/can-teacher-evaluation-improve-teaching/.

[xvi] This cost estimate does not include spending by states and districts on teacher professional development, which is also related to improving teaching. One estimate put the bottom line for professional development at about $7,000 a teacher each year (http://www.edweek.org/ew/articles/2010/11/10/11pd_costs.h30.html). That equals $21 billion a year—not a large proportion of the $600 billion or more spent on K-12 public education, but a lot to spend to not raise achievement.

[xvii] http://www.economist.com/blogs/democracyinamerica/2010/03/testing_and_assessment

[xviii] England’s system of school inspections explicitly cautions inspectors not to grade the quality of teaching based on observing individual lessons. Instead, inspectors gather “first-hand evidence gained from observing pupils in lessons, talking to them about their work, scrutinizing their work and assessing how well leaders are securing continual improvements in teaching.” https://www.gov.uk/government/publications/school-inspection-handbook-from-september-2015.