In his summer 2019 essay “Is Summer Learning Loss Real?,” Paul von Hippel tells us that his belief in summer slide, the pattern that has low-income and disadvantaged minority youth losing ground academically to their more advantaged peers over the long summer break, “has been shaken.” Von Hippel is a respected education researcher whom I hold in high regard, but I do not share his position on summer learning loss. This rejoinder explains why.

Two considerations appear to weigh on von Hippel. The first is that results from the Baltimore-based Beginning School Study, which he cites as one of the best-known and most influential studies of summer learning loss, are not sustained when evaluated using recent advances in the psychometrics of achievement testing. The second is evidence that achievement gaps across social lines originate substantially over the preschool years, not during the summer months (or once children are in school, for that matter).

If von Hippel’s critique were limited to the second consideration, I would have little reason to comment. He acknowledges, and I agree, that strong summer programming can help mitigate achievement gaps regardless of where those gaps originate: over the preschool years, as von Hippel has come to believe; in the hours after school; or over the long summer break, our focus at the National Summer Learning Association. But von Hippel’s first consideration does not hold up on close inspection. A corrective is in order.

On the Failure to Replicate

In the section of his essay entitled “A Classic Result Fails to Replicate,” von Hippel contends that when the California Achievement Test data used in the Beginning School Study are examined by way of contemporary adaptive testing rather than the Thurstone scaling that was available in the 1980s, there is no evidence of differential summer learning across social lines. The Beginning School Study finding in question is referenced in the introduction to his essay: By the ninth grade, “summer learning loss during elementary school accounts for two-thirds of the achievement gap between low-income children and their middle-income peers.”

“Replication,” as commonly understood, means doing an analysis as closely as possible to what was done in some other study. But instead of replication, von Hippel’s essay presents three graphs that address a fundamentally different question and references an old technical report on the California Achievement Test data that also addresses a fundamentally different question.

One graph reports Beginning School Study data that track reading comprehension scores across school years and summers separately from the fall of first grade through the end of sixth grade, followed by end-of-year spring data for seventh and eighth grade. It shows increases in the achievement gap across social lines most summers and a large overall increase in the gap over the entire span of coverage.

The other graphs report achievement data from studies that use adaptive scaling, a methodology that has largely replaced the Thurstone scaling available to the Beginning School Study researchers: the Early Childhood Longitudinal Study, Kindergarten Class of 2010-11 and the Northwest Evaluation Association Measures of Academic Progress. Both date to around 2010 at baseline and afford national coverage. The Early Childhood Longitudinal Study graph extends from kindergarten through second grade and the summers between; the Northwest Evaluation Association graph extends from kindergarten through eighth grade. Neither graph shows the familiar pattern of differential summer learning loss, although the achievement gap does increase over the span of years covered in the Northwest Evaluation Association graph, a pattern not evident in the Early Childhood Longitudinal Study data.

What is most notable about these displays, including von Hippel’s rendering of the Beginning School Study data, is that they do not address the question behind the finding they are presumed to replicate: do children in lower-income families fall behind their more advantaged peers over the summer months? Instead, they ask whether the test scores of high-poverty and low-poverty schools diverge during the summer and over time. The high-poverty schools versus low-poverty schools labeling in these displays makes that quite clear, yet this goes unremarked in von Hippel’s essay.

The Northwest Evaluation Association did not gather data on students’ individual family circumstances, so its data cannot inform the question addressed by the Beginning School Study. I suppose that is why von Hippel chose to present the graphs the way he did, but presented that way they speak to a very different issue. In fact, the achievement scores of lower-income and higher-income children can diverge even if school averages do not.
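
That last point is a matter of simple arithmetic, and a small sketch may help make it concrete. Every number below is invented purely for illustration and comes from no study, and the school and income-group labels are hypothetical. The example assumes two schools that enroll different mixes of lower-income and higher-income children and then averages the same summer changes two ways: by school and by family income.

```python
# Hypothetical illustration only: every number here is invented, not drawn from any study.
# Each row: (school, family income group, number of children, summer change in score points)
groups = [
    ("high-poverty school", "lower-income", 80, -1.0),
    ("high-poverty school", "higher-income", 20, 4.0),
    ("low-poverty school", "lower-income", 20, -4.0),
    ("low-poverty school", "higher-income", 80, 1.0),
]


def mean_change(rows):
    """Child-weighted average summer change across the given rows."""
    total_children = sum(n for _, _, n, _ in rows)
    return sum(n * change for _, _, n, change in rows) / total_children


def average_by(key_index):
    """Average summer change grouped by school (index 0) or by income group (index 1)."""
    keys = sorted({row[key_index] for row in groups})
    return {k: mean_change([r for r in groups if r[key_index] == k]) for k in keys}


school_change = average_by(0)   # what a school-average chart would display
income_change = average_by(1)   # what a comparison of children by family income would display

print("By school:", school_change)
print("By family income:", income_change)
```

In this made-up case neither school's average moves over the summer, yet lower-income children as a group lose ground while higher-income children as a group gain. A chart of school averages would show no summer divergence at all, even though the children themselves are pulling apart along income lines.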

The second “failure to replicate” is from a technical report on the California Achievement Test data published in 1993. Adaptive scaling was introduced into the California Achievement Test program shortly after the Beginning School Study analyses of summer learning concluded and “… when the test switched to item response theory scaling, high and low scores converged during the summer – the opposite of the famous finding.” But the “high and low scores” that converged in this report pertain to children scoring at the 98th percentile as against children scoring at the second percentile. That is to say, they are children scoring at the very extremes, not lower-income and higher-income children as in the Beginning School Study analyses (as well as most other studies of summer learning loss).

What, then, should be made of von Hippel’s “A Classic Result Fails to Replicate”? The heading probably would read more properly as “A Classic Result that No One Has Tried to Replicate,” although other types of data that address other types of questions do not look quite the same. In sum, this section of von Hippel’s essay presents neither re-analyses of the original Beginning School Study data nor more contemporary data that address the fundamental issue of summer learning loss.

On Summer Learning Loss in Modern Data

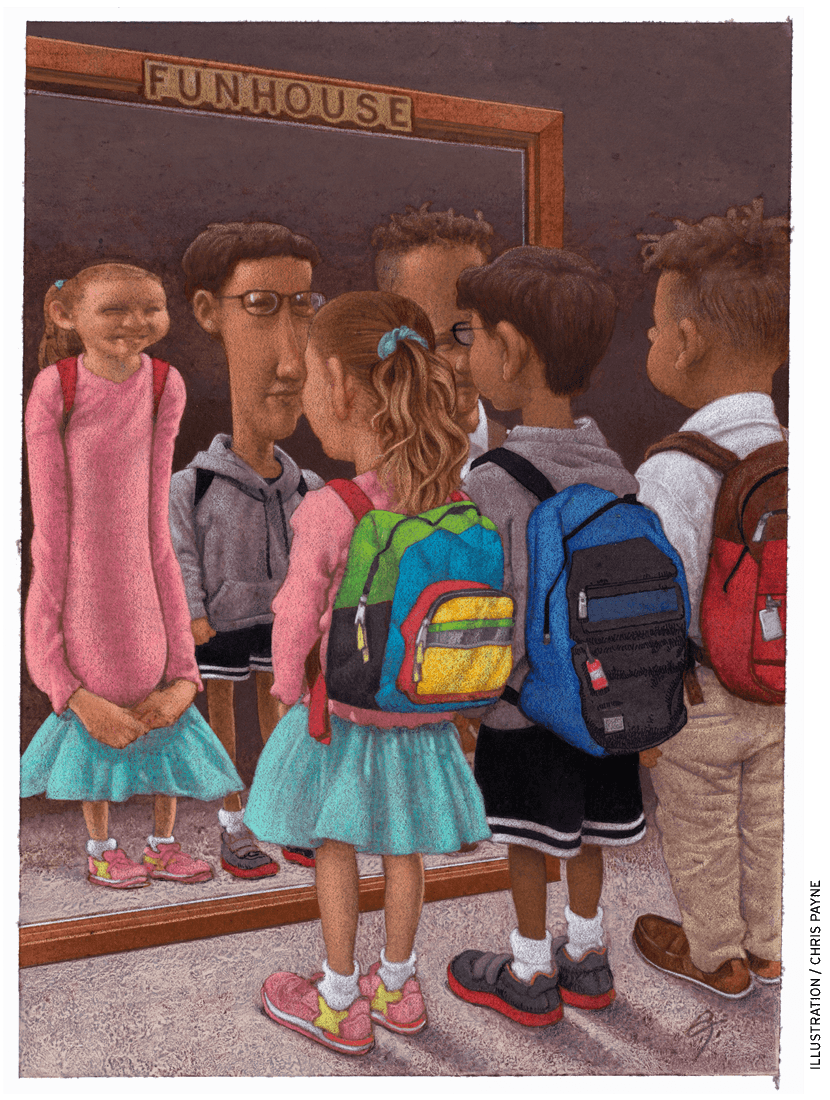

The section is subtitled “Are we still in the fun house?” That seems an odd allusion, but it is von Hippel’s, not mine. Here von Hippel returns to the Early Childhood Longitudinal Study and Northwest Evaluation Association data. The figures mentioned above simply show trends; the analyses referenced in this section are intended to nail down root causes. Keeping in mind that Northwest Evaluation Association data do not speak to the fundamental question of summer learning loss, it nevertheless is worth considering these analyses, as von Hippel deems them more credible than those conducted on the Beginning School Study data.

Here is his summary statement: “… they don’t agree when it comes to summer learning” …. “According to the Measures of Academic Progress tests …. summer learning loss is much more serious [as against the ECLS data]. On average, children lose about a month of reading and math skills during their first summer vacation. And during their second summer vacation, they lose three full months of skills in reading and math.” Continuing, von Hippel says: “How can students lose three months on one test, when they are barely losing, or even gaining, on another? It’s hard to explain.”

This disagreement across analyses that von Hippel finds hard to explain in fact is a bona fide failure to replicate. In dismissing the reality of summer learning loss von Hippel elevates one set of these conflicting results over the other. That he does so without an accompanying rationale is another “hard to explain” detail.

Reaching Back and Looking Ahead

Von Hippel begins his essay citing two of the most powerful statistics in the summer slide literature: the Beginning School Study conclusion already referenced and, from a 1996 meta-analysis of the then-extant research published by Harris Cooper and several colleagues, the statistic that most students lose two months of math achievement during the summer and, for low-income children, two to three months of reading achievement. Though von Hippel’s evidence and argument hardly warrant dismissing conclusions from the Beginning School Study, Cooper’s review, and the Northwest Evaluation Association data, he does make a point with which I can agree: why should we have to reach back 30 or more years to make the case for summer learning loss? I too would welcome new and better data on this important topic. Still, though old, these statistics are quite powerful, and this is not easy work to do: no other study since the Beginning School Study has undertaken to track the academic progress of a well-defined cohort of students over the entirety of their schooling.

But what is more relevant, I think, is that the field has moved on. I, like many others, am persuaded that summer learning loss is real and that it is one of the most pressing issues in education today. For that reason, research largely has turned to two compelling agenda items: identifying and evaluating best practices in summer programming and addressing inequities in access to programs that pass the best practice test. Von Hippel is sympathetic to this agenda as well, although for him it is a matter of using the summer space to mitigate achievement differences that originate over the preschool years. As he puts it: “every summer offers children who are behind a chance to catch up.”

After acknowledging that “summer learning programs … can take a bite out of achievement gaps,” von Hippel devotes a full paragraph to charter school reforms that add days to the school year. This seems an odd emphasis, as in this literature it is impossible to isolate the efficacy of added days from within a bundle of other reforms that typically are implemented at the same time (e.g., adding hours to the school day, shortening breaks within the school schedule, optional Saturday classes, and one-on-one tutoring).

In any event, adding days to the school calendar has gained little traction nationally. In my view, a more attractive and viable option is to grow the availability of high-quality summer programs like those documented in a 2019 RAND report, programs that are demonstrably effective in boosting not just academic performance, but also a range of other critical student outcomes, including socioemotional learning.

In sum, von Hippel dismisses Beginning School Study results based on “replications” that do not really replicate. He shows charts that compare school averages, not the growth trajectories of children based on their individual family circumstances. He ignores one of the two modern data sets that use his preferred adaptive scaling. And he manages to slough over the large body of evidence that high-quality summer programs can boost children’s achievement and mitigate disparities across social lines. Summer slide, sadly, has not gone away, and many disadvantaged children are held back by it. Allowing von Hippel’s “shaken belief” to go unchallenged risks undermining efforts to help these children.

We know a great deal about effective interventions that can push back against the drag of circumstances outside school that burden needy children, both academically in the short term and in their later-life well-being over the longer term. I invite the readers of Education Next to join my colleagues and me at the National Summer Learning Association in helping increase knowledge of best practices in summer programming and broadening access to programs we know can work.

Karl Alexander is John Dewey Professor Emeritus of Sociology at Johns Hopkins University and was co-director of the Beginning School Study. He is a board member of the National Summer Learning Association.

Paul T. von Hippel responds to this piece in “Summer Learning: Key Findings Fail to Replicate, but Programs Still Have Promise.”