“[S]cientific findings are not singular events or historical facts. In concrete terms, reproducibility—and the related terms repeatability and replicability—refers to whether research findings recur.”

— the Open Science Collaboration (2018)

Failure to replicate is a common problem in research. In recent years, major replication efforts have shown that 40 to 60 percent of the studies published by top journals in psychology, experimental economics, clinical medicine, and other fields fail to produce similar results when conducted again with new participants. There is no reason to think that education research is exempt from the replication crisis. In fact, one of the striking features of education research is that its practitioners don’t seem to agree on much of anything. It is a cliché among journalists and education leaders that on almost any subject—from school choice to class size reduction—research findings can be described as “mixed,” and you can find an expert to argue for either side.

Summer learning—a subject that I’ve studied for the past 15 years—is in the throes of its own replication crisis. Many of the summer learning patterns that seemed apparent in older work—most notably in the Beginning School Study of 838 Baltimore schoolchildren in the 1980s—simply do not replicate in modern data.

To be sure, the simplest finding—that nearly all children learn reading and math more slowly during summer than during the school year—shows up in every data set that’s ever been studied. But beyond that, things get shaky. Here are some common claims about summer learning:

1. Children typically lose one to three months of reading and math skills over the summer.

2. The losses are much greater among poor or otherwise disadvantaged groups.

3. The score gaps between advantaged and disadvantaged children grow much more quickly during summer than during the school year.

4. Summer learning accounts for most of the achievement gap by the end of eighth grade.

All of these points accurately describe the findings of the Beginning School Study, and we often cite them as though they’re true in general. But in fact, none of them replicates consistently in modern data.

In a recent Education Next article, I demonstrated the failure to replicate by examining the gap between high-poverty and low-poverty schools in two large, modern datasets. One was the nationally representative Early Childhood Longitudinal Study of 17,733 children who started kindergarten in 2010; the other was an extract from the Growth Research Database, describing 177,549 children who took the Northwest Evaluation Association’s Measures of Academic Progress tests in nine grade levels and 14 states during 2010-2012.

Here is a summary of the results:

1. On the Measures of Academic Progress tests, children did indeed appear to lose an average of one to three months’ reading and math skills over the summer. But in the Early Childhood Longitudinal Study, they did not. In the Early Childhood Longitudinal Study, average scores changed very little over the summer. On average, children lost just two weeks’ skills over their first summer vacation, and gained a week of math skills over their second summer vacation.

2. In neither dataset did summer learning contribute much to math and reading gaps between high-poverty and low-poverty schools. In the Early Childhood Longitudinal Study, those gaps didn’t grow at all—not during the school year and not during summer. On the Measures of Academic Progress tests, gaps did grow over the summer—but no faster than they grew during the school year.

In short, neither of these modern datasets agreed with the Beginning School Study—and in some ways they didn’t agree with each other, either. This lack of replicability should leave us with a lot of uncertainty about whether summer learning loss is a serious problem, and what summer learning really looks like. That’s why my article’s title ended with a question mark: “Is summer learning loss real?”

Can Anything Reconcile Modern Results with the Beginning School Study?

In a new comment, Karl Alexander, who conducted the Beginning School Study with Doris Entwisle and Linda Steffel Olson, expresses hope that these discrepant findings can be reconciled. I had the same hope when I first saw some of these results. Unfortunately, further data and analysis have not yielded results that look like the Beginning School Study.

Alexander’s main concern is that the results I published in Education Next summarized a school-level analysis that compared high-poverty and low-poverty schools. Perhaps the results would look more like the Beginning School Study, Alexander suggests, if I conducted child-level analyses that compared low-income and high-income children.

But my colleagues and I have already published child-level analyses, and they didn’t change the story. In addition to comparing high-poverty and low-poverty schools, we compared children with low and high incomes. We compared children of more and less educated mothers. We compared black, white, Hispanic, Asian American, and Native American children. We summarized our results in two research articles that I cited in my Education Next piece. One article, written with Joe Workman and Doug Downey, appeared in Sociology of Education in October 2018; the other, written with Caitlin Hamrock, appeared in Sociological Science in January 2019. I encourage you to read them both.

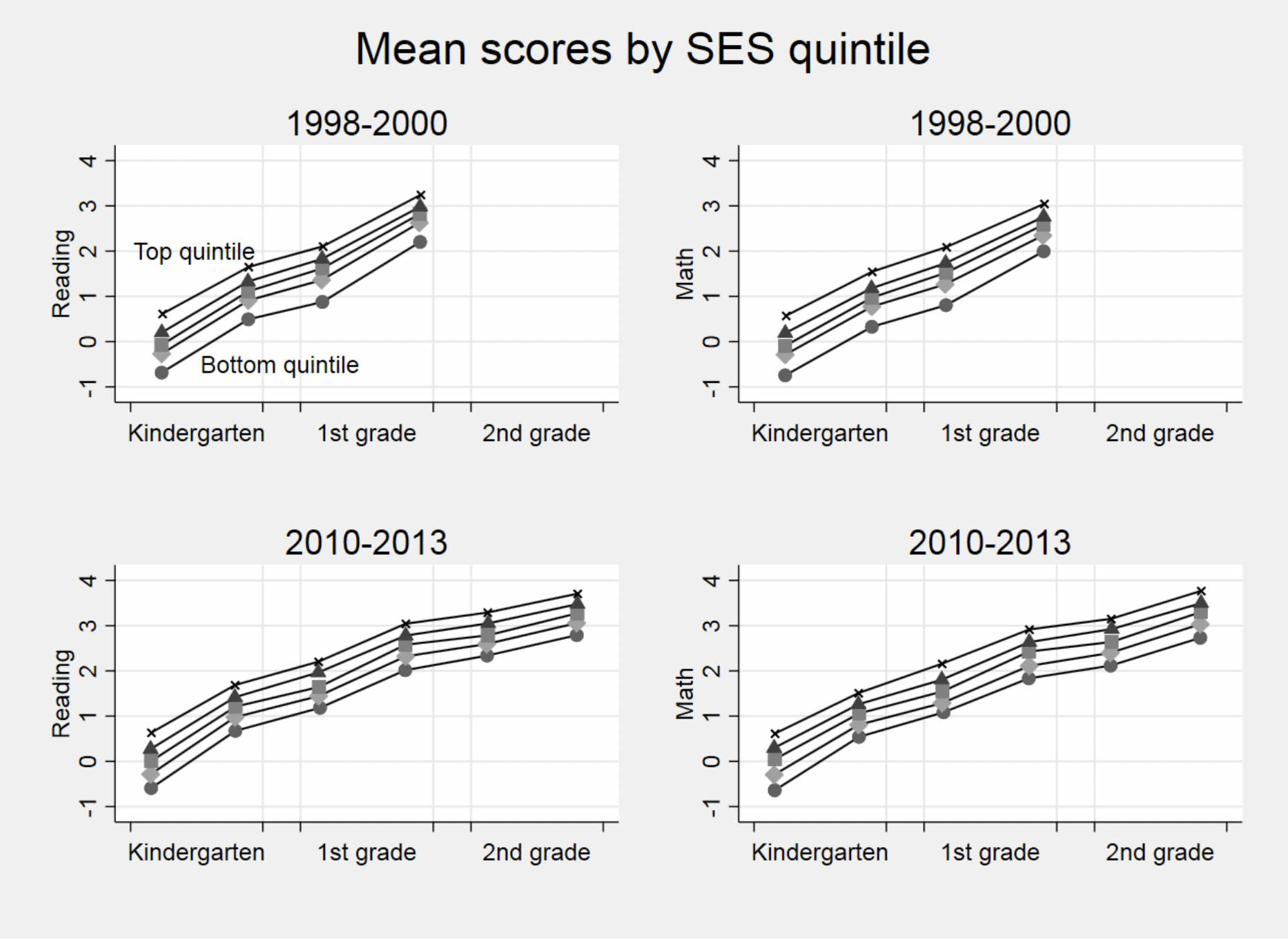

Here’s a child-level graph from our Sociology of Education article. It shows the gaps between children in different quintiles of socioeconomic status, or SES, defined using a composite of parents’ income, education, and occupational status. The data come from two different cohorts of the Early Childhood Longitudinal Study, one that started kindergarten in 1998 and one that started kindergarten in 2010.

The y-axis is in standard deviation units, measured at the start of kindergarten.

It doesn’t appear that children from any SES quintile lose much over the summer, and it doesn’t appear that the gaps between quintiles grow much over the summer, either. In fact, gaps don’t grow at all between the start of kindergarten and the end of second grade. They actually shrink a little.

The Early Childhood Longitudinal Studies stopped measuring summer learning after second grade, but they have continued to assess students through eighth grade. Eighth-grade data have been released for the older cohort, the one that started kindergarten in 1998, and they show that SES-related achievement gaps are no larger at the end of eighth grade than they were at the start of kindergarten. So it’s hard to see how summer learning could account for much of the eighth-grade achievement gap. The gap comes mainly from early childhood.

No Study Is Definitive. That’s Why Replication Is Important

All studies are limited. The Beginning School Study was limited to Baltimore in the 1980s, when the city used a poorly scaled test that was phased out by the time the study ended. The Early Childhood Longitudinal Studies were nationally representative and used a better test to follow children through the end of eighth grade—but they didn’t measure summer learning after second grade. The Growth Research Database covers every summer for hundreds of thousands of students—but it doesn’t include data on family income or free lunch status. Every study used a different test, and we’ve shown that the properties of a test can affect results as much as anything that children actually learn or forget over the summer. In addition, none of these studies actually tested children on the first and last day of summer. On average, they tested children around October and May, so researchers like me needed to extrapolate to isolate the gains or losses that were attributable to summer learning.
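To make the extrapolation problem concrete, here is a minimal sketch in Python. It is an illustration of the general idea, not the procedure used in any of the studies discussed here. It assumes that learning is linear within each school year, and every score, date, and learning rate in it is made up for the example.

```python
from datetime import date


def extrapolated_summer_change(spring_score, spring_test, fall_score, fall_test,
                               school_end, school_start,
                               rate_before, rate_after):
    """Estimate the score change between the last day of one school year
    and the first day of the next.

    rate_before and rate_after are assumed school-year learning rates,
    in score points per calendar day, before and after the summer.
    """
    # Project the spring score forward to the last day of school.
    days_to_end = (school_end - spring_test).days
    score_at_end = spring_score + rate_before * days_to_end

    # Project the fall score backward to the first day of school.
    days_since_start = (fall_test - school_start).days
    score_at_start = fall_score - rate_after * days_since_start

    return score_at_start - score_at_end


# Hypothetical child: tested on May 1 and again on October 1.
raw_change = 101.0 - 100.0  # the two test scores differ by +1 point

summer_change = extrapolated_summer_change(
    spring_score=100.0, spring_test=date(2011, 5, 1),
    fall_score=101.0, fall_test=date(2011, 10, 1),
    school_end=date(2011, 6, 10), school_start=date(2011, 8, 25),
    rate_before=0.05, rate_after=0.05,  # assumed points per day
)

print(f"Raw spring-to-fall change: {raw_change:+.2f}")
print(f"Extrapolated summer change: {summer_change:+.2f}")  # about -2.85
```

In this made-up case the raw scores show a one-point gain between tests, but the extrapolated summer change is a loss of nearly three points, because part of each school year falls between the two test dates. The estimate depends on the assumed school-year learning rates, which is one reason different studies can reach different conclusions.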

None of these limitations justifies citing findings selectively, or acting as though the oldest study is definitive. No study is definitive. That’s why replication is important.

The only way to deal with a set of discrepant findings, each imperfect or incomplete, is to look for results that replicate. And the only result that’s replicated in the summer learning literature is that nearly all children learn more slowly over the summer than during the school year.

Policy Implications

In his comment, Alexander suggests that presenting these results, and raising doubts about how much we know about summer learning, is somehow harmful to summer learning programs and the children who participate in them.

This kind of comment isn’t uncommon, but it makes me uncomfortable because it blurs the line between advocacy and research. We shouldn’t start with a set of favored policies and programs and then squelch research that seems inconvenient. Instead, we should start with the research, review it thoroughly, openly, and impartially, and then decide what implications it has for programs and policies. Children should benefit from that process.

I’m generally supportive of efforts to increase summer learning. I serve on the research advisory board of the National Summer Learning Association, and I’m also a board member of a large nonprofit that runs a summer reading program. I encourage both organizations to take a broad view of the summer learning literature, acknowledge uncertainties, and subject summer programs and policies to rigorous evaluation.

Although my research hasn’t changed my support for summer learning programs, it has changed how I think about what they can do. I’m no longer sure that children fall off a cliff in the summer. But I’m pretty sure that most children make little progress uphill. And that’s enough. Because it means that the summer offers an opportunity—an opportunity for children who are behind to catch up, and an opportunity for children who are on track to learn something new.

Back when I believed that summer learning was the main cause of the achievement gap, I thought that summer learning programs should have dramatic effects. So I was puzzled and disappointed that the effects of most summer learning programs are rather modest. That makes more sense to me now. If summer learning programs aren’t preventing achievement gaps from widening, but instead trying to narrow gaps that have persisted since early childhood, then summer learning programs are going to have many of the same challenges as other attempts to shrink the achievement gap.

Since most summer programs last six weeks or less, it’s going to take several summers for even an effective program to make a noticeable and lasting dent in the achievement gap. But programs should try, and I hope the best ones succeed.

Paul T. von Hippel is an associate professor in the LBJ School of Public Affairs at the University of Texas at Austin.