Following are my responses to issues raised by Alan Ginsburg concerning my Education Next article, “The Case Against Rhee: How Persuasive Is It?”

Adjusting for National Trends

Ginsburg: “Crucial to Peterson’s claims is that the DC score improvement should be computed only as the excess above the national average NAEP gain…. This criticism makes little sense.”

Reply: Should we adjust for national trends when assessing how well a particular district did over a specific period? In Ginsburg’s view, the nation is too heterogeneous to be commonly affected by the financial crisis of 2007, the impact of No Child Left Behind (NCLB), or some other broad national trend. Yet trends within districts more often parallel national trends than diverge from them, which is why the adjustment I made is routinely undertaken when estimating impacts.

But in this case there is a more specific reason for adjusting for national trends: the variability in NAEP’s own test. Although NAEP attempts to standardize its test from one administration to the next, it works harder to do so for the Long-Term Trend version of NAEP (LTT) than for the main NAEP (MAIN), on which Ginsburg relies for his conclusions.

MAIN measures student performance in grades 4 and 8, while LTT measures student performance at ages 9, 13 and 17. LTT is the preferred measure for estimating trends, because age-dependent developmental factors affect student performance, and one cannot be sure that the ages of students in 4th and 8th grade remain constant over time.

None of this would matter much were it not for the fact that the two tests have been yielding divergent results. As Brookings scholar Tom Loveless has pointed out to me, 4th graders are making spectacular gains on MAIN, but those gains have not been duplicated on LTT. Between 1990 and 2009, 4th graders gained 27 points on the MAIN math test (just 7 points less than the size of the gap between DC and U.S. performance in 2000), but they gained only 13 points on the LTT. For 8th graders, the math gains were 21 points on MAIN but only 11 points on LTT. We don’t know whether MAIN was made easier during this period or LTT more challenging (though, as I said, LTT is designed specifically to yield a stable measure over time). Either way, any look at trends over time needs to adjust for likely variation in the design and administration of MAIN.

The best available solution is to examine the extent to which changes in district performance close the gap between the district and the nation, as I have done. Ginsburg argues against such an adjustment, saying it “makes little sense,” but then, within the same paragraph, engages in an analysis similar to the one I have recommended: “For math…DC gains at grade 4 were higher than any state” over the full 2000-07 period.

Such comparisons need to be carried out, not anecdotally, as in Ginsburg’s comment, but systematically, as I have done, by looking at the extent to which DC closed the gap between its performance and that of the nation.
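The gap-closing comparison can be sketched in a few lines. This is a minimal illustration, not the calculation behind the article: the function name `gap_closed_per_year` and all scores below are hypothetical placeholders. The sketch shows that a uniform shift in test difficulty (for example, an administration that raises every jurisdiction’s score by the same amount) inflates a raw gain but drops out of the gap-closing measure.

```python
def gap_closed_per_year(dc_start, dc_end, us_start, us_end, years):
    """Points of the DC-national gap closed per year.

    Equivalent to DC's gain minus the national gain, divided by the
    number of years in the period. Positive values mean DC gained
    ground on the nation.
    """
    gap_start = us_start - dc_start
    gap_end = us_end - dc_end
    return (gap_start - gap_end) / years


# Hypothetical scores (NOT actual NAEP results), for illustration only.
dc_2007, dc_2009 = 210.0, 220.0
us_2007, us_2009 = 240.0, 244.0

raw_gain = dc_2009 - dc_2007
closed = gap_closed_per_year(dc_2007, dc_2009, us_2007, us_2009, years=2)

# Hypothetical drift: the test becomes uniformly easier, adding 5 points
# to every 2009 score, DC and national alike.
drift = 5.0
raw_gain_drifted = (dc_2009 + drift) - dc_2007
closed_drifted = gap_closed_per_year(
    dc_2007, dc_2009 + drift, us_2007, us_2009 + drift, years=2
)

print(raw_gain, raw_gain_drifted)   # 10.0 15.0
print(closed, closed_drifted)       # 3.0 3.0
```

The cancellation holds only to the extent that a change in the test affects DC and the nation equally; that is the assumption behind adjusting for the national trend.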

Annual Gains

Ginsburg says he did not fail to adjust for the fact that “Rhee was in office for only two years.”

Reply: It is true that Ginsburg’s tables report annual gains, but his summary material does not. On page 8 of his paper, in the first statement of findings in the main text, under the heading “overall results,” one encounters the following words:

“For math between 2000-09, Vance accounted for 46% of the share of the total gain in NAEP scores for both grades 4 and 8, Janey 30%, and Rhee 24%.… For reading between 2003-09, Janey accounted for 65% of the total gain in NAEP scores over grades 4 and 8, and Rhee accounted for 35%.”

Beyond any shadow of a doubt, those prominent statements in his report constitute a misleading comparison that fails to adjust for the fact that “Rhee was in office for only two years.”
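One rough way to put the quoted percentages on a per-year basis is to divide each superintendent’s share of the total gain by the number of years that share covers. The sketch below assumes, for illustration only, that the shares correspond to the intervals between NAEP administrations, with Vance credited with 2000 to 2003, Janey with 2003 to 2007, and Rhee with 2007 to 2009; those year spans are an assumption, not figures stated in Ginsburg’s report. The percentages are the ones quoted above.

```python
# Shares of the total NAEP gain, as quoted from Ginsburg's report (percent).
math_shares = {"Vance": 46, "Janey": 30, "Rhee": 24}
reading_shares = {"Janey": 65, "Rhee": 35}

# ASSUMED number of years covered by each share
# (intervals between NAEP administrations; not from Ginsburg's report).
years_covered = {"Vance": 3, "Janey": 4, "Rhee": 2}


def share_per_year(shares, years):
    """Divide each share of the total gain by the years it covers."""
    return {name: share / years[name] for name, share in shares.items()}


for subject, shares in [("math", math_shares), ("reading", reading_shares)]:
    per_year = share_per_year(shares, years_covered)
    print(subject, {name: round(value, 1) for name, value in per_year.items()})
```

Under these assumed spans, Rhee’s per-year share (12 points in math, 17.5 in reading) exceeds Janey’s (7.5 and 16.25), though not Vance’s in math (about 15.3). The point is only that totals accumulated over unequal tenures are not comparable until they are put on a common time basis.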

Accurate Data

Ginsburg defends the accuracy of his data as follows: “My report clearly specifies that I used the state NAEP series because of its consistent treatment of charter schools over the full 2000-2009 period.”

Reply: Ginsburg’s “consistent treatment of charter schools” is to include the students attending them in his assessment of Rhee’s performance without informing his readers of that fact. This is no trivial matter, as nearly a third of DC students attend charter schools, which operate independently of the district.

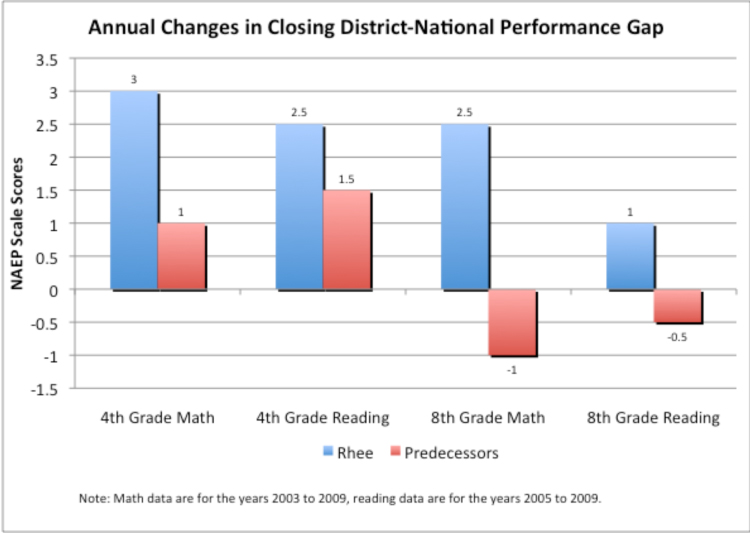

In my essay, I did my best to put to one side data for those students who were attending charter schools not authorized by the district. Another way to proceed is to remove all students attending any charter schools in the District of Columbia (no matter what entity is the authorizer). That solution, I have now learned, can be followed for the years 2003 to 2009 in math and 2005 to 2009 in reading.

The chart below displays results when all students attending charter schools are excluded from the analysis for all years for which information on charter schools is available. The chart shows the extent to which students closed the District-National performance gap annually during the years when the District was under Rhee’s Chancellorship and that of her predecessors. As can be seen, students did better under Rhee’s reign in both 4th and 8th grade reading and math.

Retraction

Ginsburg now seems prepared to agree with me that the case against Rhee has yet to be established. He says that “longitudinal tracking of students is essential to estimating DC gains.” Inasmuch as Ginsburg never had the data to do the “longitudinal tracking” that he now admits is “essential,” he has, in essence, retracted his original claims.

– Paul E. Peterson