In its release of SIG data, the U.S. Department of Education provided only comparisons between SIG schools and statewide averages. As I mentioned in Friday’s post, that’s not a very revealing comparison: SIG schools are, by definition, extremely low-performing and have much more room for improvement than the average school in the state.

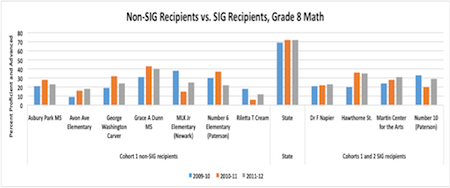

Since Secretary Duncan visited New Jersey for the data release, we decided to do a quick New Jersey analysis of our own, something we thought might be edifying. We compared the performance of SIG schools to the performance of schools that applied for SIG (and were eligible) but didn’t receive awards. In other words, we compared schools that were similarly low-performing at the start, where one set received the intervention and the other did not. We looked at math scores and focused on schools with an eighth grade (high schools take a very different test in New Jersey).

As you can see, at least based on a quick analysis of one state’s data, it’s hard to make the case that this massive program had a transformative influence on the state’s most troubled schools. There’s just not all that much difference in the changes between schools that were SIG-eligible but lost and schools that were SIG-eligible and won. And we certainly don’t see any major turnarounds.
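For readers who want to try the same kind of comparison on their own state's data, here is a minimal sketch of the arithmetic. It assumes each school is reduced to a math proficiency rate before and after the grant period. The field names (`before`, `after`), the function names, and the numbers are hypothetical placeholders, not our actual New Jersey figures, and this is a rough illustration rather than the exact procedure we used.

```python
from statistics import mean


def mean_change(schools):
    """Average change in math proficiency (after minus before) across schools."""
    return mean(s["after"] - s["before"] for s in schools)


def compare_groups(awarded, not_awarded):
    """Compare average score changes for SIG winners vs. eligible non-winners."""
    awarded_change = mean_change(awarded)
    not_awarded_change = mean_change(not_awarded)
    return {
        "awarded_change": awarded_change,
        "not_awarded_change": not_awarded_change,
        # Positive gap: winners improved more than eligible non-winners.
        "gap": awarded_change - not_awarded_change,
    }


# Placeholder values for illustration only; NOT real New Jersey data.
awarded = [
    {"before": 30.0, "after": 36.0},
    {"before": 25.0, "after": 27.0},
]
not_awarded = [
    {"before": 28.0, "after": 33.0},
    {"before": 32.0, "after": 33.0},
]

result = compare_groups(awarded, not_awarded)
print(result)
```

A small gap between the two groups is what "not all that much difference" looks like in numbers. A simple comparison of averages like this doesn't adjust for differences between the schools that won and those that didn't, which is one reason a more sophisticated analysis is still needed.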

The Department’s research arm is going to do a more sophisticated analysis along these lines at some point. But based on what we’re seeing here, those findings may not be all that much more heartening than what we saw on Friday.

—Andy Smarick

This post originally appeared on the Fordham Institute’s Flypaper blog.