On Tuesday, May 22, 2018, EdNext hosted “Are State Proficiency Standards Falling?” at the Johnson Center at the Hoover Institution in Washington, D.C.

The Every Student Succeeds Act (ESSA), passed into law in 2015, explicitly prohibits the federal government from creating incentives to set national standards. The law represents a major departure from recent federal initiatives, such as Race to the Top, which beginning in 2009 encouraged the adoption of uniform content standards and expectations for performance. At one point, 46 states had committed themselves to implementing Common Core standards designed to ensure consistent benchmarks for student learning across the country. But when public opinion turned against the Common Core brand, numerous states moved to revise the standards or withdraw from them.

Although early indications are that most state revisions of Common Core have been minimal, the retreat from the standards carries with it the possibility of a “race to the bottom,” as one state after another lowers the bar that students must clear in order to qualify as academically proficient. The political advantages of a lower hurdle are obvious: when it is easier for students to meet a state’s performance standards, a higher percentage of them will be deemed “proficient” in math and reading. Schools will appear to be succeeding, and state and local school administrators may experience less pressure to improve outcomes. The ultimate scenario was lampooned by comedian Stephen Colbert: “Here’s what I suggest: instead of passing the test, just have kids pass a test … Eventually, we’ll reach a point when ‘math proficiency’ means, ‘you move when poked with a stick,’ and ‘reading proficiency’ means, ‘your breath will fog a mirror.’” A reader of the Dallas Morning News saw nothing funny about the situation: “Tougher standards for students and teachers are a must if the U.S. is to avoid becoming a Third World economy.”

So, has the starting gun been fired on a race to the bottom? Have the bars for reaching academic proficiency fallen as many states have loosened their commitment to Common Core? And, is there any evidence that the states that have raised their proficiency bars since 2009 have seen greater growth in student learning?

In a nutshell, the answers to these three questions are no, no, and, so far, none.

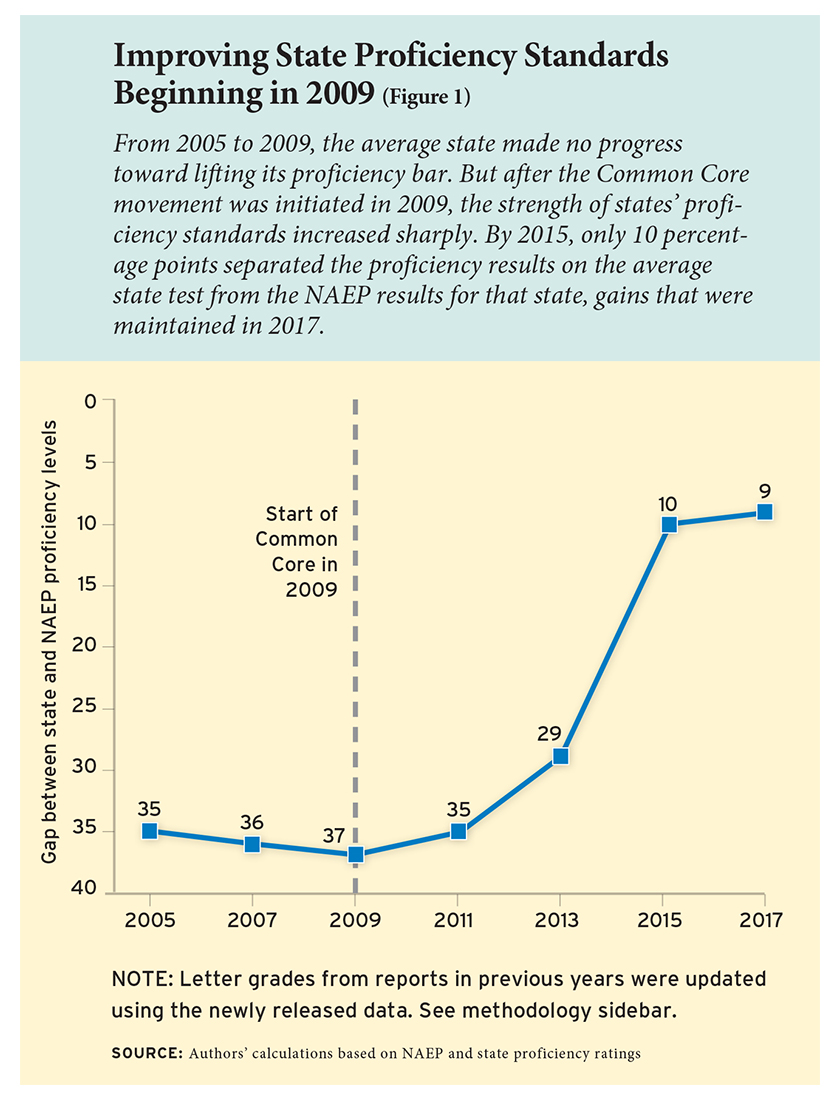

On average, state proficiency standards have remained as high as they were in 2015. And they are much higher today than they were in 2009 when the Common Core movement began. That year, the percentage of students found to be proficient in math and reading on state exams was 37 percentage points higher than on the National Assessment of Educational Progress (NAEP), an exam that is widely recognized as maintaining a high bar for academic proficiency. By 2015, that gap had narrowed to just 10 percentage points. Now, recently released data for 2017 reveal a difference of only 9 percentage points.

The news is not all good. Even though states have raised their standards, they have not found a way to translate these new benchmarks into higher levels of student test performance. We find no correlation at all between a lift in state standards and a rise in student performance, which is the central objective of higher proficiency bars. While higher proficiency standards may still serve to boost academic performance, our evidence suggests that day has not yet arrived.

Initiatives to Raise State Standards

Differences among the states in their expectations for students became apparent in 2002 with the enactment of No Child Left Behind (NCLB). The law required states to administer examinations to students in grades 3 to 8 (and once during high school) in both math and reading. It also asked each state to set a performance bar for its tests that defined student proficiency at each grade level. This achievement level varied widely from one state to the next. Little Roberto and young Kaitlin could become “proficient” simply by moving from New Mexico, a state with high standards, to Arizona, a state with mediocre ones. By 2009, Massachusetts, Missouri, Hawaii, and Washington State had also set their proficiency bars at levels approaching established national benchmarks. But numerous states, including New York, Illinois, Texas, and, most especially, Tennessee, Alabama and Nebraska, had set much lower targets.

That same year, the National Governors Association and the Council of Chief State School Officers received funding from the Bill and Melinda Gates Foundation to set national education standards at each grade level. This effort to establish uniform learning aspirations and goals came to be known as the Common Core State Standards. The standards prescribed the math and reading content that students should master at each grade level, not the level required to demonstrate proficiency. Even so, a major goal was to raise expectations for proficiency in math and reading across the nation—the reasoning being that once states defined what all students should learn at a given grade level, they could devise rigorous assessments to test how well they have learned the material. In other words, content standards and proficiency standards go hand in hand.

The effort to develop national content standards was given a boost by the Obama administration’s Race to the Top initiative, which offered a total of $4.35 billion in grants through a competitive process that gave an edge to states proposing to implement a variety of reforms, including the adoption of standards akin to the ones promoted by Common Core.

Race to the Top is generally thought to have motivated many states to implement the Common Core, though the Obama administration denied direct involvement, maintaining that the enterprise was strictly state-driven. What is certain is that all but four states (Texas, Virginia, Nebraska, and Alaska) ended up adopting the standards. Yet growing criticisms of the Common Core standards by an unusual alliance of teachers’ organizations and Tea Party enthusiasts greatly weakened political support for the standards. The federal government, under ESSA, now prohibits federal incentives that could facilitate the adoption of national standards. In addition, many states either formally withdrew from the standards or announced their intention to revise them. Just what constitutes a withdrawal or a revision has become a matter of contention even among apparently neutral observers. According to Abt Associates, three states had withdrawn and another 23 either revised or were reported to have expressed an intention to revise the standards as of January 2017. But according to a September 2017 account in Education Week, only 10 states had withdrawn or undertaken a “major revision” of the standards.

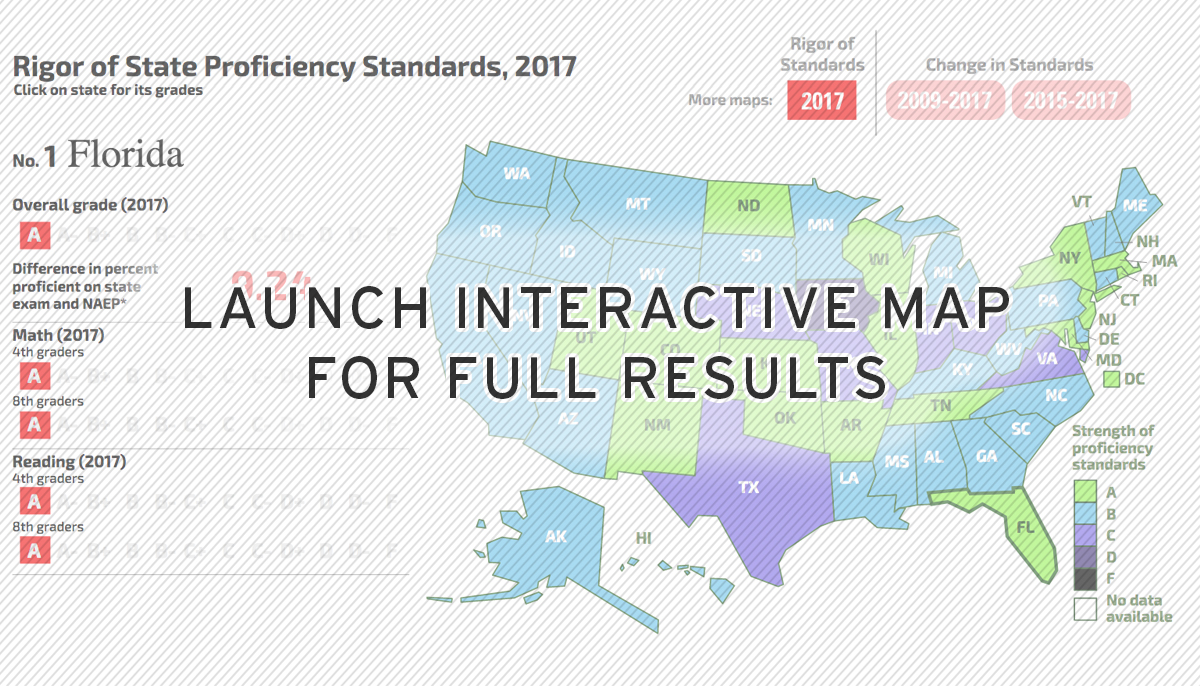

Grading States on Proficiency Standards

Given the controversy over the number of states that have moved away from the content standards that comprise the Common Core, it is all the more important to observe empirically what is happening to state proficiency standards. Since 2005, researchers at Education Next have graded state proficiency standards on an A–F scale. To generate these letter grades, we compare the percentage of students identified as proficient in reading and math on state assessments to the percentage of students so labeled on the more-rigorous NAEP. Administered by the U.S. Department of Education, NAEP is widely considered to have a high bar for proficiency in math and reading. Because representative samples of students in every state take the same set of examinations, NAEP provides a robust common metric for gauging student performance across the nation and for evaluating the strength of state-level measures of proficiency. The higher the percentage of students found proficient on the exam of a particular state, compared to the percentage so identified by NAEP, the lower the state’s proficiency standard is judged to be. In 2017, nine states had set such a high bar that they reported a slightly lower percentage of proficient students than was reported by NAEP, earning these states an A in our grading system. We also give an A grade to states whose proficiency levels are closely aligned with NAEP’s. When a much higher percentage of students are found proficient on a state exam than on the NAEP test, then the grade falls—sometimes so dramatically that some states in previous years have received an F. Our analysis looks at only the percentage of students who are deemed proficient on the state exams, not the content of the exams or the courses taught at each grade level.

NAEP is administered to representative samples of students in each state every two years in grades 4 and 8 in math and reading. In these years, comparison data for state and NAEP tests are available for participating students. The 2017 results were released in April of this year. After computing percentage differences between state and NAEP proficiency levels, we determine how much each state’s difference is above or below the average difference for all states over eight years (2003, 2005, 2007, 2009, 2011, 2013, 2015, and 2017) for which both state and NAEP data are available. When assigning letter grades, we use a curve for the current year and also update previous years’ letter grades to reflect the current status of state standards indicated by newly released data. Since new data are used to calculate grades from both the contemporary period and for prior years, a state’s grade in one year may differ from the grade given in our previous reports. For example, the grades for 2009 reported here differ from those Education Next researchers reported in 2010 because average state standards have risen since then. (See sidebar for further details on methodology.)
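The comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' actual procedure: the function names are hypothetical, the state figures are invented purely for demonstration, and pooling every state-year into a single standard deviation is a simplifying assumption.

```python
from statistics import mean, pstdev


def proficiency_gap(state_pct, naep_pct):
    """Percentage-point gap: share proficient on the state exam minus share proficient on NAEP."""
    return state_pct - naep_pct


def standardized_gaps(gaps_by_state_year):
    """Express each gap in standard deviations from the pooled average gap.

    The sign is flipped so that a smaller gap (a tougher state standard)
    produces a larger, better score.
    ASSUMPTION: one pooled standard deviation across all state-years.
    """
    values = list(gaps_by_state_year.values())
    avg = mean(values)
    sd = pstdev(values)
    return {key: (avg - gap) / sd for key, gap in gaps_by_state_year.items()}


# HYPOTHETICAL inputs for illustration only; these are not real state results.
gaps = {
    ("State A", 2009): proficiency_gap(80.0, 30.0),
    ("State A", 2017): proficiency_gap(42.0, 36.0),
    ("State B", 2009): proficiency_gap(60.0, 35.0),
    ("State B", 2017): proficiency_gap(45.0, 38.0),
}
scores = standardized_gaps(gaps)

for key, score in sorted(scores.items()):
    print(key, round(score, 2))
```

Because every state-year is measured against the same pooled average, adding a new year of data shifts that average, which is why grades for earlier years can change from one report to the next.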

Maintaining High Standards

Comparing state exams to NAEP, we are able to identify changes in states’ proficiency bars over time. Figure 1 displays the change in the average state proficiency level between 2005 and 2017 relative to NAEP. From 2005 to 2009, the states, on average, made no progress toward lifting their proficiency bars. While there was no “race to the bottom,” neither was there any trend toward setting higher expectations. But in 2009, when the Common Core movement was initiated, and shortly thereafter, when Race to the Top nudged states to adopt the standards, many states began using exams more closely aligned with the Common Core. The strength of states’ proficiency standards increased sharply so that by 2015 only 10 percentage points separated the average state proficiency bar from the NAEP standard.

In 2017, the large leap forward endured. State proficiency standards not only avoided the expected slip many had feared in the wake of ESSA’s passage in 2015, but they improved slightly between 2015 and 2017 and now show only an average lag of 9 percentage points relative to NAEP.

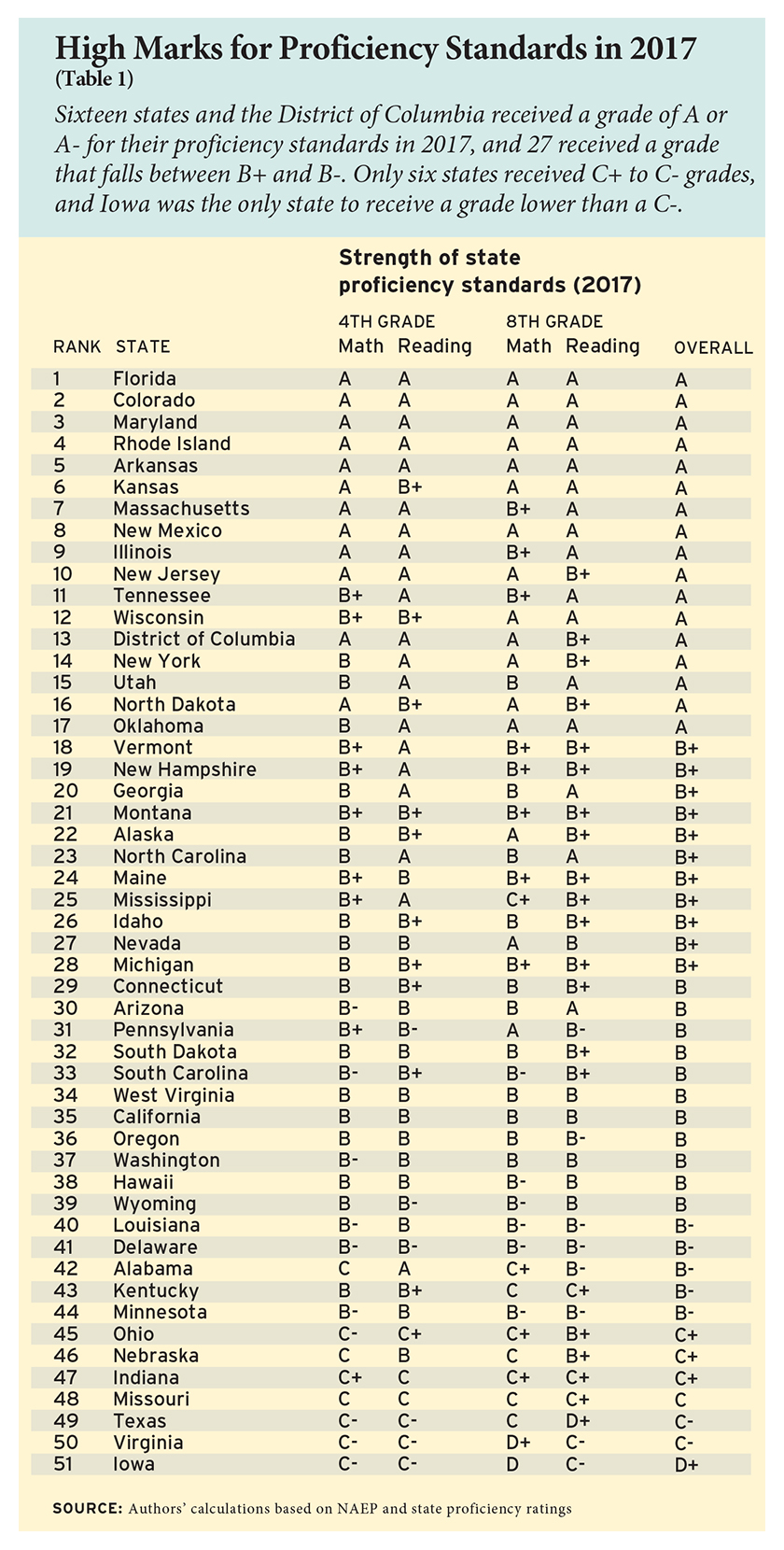

When the Common Core initiative began in 2009, not a single state achieved, by today’s standards, an A for having a proficiency bar tightly aligned with NAEP, and only Massachusetts and Missouri received B+ or B grades. Six years later, dramatic progress had taken place, with 16 states receiving A grades and 27 others receiving grades in the range of B+ to B-. That trend has held up and has even drifted slightly upward by 2017. Table 1 shows these latest results. Sixteen states and the District of Columbia receive a grade of A or A-, and 27 receive a grade that falls between B+ and B-. Only six states received C+ to C- grades, while Iowa was the only state to receive a grade lower than a C-. By contrast, 29 states were awarded a D+ grade or lower for the proficiency bar they set in 2009.

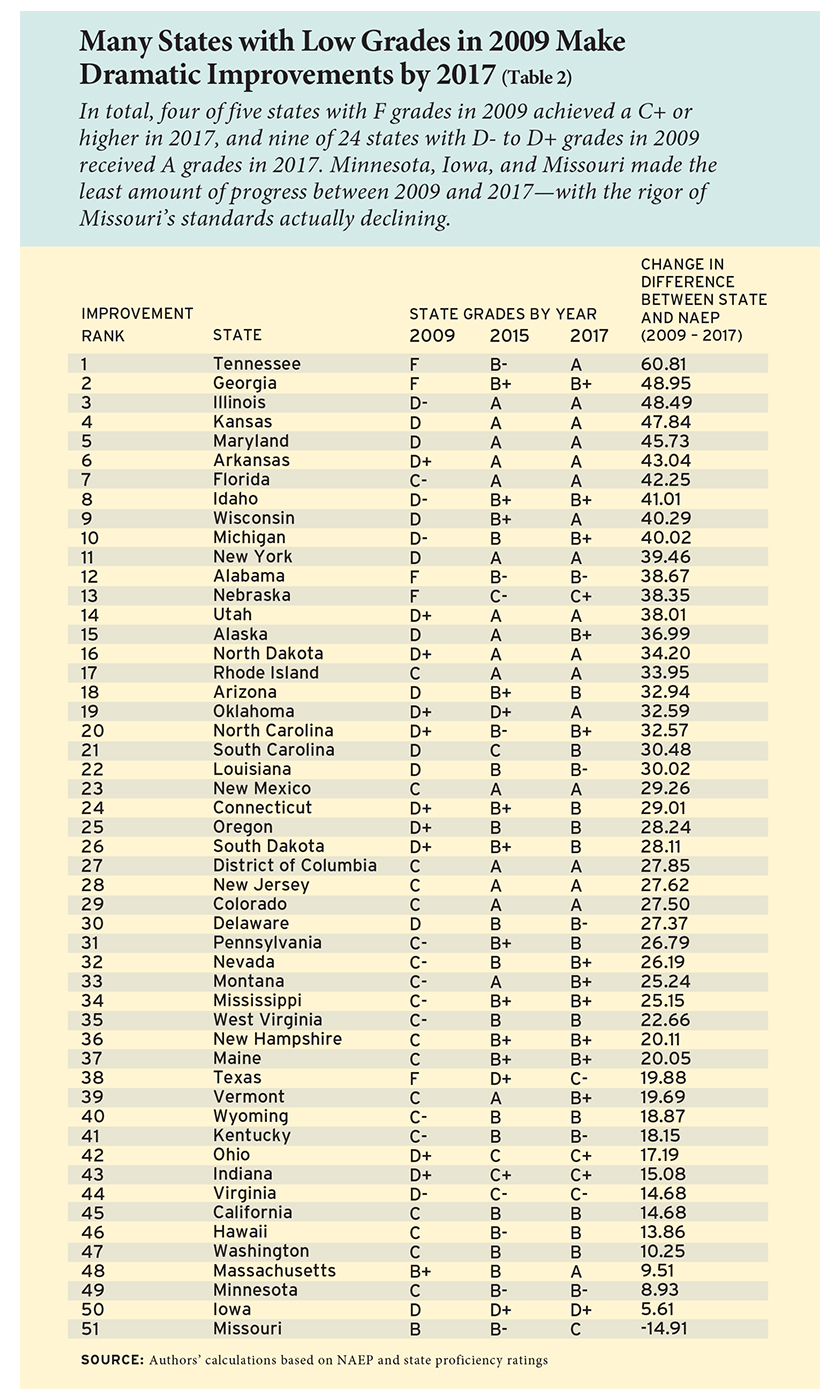

Table 2 shows that a number of states have made particularly dramatic improvements. Tennessee, for instance, skyrocketed from an F grade in 2009 to an A in 2017. Illinois went from a D- to an A and Georgia from an F to a B+. In total, 4 of 5 states with F grades in 2009 achieved a C+ or higher in 2017, and 9 of 24 states with D- to D+ grades in 2009 received A grades in 2017. Three Midwestern states—Missouri, Iowa, and Minnesota—made the least amount of progress, with Missouri being the one state in the nation to see its proficiency gap with NAEP widen between 2009 and 2017.

Improved Proficiency Standards and Test-Score Growth

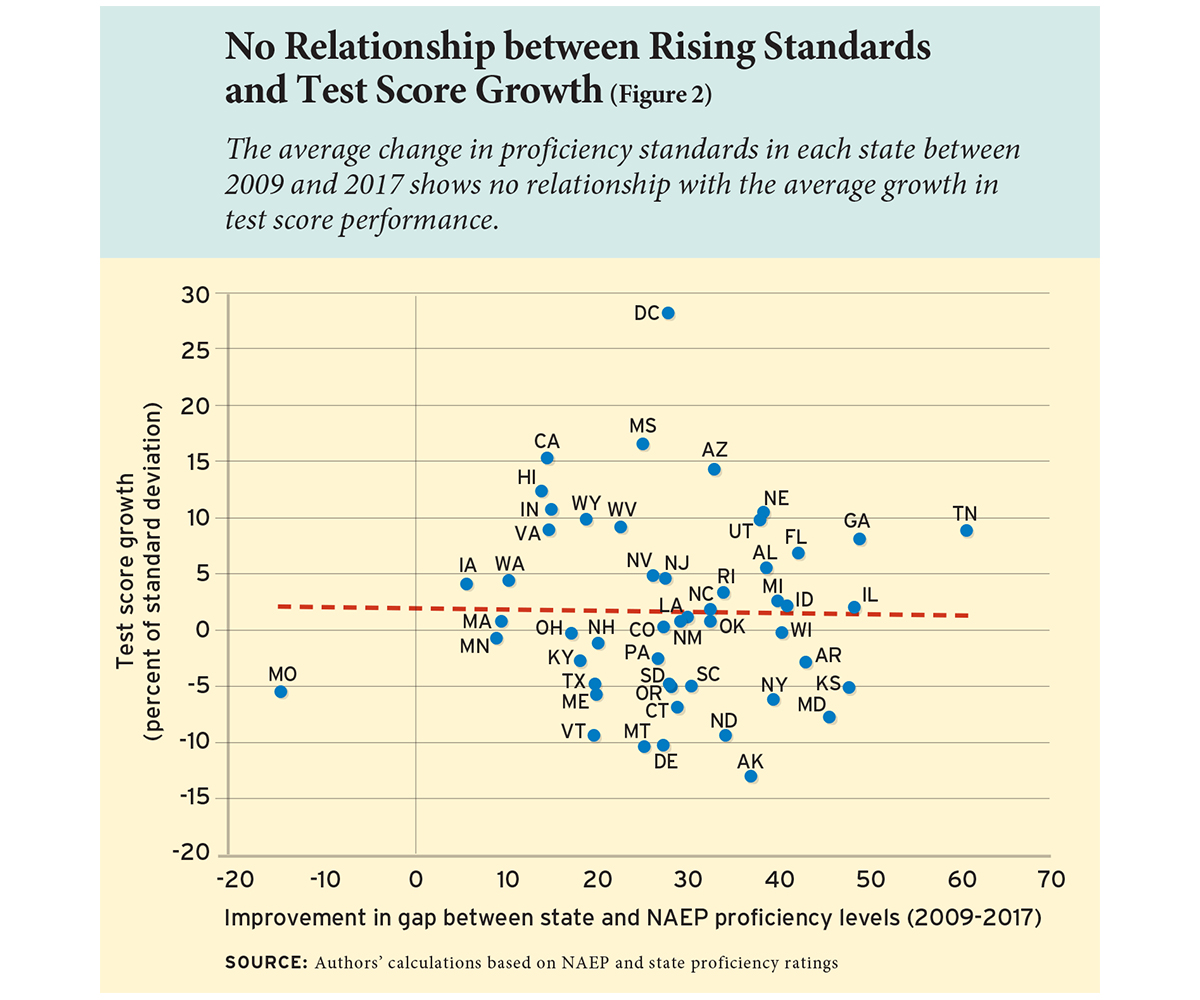

Supporters of higher proficiency standards expect them to lead to improved student achievement. As the Common Core website puts it: “State school chiefs and governors recognized the value of consistent, real-world learning goals and launched this effort to ensure all students, regardless of where they live, are graduating high school prepared for college, career, and life.” To determine whether the rise in state proficiency standards between 2009 and 2017 has translated into improvements in student learning, we looked at the relationship between changes in standards and changes in NAEP performance (test-score growth) over this time period. We calculated growth in student performance for each state between 2009 and 2017 on the NAEP reading and math exams administered to students in 4th and 8th grade. (To make comparisons across the four exams, we calculate growth in standard deviations.)

Figure 2 displays the relationship between the average change in proficiency standards in each state between 2009 and 2017 and the average amount of growth in test-score performance. The nearly flat line in the figure reveals virtually no relationship between rising proficiency standards and test-score growth over this time period. These results, while disheartening, do not prove that state standards are ineffective. Test-score growth could have been impeded by the Great Recession of 2008–09 and concomitant declining school expenditures, or rising pension and medical costs that deflected financial resources from the classroom, or the end of the NCLB accountability system, or any one (or combination) of many other factors that may impinge upon student learning. It is also possible that the impact of rising standards is not yet visible. After all, it took years to design and implement the complex Common Core standards, and it may take still more time for high standards to have measurable impacts on student learning. For Common Core supporters, the most hopeful element in Figure 2 is the placement of the state of Tennessee. Tennessee has been touted for its faithful implementation of higher standards (even though the state revised its Common Core State Standards), and it has also experienced both rising standards and moderately improved student performance over the past eight years. Although no firm conclusions can be drawn from any one state’s results, neither should the data presented here be treated as a signal that the campaign for higher standards is a failure. The final ending to this tale remains to be written.
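A "nearly flat line" of this kind corresponds to a fitted slope and a correlation coefficient that are both close to zero. The sketch below shows how those two quantities could be computed. It assumes a simple least-squares fit, which the article does not specify, and the five data points are hypothetical, not the values plotted in Figure 2.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def ols_slope(xs, ys):
    """Slope of the least-squares line predicting ys from xs."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


# HYPOTHETICAL data: change in proficiency standard (percentage points)
# and NAEP test-score growth (standard deviations) for five made-up states.
standard_change = [5.0, 10.0, 20.0, 30.0, 40.0]
score_growth = [0.05, -0.02, 0.04, -0.01, 0.03]

r = pearson_r(standard_change, score_growth)
slope = ols_slope(standard_change, score_growth)
print(f"r = {r:.3f}, slope = {slope:.5f}")
```

A value of r near zero says only that the two changes do not move together across states. As the text notes, it does not establish that higher standards have no effect.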

The Direction of Proficiency Standards

At present, student proficiency standards in most states are closely aligned with rigorous national proficiency standards as set by NAEP. The relatively close alignment between state and national assessments represents a major improvement from 2009 when the Common Core initiative began. Although 46 states adopted the standards, the introduction of ESSA has given states more freedom to determine how to test students and it prevents the federal government from encouraging national standards. While some states have withdrawn from Common Core or revised the standards, thus far these moves do not appear to have weakened state proficiency standards. Even so, the primary driving force behind raising the bar for academic proficiency is to increase academic achievement, and it appears that education leaders have not figured out how to translate high expectations into greater student learning.

Daniel Hamlin is a postdoctoral fellow at the Program on Education Policy and Governance (PEPG) at the Harvard Kennedy School. Paul E. Peterson is professor of government at Harvard University, PEPG director, and senior editor of Education Next.

Method for Grading the States

Since 2003, a representative state-level sample of 4th- and 8th-grade students has taken the National Assessment of Educational Progress (NAEP) every other year (2003, 2005, 2007, 2009, 2011, 2013, 2015, and 2017). To grade states on the rigor of their proficiency levels, we compare the percentage of a state’s students labeled “proficient” on NAEP to the percentage of students identified as “proficient” on state examinations in math and reading for 4th- and 8th-grade students over the eight years for which data are available for the two tests.

Education Next has graded the states every other year beginning in 2005. For each report, we calculate state grades using previous years’ data as well as newly released data from the most recent year. After computing the percentage difference between the NAEP and state exams, we calculate the standard deviation of this difference for each year. We then determine how many standard deviations each state’s difference is above or below the average difference for all states for all available years. In applying new data to the grading scale, we not only determine state grades for the current year of analysis but also update state grades from previous years to reflect the present status of standards indicated by newly released data. We also updated data for several states, collecting previously unreported state-level student proficiency rates from prior years.

The grading scale for state grades is set so that if marks were randomly assigned in a normal distribution for all eight years, 10 percent of the states would earn an A, 20 percent a B, 40 percent a C, 20 percent a D, and 10 percent an F. We do not require the meeting of any stipulated cutoff in differences with the NAEP standard to award a specific grade. Instead, we rank states against each other in accordance with their current position in the distribution of state and NAEP differences for all eight years.

When the U.S. Department of Education used an alternative method to estimate 2007 state proficiency standards, its results were highly correlated with the EdNext results at the 0.85 level (see Paul E. Peterson, “A Year Late and a Million (?) Dollars Long—the U.S. Proficiency Standards Report,” Education Next Blog, August 22, 2011).

A Sample Calculation

To illustrate how we calculate state grades, consider Idaho. In 2017, the state reported that 47 percent of its 4th-grade students were proficient on the state examination in math. However, only 40 percent of Idaho’s 4th-grade students scored as proficient on NAEP. The difference of 7 percentage points between the Idaho and NAEP exams is better than the average difference of 24 percentage points observed for all states over eight years on 4th-grade math. As a result, Idaho’s difference is approximately 1 standard deviation better than the average difference between state and NAEP 4th-grade math exams for all states over eight years, earning the state a letter grade of B for the strength of its proficiency standards for 4th-grade math.
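The Idaho example can be reproduced with a short Python sketch. It rests on two assumptions that the sidebar does not state. First, the standard deviation of 17 percentage points is only implied by the phrase "approximately 1 standard deviation" (24 minus 7). Second, the letter-grade cutoffs are taken to be the normal-distribution quantiles that yield the 10/20/40/20/10 split, with plus and minus modifiers ignored. The function and variable names are hypothetical.

```python
from statistics import NormalDist

# Target shares from the sidebar: 10% A, 20% B, 40% C, 20% D, 10% F.
# ASSUMPTION: cutoffs are the matching quantiles of a standard normal distribution.
_STD_NORMAL = NormalDist()
CUTOFFS = [
    ("A", _STD_NORMAL.inv_cdf(0.90)),  # top 10 percent
    ("B", _STD_NORMAL.inv_cdf(0.70)),  # next 20 percent
    ("C", _STD_NORMAL.inv_cdf(0.30)),  # middle 40 percent
    ("D", _STD_NORMAL.inv_cdf(0.10)),  # next 20 percent
]


def letter_grade(z):
    """Map a standardized score (higher means a tougher standard) to a letter grade."""
    for grade, cutoff in CUTOFFS:
        if z >= cutoff:
            return grade
    return "F"


# Figures from the sidebar: Idaho, 4th-grade math, 2017.
state_pct, naep_pct = 47.0, 40.0
average_gap = 24.0   # average state-NAEP gap over eight years
assumed_sd = 17.0    # ASSUMPTION: implied by "approximately 1 standard deviation"

gap = state_pct - naep_pct            # 7 percentage points
z = (average_gap - gap) / assumed_sd  # smaller gap gives a higher score
idaho_grade = letter_grade(z)
print(gap, round(z, 2), idaho_grade)  # prints: 7.0 1.0 B
```

Under these assumptions a score of 1.0 falls between the 70th and 90th percentile cutoffs (roughly 0.52 and 1.28), which matches the B reported for Idaho.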

This article appeared in the Fall 2018 issue of Education Next. Suggested citation format:

Hamlin, D., and Peterson, P.E. (2018). Have States Maintained High Expectations for Student Performance? An analysis of 2017 state proficiency standards. Education Next, 18(4), 42-49.