The House of Representatives voted yesterday on the reauthorization of the Opportunity Scholarship Program (OSP), which has paid the private-school tuition of low-income students in the District of Columbia since 2004. As the only federally funded school voucher program in the country and a policy important to outgoing Speaker John Boehner, OSP has engendered significant political controversy over the years. But what does the evidence say about OSP?

The limited number of OSP scholarships is distributed to eligible families via lottery. Applicants during the early years of the program were included in a federally mandated evaluation that compared students who won the voucher lottery to those who applied but lost. By comparing only students who entered the voucher lottery, researchers controlled for differences between families that apply for vouchers and those that don’t. The final report from the initial evaluation, which was released in 2010, has something in it for both OSP supporters and opponents.

Supporters can credit the voucher program with improved reading scores and high school graduation rates: 82 percent of students offered a voucher graduated from high school, compared to 70 percent of those who lost the lottery. But critics can point to the lack of an impact on math scores, the failure of the reading impact to meet the government’s standard for statistical significance (it was significant at the 90 percent confidence level but not the 95 percent level), and the fact that high school graduation information was collected from a survey of parents rather than administrative records.

Where should OSP research go from here? We offer three recommendations for future voucher research in the nation’s capital, some but not all of which are embodied in the House bill. Many of these recommendations are relevant regardless of whether the voucher program itself is continued.

First, the original study should be extended to examine administrative records on high school graduation and college enrollment rates. The initial government evaluation gathered data through 2008-09, so the graduation rate analysis is based on only about 300 students (compared with the 1,300 students from multiple grades included in the test-score analysis). Many more students are now old enough that the voucher program’s effects on their educational attainment can be tracked.

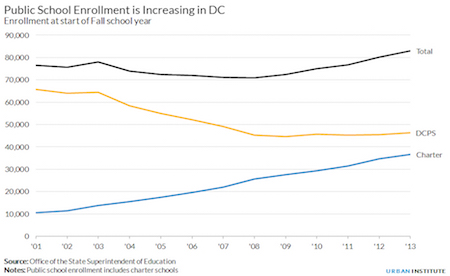

Second, more recent OSP participants should be studied. The effect of the OSP today may be different from what it was in 2004 because of the expansion in educational choices in DC over the last decade, as one of us argued in congressional testimony earlier this year. The number of charter schools has doubled, and children can apply to attend almost any public school in the city. Public school enrollment has increased by 12 percent, and private school enrollment in elementary and middle schools has decreased by half. Even if the outcomes of today’s OSP participants are similar to those of a decade ago, the outcomes of non-participants in public schools may have changed significantly as test scores and graduation rates have trended upward.

Finally, DC should better collect and organize data on other programs to compare the cost-effectiveness of OSP to other interventions aimed at low-income students. Even with high-quality evidence on OSP in hand, this comparison is made difficult by the lack of system-wide capacity to produce high-quality research on DC students.

Earlier this year, a National Academy of Sciences committee charged with evaluating education reforms in DC issued a report that included scathing comments on the difficulty of obtaining education data: “The lack of readily available data presented a significant challenge for our committee, and it is a source of frustration for some senior DCPS officials who would like to rely more heavily on data to support their decision making. More important, it is a significant gap for education governance in the city… Valuable information the city may have is either not made public or is difficult to find in education-related websites that are not coordinated.” Without a rich repository of data to draw from (like those maintained by research consortia in cities such as Chicago and New York), DC will not be in a strong position to assess the relative effectiveness of different community, school, and classroom policies and practices.

No single study will settle the political debate over an issue as contentious as school vouchers. But as public funding of private schools expands in states such as Arizona, Florida, and Nevada, more high-quality evidence on the nation’s most prominent voucher program has the potential to inform education policymaking in the capital and across the country.

—Matthew M. Chingos and Megan Gallagher

This post originally appeared on Urban Wire