The US Department of Education’s decision to revisit the gainful employment regulations, which would cut off federal aid to career training programs where students take on large debts relative to their income, has been generally cheered by the right and criticized by the left. But if policymakers can look beyond the politics, the gainful employment data provide evidence that Congress may want to rethink its approach to holding all colleges accountable, a goal both sides claim to share.

The Obama administration’s Department of Education promulgated gainful employment (GE) to protect students and taxpayers from low-quality programs, largely in the for-profit sector. Under current regulations, a program’s eligibility for federal grants and loans is tied to its graduates’ debt-to-earnings ratio. A high ratio indicates the typical program graduate is not earning enough upon graduation to justify their loan.

No programs have lost access to federal funding under GE, and the regulations may never fully take effect. But the GE data gathered in the three years since the regulation’s introduction provide useful insight for policymakers looking to address accountability more generally. Although the federal government’s main accountability lever (eligibility for federal grants and loans) is implemented only at the institution level for most of higher education, the GE data show the value of targeting individual programs rather than entire institutions.

We compared program-level and institution-level accountability by constructing institution-level GE measures for for-profit institutions, the only sector in which most students are in GE programs. For each institution, we calculated an average of its program-level debt-to-earnings metrics, weighted by the number of graduates of each program. We then applied the same rules used for the program-level metrics to determine whether an institution receives a grade of pass, zone (a rating between pass and fail), or fail. (We describe the details of the data and methodology in an appendix.)
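
For readers who want to see the mechanics, the aggregation described above can be sketched in a few lines of Python. This is only an illustration, not the code behind our analysis: the `Program` class, the function names, and the sample numbers are all hypothetical, and the cutoffs are assumed from the 2014 GE rule (pass at or below 8 percent of annual earnings or 20 percent of discretionary income, fail above 12 percent and 30 percent, zone otherwise). The real rule has additional details that the sketch does not model.

```python
from dataclasses import dataclass

# Cutoffs assumed from the 2014 GE rule; the post itself does not list them.
PASS_ANNUAL, FAIL_ANNUAL = 0.08, 0.12
PASS_DISCRETIONARY, FAIL_DISCRETIONARY = 0.20, 0.30


@dataclass
class Program:
    """Hypothetical record for one GE program."""
    graduates: int             # number of graduates, used as the weight
    annual_rate: float         # debt payments / annual earnings
    discretionary_rate: float  # debt payments / discretionary income


def weighted_mean(values, weights):
    """Average of values, weighted by weights."""
    total = sum(weights)
    if total == 0:
        raise ValueError("weights must sum to a positive number")
    return sum(v * w for v, w in zip(values, weights)) / total


def grade(annual_rate, discretionary_rate):
    """Apply the assumed pass / zone / fail cutoffs to a pair of rates."""
    if annual_rate <= PASS_ANNUAL or discretionary_rate <= PASS_DISCRETIONARY:
        return "pass"
    if annual_rate > FAIL_ANNUAL and discretionary_rate > FAIL_DISCRETIONARY:
        return "fail"
    return "zone"


def institution_grade(programs):
    """Grade an institution on graduate-weighted averages of its programs."""
    weights = [p.graduates for p in programs]
    annual = weighted_mean([p.annual_rate for p in programs], weights)
    discretionary = weighted_mean(
        [p.discretionary_rate for p in programs], weights
    )
    return grade(annual, discretionary)


# Made-up institution: one large passing program, one small failing program.
example = [
    Program(graduates=300, annual_rate=0.05, discretionary_rate=0.10),
    Program(graduates=100, annual_rate=0.15, discretionary_rate=0.40),
]

for p in example:
    print("program:", grade(p.annual_rate, p.discretionary_rate))
print("institution:", institution_grade(example))
```

In this made-up case the smaller program fails on its own, but the institution as a whole passes because the larger program pulls the weighted averages below the cutoffs. That is the kind of masking an institution-level metric can produce.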

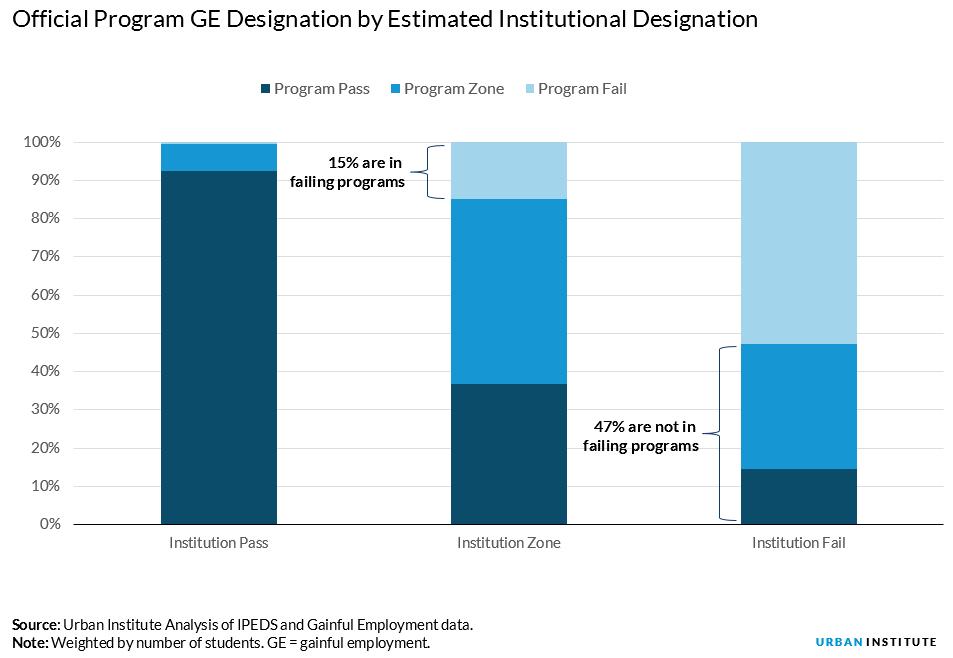

Many programs that passed scrutiny under the program-level metric would be tarred by an institution-level approach. Nearly half of students who attend institutions that would fail by an institution-level metric are in nonfailing programs. Conversely, some failing programs could escape the chopping block by being at institutions with otherwise passing programs. At institutions that would pass a GE test, less than 1 percent of students are in failing programs, but 7 percent are in programs in the at-risk zone category.

A program-level approach to accountability is consistent with a growing body of evidence indicating that earnings vary dramatically by program of study; in fact, many have argued that program of study matters more than institution. This is vividly illustrated in the gainful employment data. Nearly half of the GE programs in the visual and performing arts fail, for example, while only 6 percent of health professions and related programs fail.

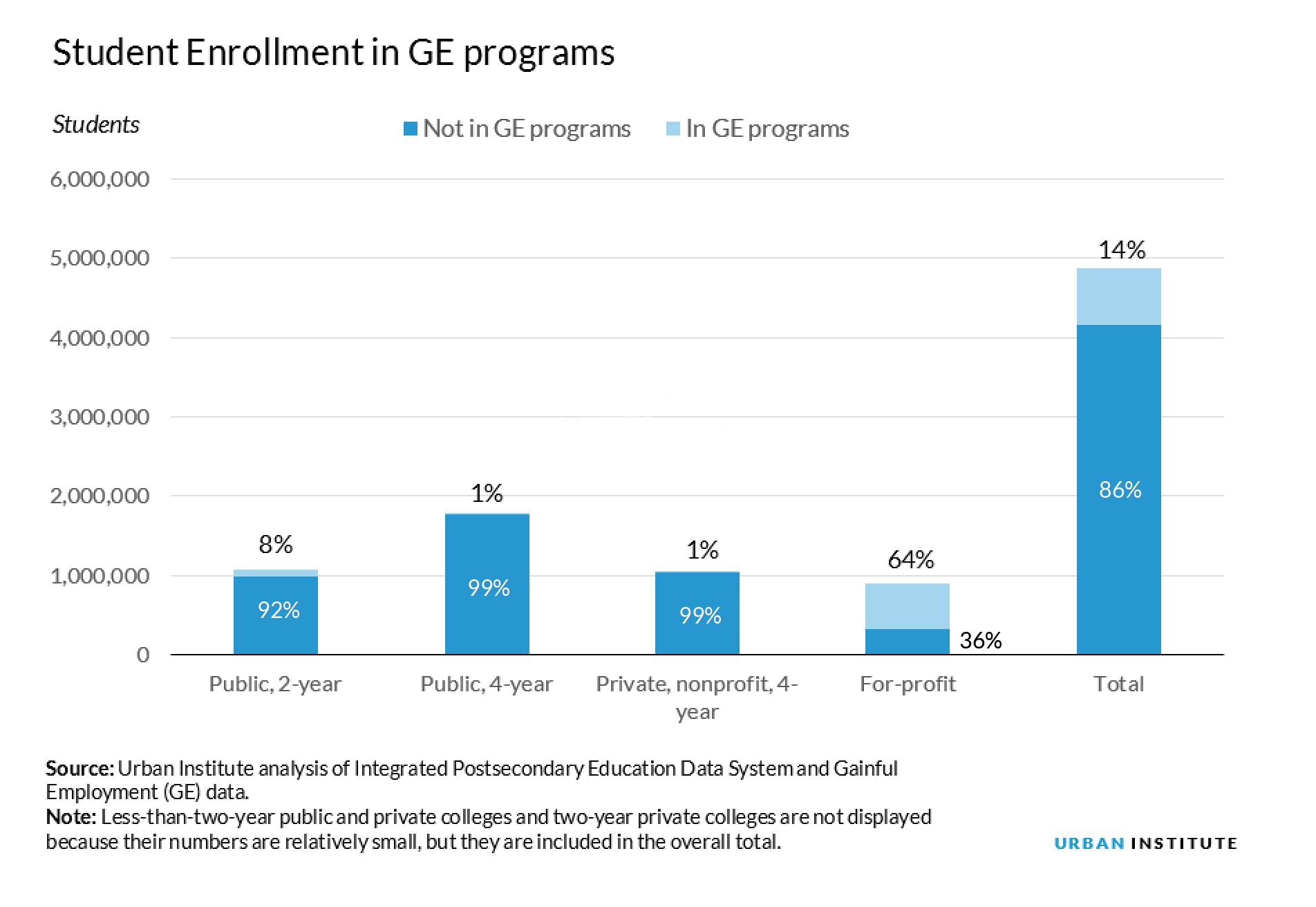

Nevertheless, the federal government still relies on institution-level accountability outside the for-profit sector. We estimate that only 14 percent of postsecondary students are enrolled in programs subject to GE’s program-level regulations. Only 8 percent of community college students and 1 percent of students at public and private nonprofit four-year colleges are subject to GE. Meanwhile, most for-profit students are enrolled in programs that appear in the GE data.

As Congress turns to the reauthorization of the Higher Education Act, policymakers from both sides of the aisle are calling for stronger accountability for colleges. A workable version of program-level accountability applied to all institutions would likely require additional innovations, including a high-quality data system. But the GE experiment so far provides some early evidence of the value of taking a scalpel to individual programs rather than a sledgehammer to entire institutions.

Download the data and methodology here.

— Erica Blom, Matthew M. Chingos and Kristin Blagg

Erica Blom is a research associate in the Education Policy Program at the Urban Institute. Matthew M. Chingos is director of the Urban Institute’s Education Policy Program. Kristin Blagg is a research associate in the Income and Benefits Policy Center at the Urban Institute.

This post originally appeared on UrbanWire.