California’s proposed math curriculum framework has ignited a ferocious debate, touching off a revival of the 1990s math wars and attracting national media attention. Early drafts of the new framework faced a firestorm of criticism, with opponents charging that the guidelines sacrificed accelerated learning for high achievers in a misconceived attempt to promote equity.

The new framework, first released for public comment in 2021, called for all students to take the same math courses through 10th grade, a “detracking” policy that would effectively end the option of 8th graders taking algebra. A petition signed by nearly 6,000 STEM leaders argued that the framework “will have a significant adverse effect on gifted and advanced learners.” Rejecting the framework’s notions of social justice, an open letter with over 1,200 signatories, organized by the Independent Institute, accused the framework of “politicizing K–12 math in a potentially disastrous way” by trying “to build a mathless Brave New World on a foundation of unsound ideology.”

About once every eight years, the state of California convenes a group of math educators to revisit the framework that recommends how math will be taught in the public schools. The current proposal calls for a more conceptual approach toward math instruction, deemphasizing memorization and stressing problem solving and collaboration. After several delays, the framework is undergoing additional edits by the state department of education and is scheduled for consideration by the state board of education for approval sometime in 2023.

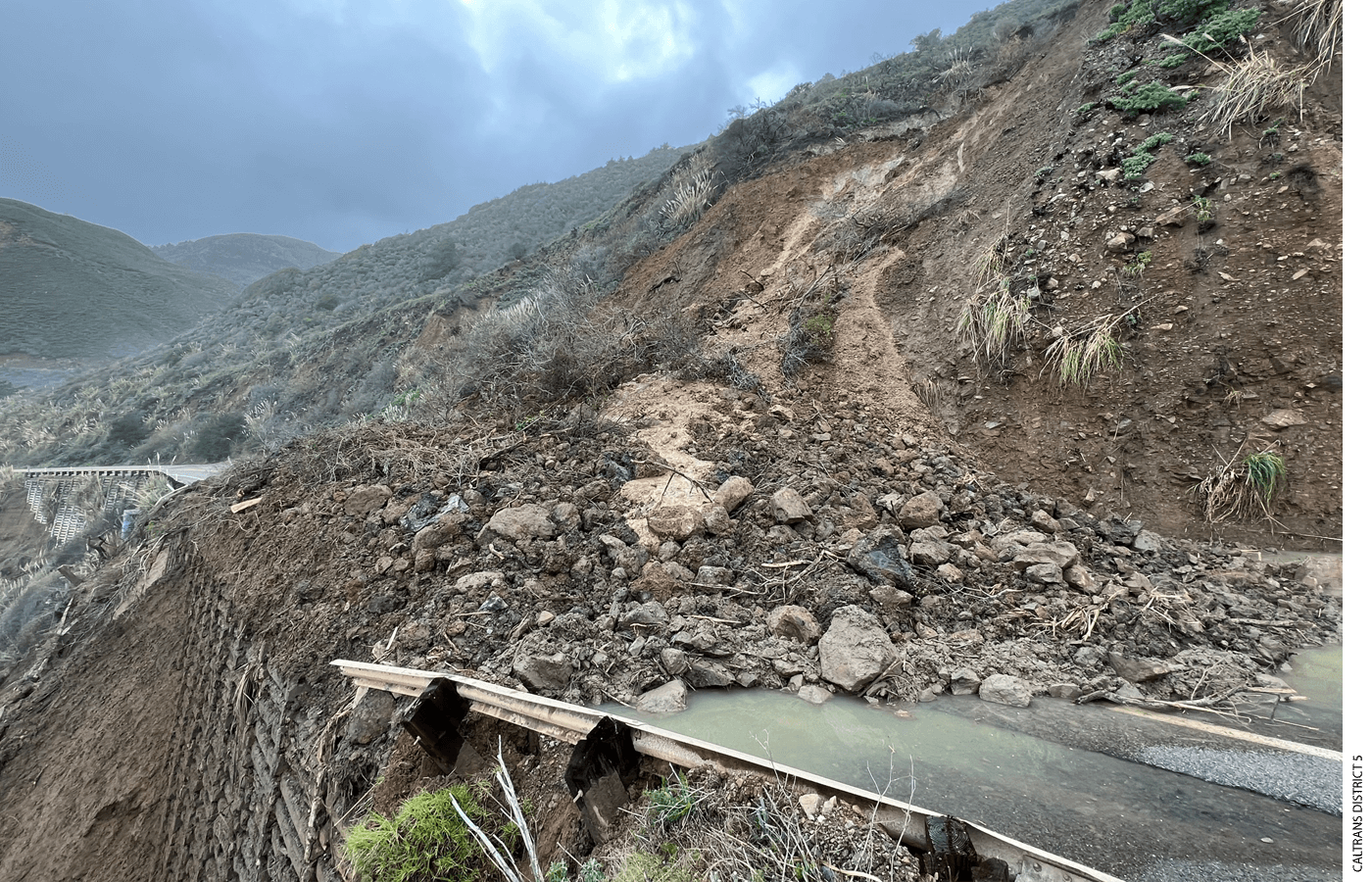

Why should anyone outside of California care? With almost six million public school students, the state constitutes the largest textbook market in the United States. Publishers are likely to cater to that market by producing instructional materials in accord with the state’s preferences. California was ground zero in the debate over K–12 math curriculum in the 1990s, a conflict that eventually spread coast to coast and around the world. A brief history will help set the stage.

Historical Context

Standards define what students are expected to learn—the knowledge, skills, and concepts that every student should master at a given grade level. Frameworks provide guidance for meeting the standards—including advice on curriculum, instruction, and assessments. The battle over the 1992 California state framework, a document admired by math reformers nationwide, started slowly, smoldered for a few years, and then burst into a full-scale, media-enthralling conflict by the end of the decade. That battle ended in 1997 when the math reformers’ opponents, often called math traditionalists, convinced state officials to adopt math standards that rejected the inquiry-based, constructivist philosophy of existing state math policy.

The traditionalists featured a unique coalition of parents and professional mathematicians—scholars in university mathematics departments, not education schools—who were organized via a new tool of political advocacy: the Internet.

The traditionalist standards lasted about a decade. By the end of the aughts, the standards were tarnished by their association with the unpopular No Child Left Behind Act, which mandated that schools show all students scoring at the “proficient” level on state tests by 2014 or face consequences. It was clear that virtually every school in the country would be deemed a failure, No Child Left Behind had plummeted in the public’s favor, and policymakers needed something new. Enter the Common Core State Standards.

The Common Core authors wanted to avoid a repeat of the 1990s math wars, and that meant compromise. Math reformers were satisfied by the standards’ recommendation that procedures (computation), conceptual understanding, and problem solving receive “equal emphasis.” Traditionalists were satisfied by the Common Core requirement that students master basic math facts for addition and multiplication and the standard algorithms (step-by-step computational procedures) for all four operations: addition, subtraction, multiplication, and division.

California is a Common Core state and, for the most part, has avoided the political backlash that many states experienced a few years after the standards’ widespread adoption. The first Common Core–oriented framework, published in 2013, was noncontroversial; however, compromises reflected in the careful wording of some learning objectives led to an unraveling when the framework was revised and presented for public comment in 2021.

Unlike most of the existing commentary on the revised framework, my analysis here focuses on the elementary grades and how the framework addresses two aspects of math: basic facts and standard algorithms. The two topics are longstanding sources of disagreement between math reformers and traditionalists. They were flashpoints in the 1990s math wars, and they are familiar to most parents from the kitchen-table math that comes home from school. In the case of the California framework, these two topics illustrate how reformers have diverged from the state’s content standards, ignored the best research on teaching and learning, and relied on questionable research to justify the framework’s approach.

Addition and Multiplication Facts

Fluency in mathematics usually refers to students’ ability to perform calculations quickly and accurately. The Common Core mathematics standards call for students to know addition and multiplication facts “from memory,” and the California math standards expect the same. The task of knowing basic facts in subtraction and division is made easier by those operations being the inverse, respectively, of addition and multiplication. If one knows that 5 + 6 = 11, then it logically follows that 11 – 6 = 5; and if 8 × 9 = 72, then surely 72 ÷ 9 = 8.

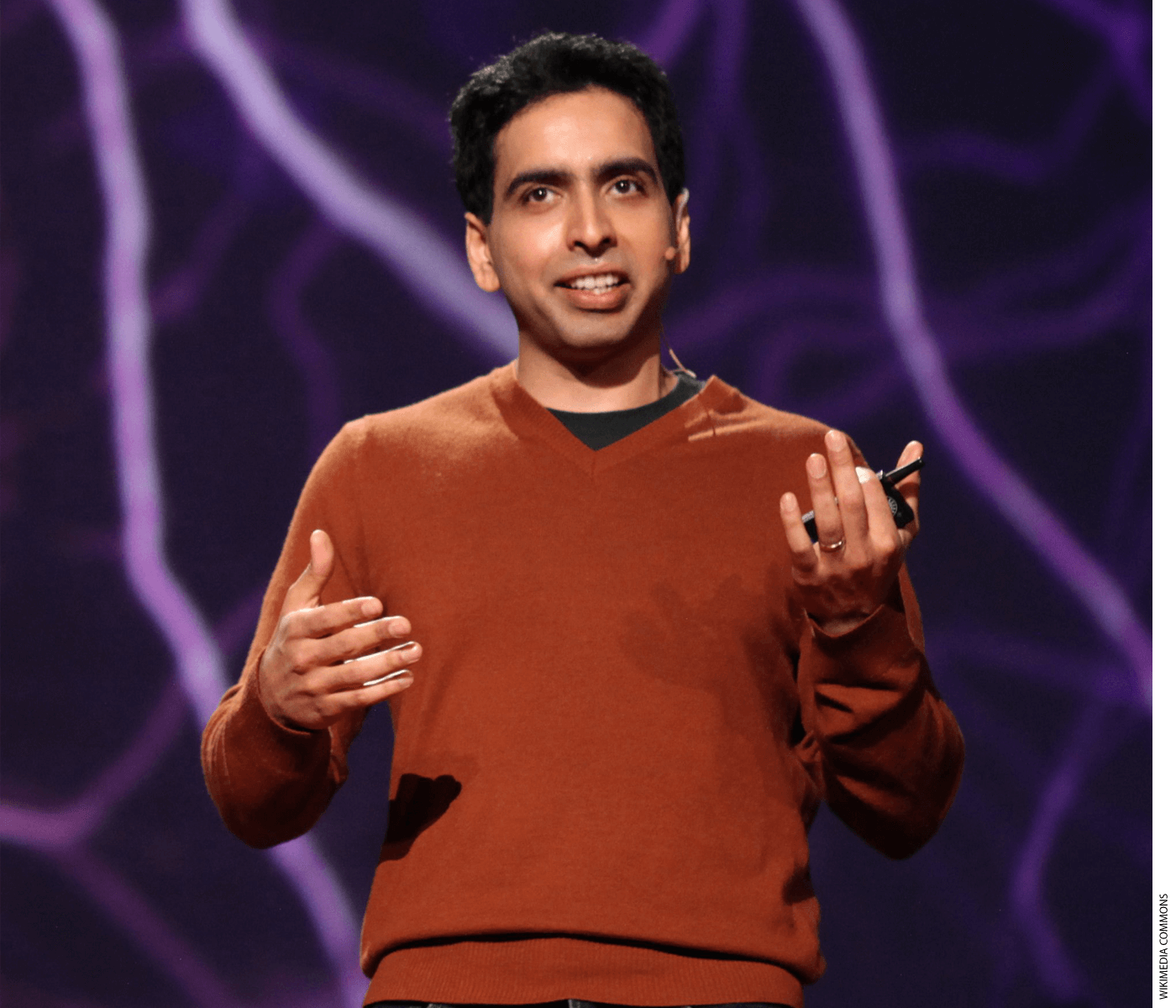

Cognitive psychologists have long pointed out the value of automaticity with number facts—the ability to retrieve facts immediately from long-term memory without even thinking about them. Working memory is limited; long-term memory is vast. In that way, math facts are to math as phonics is to reading. If these facts are learned and stored in long-term memory, they can be retrieved effortlessly when the student is tackling more-complex cognitive tasks. In a recent interview, Sal Khan, founder of Khan Academy, observed, “I visited a school in the Bronx a few months ago, and they were working on exponent properties like: two cubed, to the seventh power. So, you multiply the exponents, and it would be two to [the] 21st power. But the kids would get out the calculator to find out three times seven.” Even though they knew how to solve the exponent exercise itself, “the fluency gap was adding to the cognitive load, taking more time, and making things much more complex.”
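Written out, the exercise Khan describes looks like this. The general symbols a, m, and n are notation added here for illustration; they do not appear in the interview.

```latex
(a^{m})^{n} = a^{m \cdot n}, \qquad (2^{3})^{7} = 2^{3 \times 7} = 2^{21}
```

Beyond the exponent rule itself, the only arithmetic the exercise requires is the single-digit fact 3 × 7 = 21, which is exactly the step that sent students to the calculator.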

California’s proposed framework mentions the words “memorize” and “memorization” 27 times, but all in a negative or downplaying way. For example, the framework states: “In the past, fluency has sometimes been equated with speed, which may account for the common, but counterproductive, use of timed tests for practicing facts. . . . Fluency is more than the memorization of facts or procedures, and more than understanding and having the ability to use one procedure for a given situation.” (All framework quotations here are from the most recent public version, a draft presented for the second field review, a 60-day public-comment period in 2022.)

One can find the intellectual origins of the framework on the website of Youcubed, a Stanford University math research center led by Jo Boaler, who is a math education professor at Stanford and member of the framework writing committee. Youcubed is cited 28 times in the framework, including Boaler’s essay on that site, “Fluency without Fear: Research Evidence on the Best Ways to Learn Math Facts.” The framework cites Boaler an additional 48 times.

The framework’s attempt to divorce fluency from speed (and from memory retrieval) leads it to distort the state’s math standards. “The acquisition of fluency with multiplication facts begins in third grade and development continues in grades four and five,” the framework states. Later it says, “Reaching fluency with multiplication and division within 100 represents a major portion of upper elementary grade students’ work.”

Both statements are inaccurate. The state’s 3rd-grade standard is that students will know multiplication facts “from memory,” not that they will begin fluency work and continue development in later grades. After 3rd grade, the standards do not mention multiplication facts again. In 4th grade, for example, the standards call for fluency with multidigit multiplication, a stipulation embedded within “understanding of place value to 1,000,000.” Students lacking automaticity with basic multiplication facts will be stopped cold. Parents who are concerned that their 4th graders don’t know the times tables, let alone how to multiply multidigit numbers, will be directed to the framework to justify children falling behind the standards’ expectations.

After the release of Common Core, the authors of the math standards published “Progressions” documents that fleshed out the standards in greater detail. The proposed framework notes approvingly, “The Progressions for the Common Core State Standards documents are a rich resource; they (McCallum, Daro, and Zimba, 2013) describe how students develop mathematical understanding from kindergarten through grade twelve.” But the Progressions contradict the framework on fluency. They state: “The word fluent is used in the Standards to mean ‘fast and accurate.’ Fluency in each grade involves a mixture of just knowing some answers, knowing some answers from patterns (e.g., ‘adding 0 yields the same number’), and knowing some answers from the use of strategies.”

Students progress toward fluency in a three-stage process: use strategies, apply patterns, and know from memory. Students who have attained automaticity with basic facts have reached the top step and just know them, but some students may take longer to commit facts to memory. As retrieval takes over, the possibility of error declines. Students who know 7 × 7 = 49 but must “count on” by 7 to confirm that 8 × 7 = 56 are vulnerable to errors to which students who “just know” that 7 × 8 = 56 are impervious. In terms of speed, the analogous process in reading is decoding text. Students who “just know” certain words because they have read them frequently are more fluent readers than students who must pause to sound out those words phonetically. This echoes the point Sal Khan made about students who know how to work with exponents raised to another power but still need a calculator for simple multiplication facts.

Standard Algorithms

Algorithms are methods for solving multi-digit calculations. Standard algorithms are simply those used conventionally. Learning the standard algorithms of addition, subtraction, multiplication, and division allows students to extend single-digit knowledge to multi-digit computation, while being mindful of place value and the possible need for regrouping.

Barry Garelick, a math teacher and critic of Common Core, posted a series of blog posts about the standards and asked, “Can one teach only the standard algorithm and meet the Common Core State Standards?” Jason Zimba, who is one of three authors of the Common Core math standards, responded:

“Provided the standards as a whole are being met, I would say that the answer to this question is yes. The basic reason for this is that the standard algorithm is ‘based on place value [and] properties of operations.’ That means it qualifies. In short, the Common Core requires the standard algorithm; additional algorithms aren’t named, and they aren’t required.”

Zimba provides a table showing how exclusively teaching the standard algorithms of addition and subtraction could be accomplished, presented not as a recommendation, but as “one way it could be done.” Zimba’s approach begins in 1st grade, when students, after receiving instruction in place value, learn the proper way to line up numbers vertically. He adds, “Whatever one thinks of the details in the table, I would think that if the culminating standard in grade 4 is realistically to be met, then one likely wants to introduce the standard algorithm pretty early in the addition and subtraction progression.”

Note the term “culminating standard.” That implies the endpoint of development. The framework, however, interprets 4th grade as the grade of first exposure, not the culmination—and extends that misinterpretation to all four operations with whole numbers. “The progression of instruction in standard algorithms begins with the standard algorithm for addition and subtraction in grade four; multiplication is addressed in grade five; the introduction of the standard algorithm for whole number division occurs in grade six,” the framework reads.

This advice would place California 6th graders years behind the rest of the world in learning algorithms. In Singapore, for example, division of whole numbers up to 10,000 is taught in 3rd grade. The justification for delay stated in the framework is: “Students who use invented strategies before learning standard algorithms understand base-ten concepts more fully and are better able to apply their understanding in new situations than students who learn standard algorithms first (Carpenter et al., 1997).”

The 1997 Carpenter study, however, is a poor reference for the framework’s assertion. That study’s authors declare, “Instruction was not a focus of this study, and the study says very little about how students actually learned to use invented strategies.” In addition, the study sample was not scientifically selected to be representative, and the authors warn, “The characterization of patterns of development observed in this study cannot be generalized to all students.”

As for the Progressions documents mentioned above, they do not prohibit learning standard algorithms before the grade level of the “culminating expectation.” Consistent with Jason Zimba’s approach, forms of the standard addition and subtraction algorithms are presented as 2nd grade topics, two years before students are required to demonstrate fluency.

The selective use of evidence extends beyond the examples above, as is clear from the research that is cited—and not cited—by the framework.

Research Cited by the Framework

On June 1, 2021, Jo Boaler issued a tweet asserting, “This 4 week camp increases student achievement by the equivalent of 2.8 years.” The tweet included information on a two-day workshop at Stanford for educators interested in holding a Youcubed-inspired summer camp. The Youcubed website promotes the summer camp with the same claim of additional years of learning.

Where did the 2.8 years come from? The first Youcubed math camp was held on the Stanford campus in 2015 with 83 students in 6th and 7th grades. For 18 days, students spent mornings working on math problems and afternoons touring the campus in small groups, going on scavenger hunts, and taking photographs. The students also received instruction targeting their mathematical mindsets, learning that there is no such thing as “math people” and “nonmath people,” that being fast at math is not important, and that making mistakes and struggling, along with thinking visually and making connections between mathematical representations, promote brain growth. Big ideas, open-ended tasks, collaborative problem solving, lessons on mindset, and inquiry-based teaching are all foundational to the framework. The camp thus offers a test run of the proposed framework, which asserts that the camps “significantly increase achievement in a short period of time.”

The claim of growth is based on an assessment the researchers administered on the first and last days of the camp. The test consisted of four open-ended problems, called “tasks,” scored by a rubric, with both the problems and the rubric created by the Mathematical Assessment Research Service, or MARS. Students were given four tasks on the first day and the same four tasks on the final day of camp. An effect size of 0.91 was calculated by dividing the difference between the group’s pre- and post-test average scores by the pre-test standard deviation. How this effect size was converted into years of learning is not explained, but researchers usually do this based on typical rates of achievement growth among students taking standardized math tests in consecutive years.
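The effect-size calculation described above can be written compactly. The symbols are notation introduced here for illustration, not the study’s own: the two x-bar terms are the group’s average pre- and post-test scores, and s is the standard deviation of the pre-test scores.

```latex
d = \frac{\bar{x}_{\text{post}} - \bar{x}_{\text{pre}}}{s_{\text{pre}}} = 0.91
```

Because the calculation involves only the campers’ own before-and-after scores, d describes the raw change in standard-deviation units; it does not by itself separate the effect of the camp from other sources of change between the two test dates.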

In 2019, the Youcubed summer-camp program went national. An in-house study was conducted involving 10 school districts in five states where the camps served about 900 students in total and ranged from 10 to 28 days. The study concluded, “The average gain score for participating students across all sites was 0.52 standard deviation units (SD), equivalent to 1.6 years of growth in math.”
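Although neither study explains its conversion, the reported figures allow a rough check of what rate of annual growth the “years of learning” claims assume. The sketch below is back-of-the-envelope arithmetic on the numbers quoted above, not the researchers’ documented method; the function and variable names are hypothetical.

```python
# Figures as reported in the article:
# (effect size in SD units, claimed years of growth)
reported = {
    "2015 Stanford camp": (0.91, 2.8),
    "2019 multi-district study": (0.52, 1.6),
}


def implied_sd_per_year(effect_size_sd, claimed_years):
    """SD units of growth per year implied by a years-of-learning claim."""
    return effect_size_sd / claimed_years


# Compute the implied annual growth rate behind each claim.
implied = {
    name: implied_sd_per_year(sd, years)
    for name, (sd, years) in reported.items()
}

for name, rate in implied.items():
    print(f"{name}: {rate:.3f} SD per year")
```

Both claims work out to the same implied rate, a bit under one-third of a standard deviation per year, which suggests a single fixed benchmark was applied to both effect sizes. What that benchmark was, and whether it fits the MARS tasks, is left unstated.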

Let’s consider these reported gains in the context of recent NAEP math scores. The 2022 scores triggered nationwide concern as 4th graders’ scores fell to 236 scale score points from 241 in 2019, a decline of 0.16 standard deviations. Eighth graders’ scores declined to 274 from 282, equivalent to 0.21 standard deviations. Headlines proclaimed that two decades of learning had been wiped out by two years of pandemic. A McKinsey report estimated that NAEP scores might not return to 2019 levels until 2036.

If the Youcubed gains are to be believed, all pandemic learning losses can be restored, and additional gains achieved, by two to four weeks of summer school.
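The comparison can be made concrete with the figures already cited. This is a minimal sketch that assumes standard-deviation units from the MARS tasks and from NAEP can be set side by side, which is itself a loose assumption because the two tests differ; the names used are hypothetical.

```python
# NAEP figures as reported in the article:
# (2019 score, 2022 score, decline in SD units)
naep = {
    "grade 4": (241, 236, 0.16),
    "grade 8": (282, 274, 0.21),
}

# Average gain reported by the in-house 2019 Youcubed study, in SD units.
YOUCUBED_2019_GAIN_SD = 0.52


def gain_to_loss_ratio(gain_sd, loss_sd):
    """How many times larger the claimed gain is than the measured loss."""
    return gain_sd / loss_sd


ratios = {}
for grade, (before, after, loss_sd) in naep.items():
    ratios[grade] = gain_to_loss_ratio(YOUCUBED_2019_GAIN_SD, loss_sd)
    print(
        f"{grade}: lost {before - after} points ({loss_sd} SD); "
        f"claimed camp gain is {ratios[grade]:.2f} times that loss"
    )
```

On these numbers, the gain claimed for a camp lasting a few weeks is roughly three times the 4th-grade pandemic decline and about two and a half times the 8th-grade decline.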

There are several reasons to doubt the study’s conclusions, the most notable of which is the lack of a comparison group to gauge the program’s effects as measured by the MARS outcome. School districts recruited students for the camps. No data are provided on the number of students approached, the number who refused, and the number who accepted but didn’t show up. The final group of participating students comprises the study’s treatment group. The claim that these students experienced 1.6 years of growth in math is based solely on the change in students’ scores on the MARS tasks between the first and last day of the program.

This is especially problematic because the researchers gave students the same four MARS tasks before and after the program. Using the exact same instrument to test and re-test students within four weeks could inflate post-treatment scores, especially if the students worked on similar problems during the camp. No data are provided confirming that the MARS tasks are suitable, in terms of technical quality, for use in estimating the summer camp’s effect. Nor do the authors demonstrate that the tasks are representative of the full range of math content that students are expected to master, which is essential to justify reporting students’ progress in terms of years of learning. Even the grade level of the tasks is unknown, although camp attendees spanned grades 5 to 7, and MARS offers three levels of tasks (novice, apprentice, and expert).

The study’s problems extend to its treatment of attrition from the treatment sample. For one of the participating school districts (#2), 47 students are reported enrolled, but the camp produces 234 test scores—a mystery that goes unexplained. When this district is omitted, the remaining nine districts are lacking pre- and post-test scores for about one-third of enrolled students, who presumably were absent on either the first or last day. The study reports attendance rates in each district as the percentage of students who attended 75 percent of the days or more, with the median district registering 84 percent. Four districts reported less than 70 percent of students meeting that attendance threshold. A conventional metric for attendance during a school year is that students who miss 10 percent of days are “chronically absent.” By that standard, attendance at the camps appears spotty at best, and in four of the 10 camps, quite poor.

These are serious weaknesses. Just as the camps serve as prototypes of the framework’s ideas about good curriculum and instruction, the studies of Youcubed summer camps are illustrative of what the framework considers compelling research. The studies do not meet minimal standards of causal evidence.

Research Omitted by the Framework

It is also informative to look at research that is not included in the California framework.

The What Works Clearinghouse, housed within the federal Institute of Education Sciences, publishes practice guides for educators. The guides aim to provide concise summaries of high-quality research on various topics. A panel of experts conducts a search of the research literature and screens studies for quality, following strict protocols. Experimental and quasi-experimental studies are favored because of their ability to estimate causal effects. The panel summarizes the results, linking each recommendation to supporting studies. The practice guides present the best scientifically sound evidence on causal relationships in teaching and learning.

How many of the studies cited in the practice guides are also cited in the framework? To find out, I searched the framework for citations to the studies cited by the four practice guides most relevant to K–12 math instruction. Here are the results:

Assisting Students Struggling with Mathematics: Intervention in the Elementary Grades (2021): 0 out of 43 studies

Teaching Strategies for Improving Algebra Knowledge in Middle and High School Students (2015, revised 2019): 0 out of 12 studies

Improving Mathematical Problem Solving in Grades 4 Through 8 (2012): 0 out of 37 studies

Developing Effective Fractions Instruction for Kindergarten Through 8th Grade (2010): 1 out of 22 studies

Except for one study, involving teaching the number line to young children using games, the framework ignores the best research on K–12 mathematics. How could this happen?
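Tallying the four counts gives a sense of scale. The sketch below simply sums the figures reported above; it assumes no study is cited by more than one guide, so the total of distinct studies could be somewhat lower. Guide titles are abbreviated, and the variable names are hypothetical.

```python
# (practice guide, studies also cited in the framework, studies cited in the guide)
# Counts are the ones reported in the article.
guides = [
    ("Assisting Students Struggling with Mathematics", 0, 43),
    ("Teaching Strategies for Improving Algebra Knowledge", 0, 12),
    ("Improving Mathematical Problem Solving in Grades 4 Through 8", 0, 37),
    ("Developing Effective Fractions Instruction", 1, 22),
]

# Sum across guides, treating every listed study as distinct (an assumption).
cited = sum(in_framework for _, in_framework, _ in guides)
total = sum(in_guide for _, _, in_guide in guides)
share = cited / total

print(f"{cited} of {total} studies cited ({share:.1%})")
```

Under that assumption, the framework cites one of 114 studies, or less than 1 percent of the research base behind the four guides.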

One powerful clue: key recommendations in the practice guides directly refute the framework. Timed activities with basic facts, for example, are recommended to increase fluency, with the “Struggling Students” guide declaring “the expert panel assigned a strong level [emphasis original] of evidence to this recommendation based on 27 studies of the effectiveness of activities to support automatic retrieval of basic facts and fluid performance of other tasks involved in solving complex problems.” Calls for explicit or systematic instruction in the guides fly in the face of the inquiry methods endorsed in the framework. Worked examples, in which teachers guide students step by step from problem to solution, are encouraged in the guides but viewed skeptically by the framework for not allowing productive struggle.

Bumpy Road Ahead

The proposed California Math Framework not only ignores key expectations of the state’s math standards, but it also distorts or redefines them to serve a reform agenda. The standards call for students to know “from memory” basic addition facts by the end of 2nd grade and multiplication facts by the end of 3rd grade. But the framework refers to developing fluency with basic facts as a major topic of 4th through 6th grades. Fluency is redefined to disregard speed. Instruction on standard algorithms is delayed by interpreting the grades for culminating standards as the grades in which standard algorithms are first encountered. California’s students will be taught the standard algorithm for division years after the rest of the world.

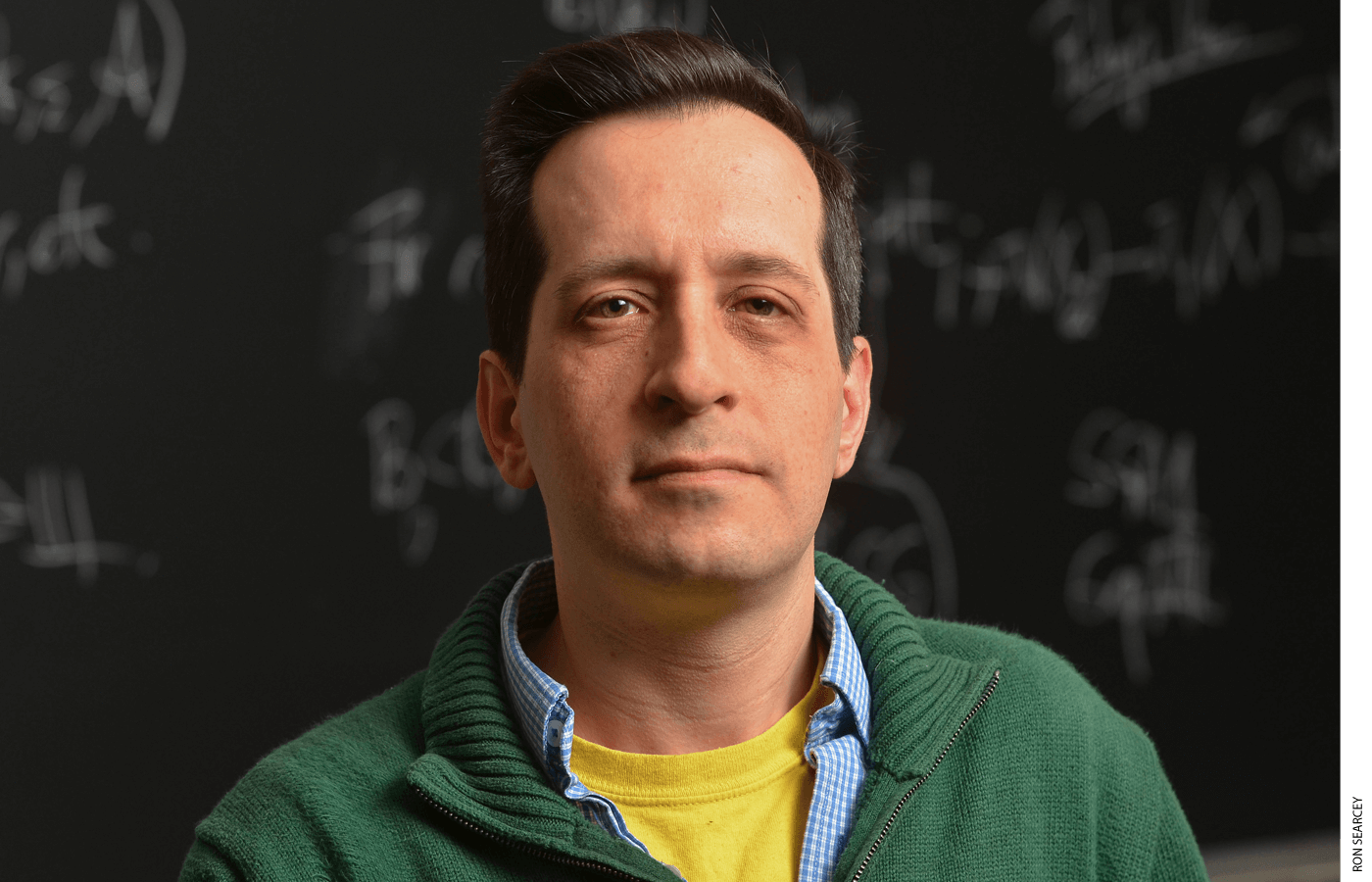

The framework’s authors claim to base their recommendations on research, but it is unclear how—or even if—they conducted a literature search or what criteria they used to identify high-quality studies. The document serves as a manifesto for K–12 math reform, citing sources that support its arguments and ignoring those that do not, even if the omitted research includes the best scholarship on teaching and learning mathematics. Brian Conrad, professor of mathematics at Stanford University, has analyzed the framework’s citations and documented many instances where the original findings of studies were distorted. In some cases, the papers’ conclusions were the opposite of those presented in the framework.

The pandemic took a toll on math learning. To return to a path of achievement will require the effort of teachers, parents, and students. Unfortunately, if the state adopts the proposed framework in its current form, the document will offer little assistance in tackling the hard work ahead.

Tom Loveless, a former 6th-grade teacher and Harvard public policy professor, is an expert on student achievement, education policy, and reform in K–12 schools. He also was a member of the National Math Advisory Panel and U.S. representative to the General Assembly, International Association for the Evaluation of Educational Achievement, 2004–2012.

This article appeared in the Fall 2023 issue of Education Next. Suggested citation format:

Loveless, T. (2023). California’s New Math Framework Doesn’t Add Up: It would place Golden State 6th graders years behind the rest of the world —and could eventually skew education in the rest of the U.S., too. Education Next, 23(4), 36-42.
