Under the Obama administration, the federal government spent over $7 billion in an effort to turn around failing schools via the School Improvement Grant (SIG) program.

Unfortunately, due to the flawed research design of the official federal evaluation of the effort, we don’t know if it worked.

But you wouldn’t know that from reading the study, where the authors claim:

“Overall, across all grades, we found that implementing any SIG-funded model had no significant impacts on math or reading test scores, high school graduation, or college enrollment.”

With this strong language in the study’s executive summary, it’s not surprising that Emma Brown, a thoughtful reporter at the Washington Post, covered the study by doubling down on the study’s headline:

“One of the Obama administration’s signature efforts in education, which pumped billions of federal dollars into overhauling the nation’s worst schools, failed to produce meaningful results, according to a federal analysis.”

Other commentators have echoed these claims, including on this blog.

Ultimately, I think everyone, study authors and commentators alike, is on shaky ground in saying SIG didn’t work.

I Thought the School Improvement Program was a Bad Idea

My defense of the SIG program (relative to the authors’ claims) is surely not ideological or partisan, as I didn’t think SIG would work.

I’m highly skeptical of most district turnaround efforts, and I believe that chartering is a better way to increase the educational opportunity of children attending failing schools.

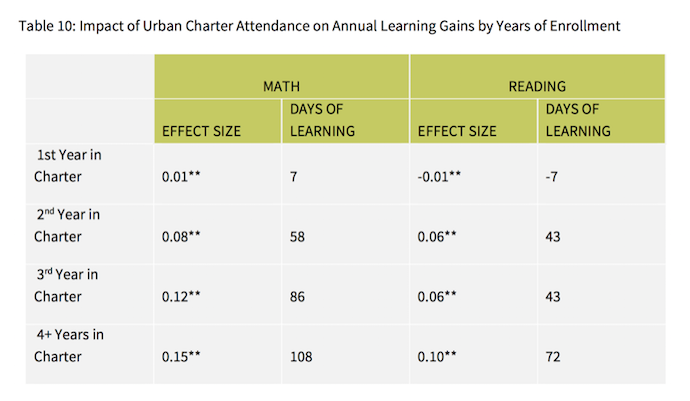

We have rigorous statistical evidence from Stanford’s Center for Research on Education Outcomes (CREDO) that urban charter schools outperform traditional schools (the table below comes from their 2015 study of charters in 41 urban regions), and I believe this should be our nation’s preferred school improvement strategy.

Given these past results of urban charters, I would have preferred that SIG money be used on high-performing charter growth rather than district turnaround efforts.

The SIG Study is Underpowered

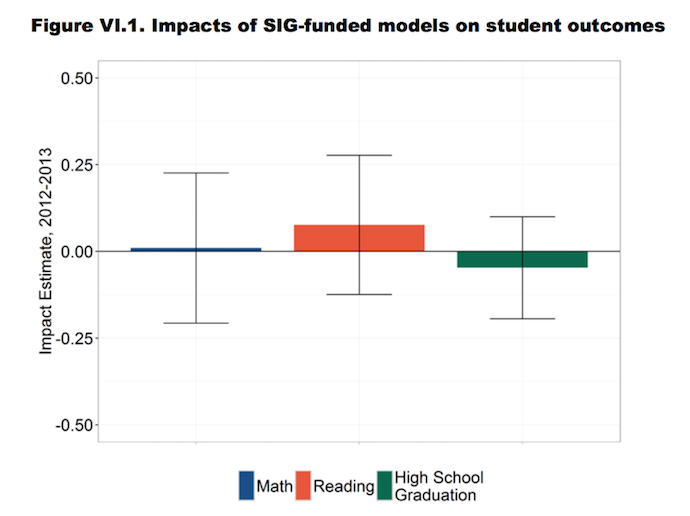

In claiming that SIG had no effects on academic achievement, the study’s authors summarized their main results in this chart:

In detailing these results, the authors note:

“The smallest impacts our benchmark approach could detect ranged from 0.19 to 0.22 standard deviations for test score outcomes, from 0.15 to 0.26 standard deviations for high school graduation, and from 0.27 to 0.39 standard deviations for college enrollment.”

Now, look back up at the urban charter effects and you’ll see that the three-year results in math are about at the floor of what the SIG study could detect, and the results in reading are well below what the SIG study could detect (the SIG study also tracked children for three years).

So even if SIG achieved the same effects as urban charter schools, the study may not have been able to detect these effects.

It seems pretty unfair for charter (or voucher) champions to call SIG a failure when SIG might very well have achieved nearly the same results as urban charter schools.

Moreover, we should probably be satisfied with lower results for SIG, given that turnaround work is much more difficult than new-start charter growth. As such, the study should have been designed to detect effects of roughly 0.1 standard deviations.
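To make the power problem concrete, here is a rough back-of-the-envelope sketch. It assumes the study’s “smallest impacts our benchmark approach could detect” follow the common convention of 80 percent power at a two-sided 5 percent significance level, and that standard errors shrink with the square root of the sample size. Both are my assumptions, not details taken from the study, and the function names are purely illustrative.

```python
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution

def power(true_effect, mde, alpha=0.05, target_power=0.80):
    """Chance of a statistically significant estimate, given the study's MDE."""
    z_crit = Z.inv_cdf(1 - alpha / 2)
    # Assumed convention: MDE = (z_crit + z_power) * standard error
    se = mde / (z_crit + Z.inv_cdf(target_power))
    shift = true_effect / se
    # Two-sided test: significant in either direction
    return Z.cdf(shift - z_crit) + Z.cdf(-shift - z_crit)

def sample_multiplier(mde, target_effect):
    """How many times larger the sample must be, assuming SE shrinks as 1/sqrt(n)."""
    return (mde / target_effect) ** 2

# Test-score MDEs quoted from the study: 0.19 to 0.22 standard deviations
for mde in (0.19, 0.22):
    print(f"MDE {mde}: power at 0.1 SD = {power(0.10, mde):.2f}, "
          f"sample needed = {sample_multiplier(mde, 0.10):.1f}x")
```

Under those assumptions, a true effect of 0.1 standard deviations would show up as statistically significant only about a quarter to a third of the time, and detecting it reliably would take a sample roughly 3.6 to 4.8 times larger.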

In using a regression-discontinuity design (comparing schools that received the SIG treatment to slightly higher-performing schools that did not), the authors could not assemble a sample large enough to detect positive effects of a size that, in my mind, could be considered a success.

The federal government should have either randomized which SIG-eligible schools received funding for the SIG treatment, or the authors should have used a quasi-experimental student-based methodology that allowed for a larger sample (similar to the methodology CREDO uses).

I Still Think SIG Was Wasteful

Until I see results that show that SIG worked, I won’t change my prior belief that SIG funds would have been better spent on high-quality charter growth.

Moreover, neither the existing research base nor theory warranted spending $7 billion on district turnarounds, so even had the intervention worked, I still would consider it a lucky outcome on an ill-advised bet.

But I also won’t claim that SIG failed.

Due to poor research design, we simply don’t know if that’s true.

The study authors, reporters, and commentators should walk back their strong claims on SIG’s failures.

At the same time, we should all keep advocating for the size of government investments to be in line with the existing evidence base.

If we have no reason to believe something will work, we should not spend $7 billion.

Too often, moonshots garner more status than they deserve.

—Neerav Kingsland

Neerav Kingsland is Senior Education Fellow at the Laura and John Arnold Foundation.