Arguably, the most important development in K–12 education over the past decade has been the emergence of a growing number of urban schools that have been convincingly shown to have dramatic positive effects on the achievement of disadvantaged students. Those with the strongest evidence of success are oversubscribed charter schools. These schools hold admissions lotteries, which enable researchers to compare the subsequent test-score performance of students who enroll to that of similar students not given the same opportunity. Through careful study of the most effective of these charter schools, researchers have identified common practices—a longer school day and year, regular coaching to improve teacher performance, routine use of data to inform instruction, a culture of high expectations—that have yielded promising results when replicated in district schools.

We have only a limited understanding of how these practices translate into higher academic achievement, however. It may be that attending a school that employs them enhances those basic cognitive skills—such as processing speed, working memory, and reasoning—that research in psychological science has shown contribute to success in the classroom and later in life. Do schools that succeed in raising test scores do so by improving their students’ underlying cognitive capacities? Or do effective schools help their students achieve at higher levels than would be predicted based on measures of cognitive ability alone?

To address this question, we draw on unique data from a sample of more than 1,300 8th graders attending 32 public schools in Boston, including traditional public schools, exam schools that admit only the city’s most academically talented students, and charter schools. In addition to the state test scores typically used by education researchers, we also gathered several measures of the cognitive abilities psychologists refer to as fluid cognitive skills. Our data confirm that the latter are powerful predictors of students’ academic performance as measured by standardized tests.

Yet while the schools in our sample vary widely in their success in raising test scores, with oversubscribed charter schools in particular demonstrating clear positive results, we find that attending a school that produces strong test-score gains does not improve students’ fluid cognitive skills. Put differently, our evidence indicates that effective schools help their students achieve at higher levels than expected based on their fluid cognitive skills. It also suggests that developing school-based strategies to raise those skills could be an important next step in helping schools to provide even greater benefits for their students.

Crystallized Knowledge and Fluid Cognitive Skills

Despite decades of relying on standardized test scores to assess and guide education policy and practice, surprisingly little work has been done to connect these measures of learning with the measures developed over a century of research by cognitive psychologists studying individual differences in cognition. Psychologists now consider cognitive ability (few dare say “intelligence” anymore) to have two primary components: crystallized knowledge and fluid cognitive skills. Crystallized knowledge comprises acquired knowledge such as vocabulary and arithmetic, while fluid skills are the abstract-reasoning capabilities needed to solve novel problems (such as the ability to identify patterns and make extrapolations) independent of how much factual knowledge has been acquired. The terms were coined by the late psychologist Raymond Cattell, who first distinguished two types of intelligence. Cattell noted that one “has the ‘fluid’ quality of being directable at almost any problem,” while the other “is invested in particular areas of crystallized skills which can be upset individually without affecting others.”

Hundreds of studies show that, at any point in time, the two are highly correlated: people with strong fluid cognitive skills are at an advantage when it comes to accumulating the kinds of crystallized knowledge assessed by most standardized tests.

That these capabilities are nonetheless distinct is best illustrated by the fact that fluid cognitive skills decline with age starting even in one’s twenties, while crystallized knowledge tends to rise over the decades, in some cases peaking as late as one’s seventies. In an influential 2002 study involving people ages 20 to 92, University of Texas at Dallas psychologist Denise Park and colleagues found that the fluid cognitive skills of participants in their twenties exceeded those of participants in their seventies by as much as 1.5 standard deviations. In other words, more than 90 percent of participants in their twenties had higher fluid cognitive skills than did typical participants in their seventies. Those in their seventies nonetheless scored higher than participants in any other age range on tests of vocabulary, a key component of crystallized knowledge.
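
The step from a 1.5 standard deviation gap to "more than 90 percent" can be checked with a short calculation. The sketch below assumes, purely for illustration, that scores in both age groups are normally distributed with the same spread; the function name is hypothetical and nothing here comes from the original study's code.

```python
from statistics import NormalDist

def share_above_typical(gap_in_sd: float) -> float:
    """Share of the higher-scoring group that exceeds the other group's mean.

    Assumes both groups are normal with equal standard deviations
    (an illustrative assumption, not a claim about the study's data).
    """
    return NormalDist().cdf(gap_in_sd)

# A gap of 1.5 standard deviations, the upper bound cited in the text
share = share_above_typical(1.5)
print(f"{share:.1%}")  # about 93 percent
```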

At a more fine-grained level, cognitive psychologists have identified multiple aspects of fluid cognition, including processing speed (how efficiently information can be processed), working memory (how much information can be simultaneously processed and maintained in mind), and fluid reasoning (how well novel problems can be solved). Longitudinal studies tracking individuals from late childhood through young adulthood indicate that gains in processing speed support gains in working memory capacity that, in turn, support fluid reasoning. Each of these abilities has been shown to be associated with academic performance, suggesting that they promote or constrain learning in school.

The strength of the relationship between fluid cognitive skills and academic performance also suggests that schools that are particularly effective in improving standardized test scores may do so by improving fluid cognition along one or more of these dimensions. This is what our research sought to explore.

Data and Sample

We gathered the data for our study during the spring of 2011 from 32 of the 49 public schools in Boston that serve 8th-grade students. The schools that agreed to participate in the study included 22 open-enrollment district schools, five oversubscribed charter schools, two exam schools to which students are admitted based on their grades and standardized test scores, and three charter schools that were not oversubscribed at the time the 8th-grade students in our study were admitted. Boston’s oversubscribed charter schools are of particular interest, as multiple studies have exploited the lottery admissions process to document the schools’ effectiveness in raising student test scores (see “Boston and the Charter School Cap,” features, Winter 2014).

Within those schools, we collected data on all students for whom we obtained parental consent for participation and who were in attendance on the day we collected data. These 1,367 students represent 43 percent of all 8th-grade students attending public schools in Boston and 64 percent of the students in participating schools. Seventy-seven percent of the students in our sample are from low-income families, 38 percent are African American, and 39 percent are Hispanic, in each case closely matching the demographic composition of all 8th-grade students attending public schools in the city and 8th graders attending the same schools.

The fluid cognitive skills we measured for each student included processing speed, working memory, and fluid reasoning. For processing speed, students were asked to translate numbers into corresponding symbols using a number-symbol key, and to indicate as quickly as possible under a time constraint whether either of two symbols on the left side of a page matched any of five symbols on the right side. For working memory, students viewed an array of blue circles, blue triangles, and red circles, and were instructed to count the number of blue circles within 4.5 seconds. After viewing between one and six arrays, they were prompted to record the number of blue circles contained in each. Finally, the fluid-reasoning task required students to choose which of six pictures completed the missing piece of a series of puzzles that became progressively more difficult. Because these three measures are closely related in theory and were positively correlated among the students in our sample, we also averaged them to create a summary measure of students’ fluid cognitive ability.
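
One plausible way to build such a summary measure is sketched below. It assumes each task is first converted to z-scores so that tasks on different scales count equally; the article says only that the measures were averaged, so the standardization step, the function names, and the made-up scores are all assumptions.

```python
from statistics import mean, pstdev

def z_scores(values):
    """Rescale raw scores to mean 0 and standard deviation 1."""
    m, s = mean(values), pstdev(values)
    return [(v - m) / s for v in values]

def composite(speed, memory, reasoning):
    """Average the three standardized measures, student by student."""
    standardized = [z_scores(speed), z_scores(memory), z_scores(reasoning)]
    return [mean(triple) for triple in zip(*standardized)]

# Made-up raw scores for four hypothetical students
speed = [40, 50, 60, 70]
memory = [3, 4, 5, 6]
reasoning = [10, 14, 18, 22]
summary = composite(speed, memory, reasoning)
print([round(s, 2) for s in summary])
```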

Fluid Cognitive Skills Predict Test Scores

Our first step is to examine the relationship between our measures of fluid cognitive skills and scores from the state’s standardized tests. We look at the students’ scores on the Massachusetts Comprehensive Assessment System (MCAS) tests in math and reading (ELA) and improvements in those test scores over time. We use simple correlation coefficients to measure the strength of the relationship between fluid cognitive skills and test scores. Correlation coefficients can range from -1 to 1, with a correlation of 0 indicating that there is no linear relationship between the two variables in question.

The correlations between our measures of fluid cognitive skills and 8th-grade math test scores are positive and statistically significant, ranging from 0.27 for working memory to 0.53 for fluid reasoning. The correlation between math test scores and our summary measure of fluid cognitive ability is 0.58, which implies that differences in fluid cognitive skills can account for more than one-third of the total variation in math achievement. The relationships are somewhat weaker for test scores in reading. Even so, variation in our summary measure of fluid cognitive ability can explain as much as 16 percent of the total variation in reading achievement.
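
The link between a correlation and the share of variation it accounts for is simply the square of the correlation. A minimal check of the figures above follows; the helper name is hypothetical.

```python
def variance_explained(r: float) -> float:
    """Share of variation accounted for by a linear relationship with correlation r."""
    return r ** 2

# Math: the reported correlation of 0.58 with the summary measure
math_share = variance_explained(0.58)

# Reading: working backward from the reported 16 percent
reading_r = 0.16 ** 0.5

print(f"math: {math_share:.1%} of variation")            # just over one-third
print(f"reading: implied correlation {reading_r:.2f}")   # about 0.40
```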

Fluid cognitive skills are also related to the rate at which students improve their test-score performance over time. To measure gains in student achievement, we calculate the difference between 8th-grade performance in each subject and the performance level that would have been expected based on performance in both subjects in 4th grade. The correlations between our summary measure of fluid cognitive ability and test-score gains in math and reading were 0.32 and 0.18, respectively.
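
The gain measure described above amounts to a regression residual: the 8th-grade score minus the score predicted from both 4th-grade scores. The sketch below fits that prediction by ordinary least squares on made-up standardized scores. The function names and data are hypothetical, and the authors' actual specification may differ.

```python
def solve(a, b):
    """Solve a small linear system by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def residual_gains(math4, read4, score8):
    """8th-grade score minus the least-squares prediction from both 4th-grade scores."""
    rows = [[1.0, m, r] for m, r in zip(math4, read4)]  # intercept plus two predictors
    xtx = [[sum(row[i] * row[j] for row in rows) for j in range(3)] for i in range(3)]
    xty = [sum(row[i] * y for row, y in zip(rows, score8)) for i in range(3)]
    beta = solve(xtx, xty)
    return [y - sum(b * x for b, x in zip(beta, row)) for row, y in zip(rows, score8)]

# Made-up standardized scores for six hypothetical students
math4 = [-1.0, -0.5, 0.0, 0.5, 1.0, 0.2]
read4 = [-0.8, 0.1, -0.3, 0.9, 0.4, -0.6]
math8 = [-0.7, -0.1, 0.3, 0.6, 1.2, -0.2]
gains = residual_gains(math4, read4, math8)
print([round(g, 3) for g in gains])
```

A positive value means a student scored higher in 8th grade than 4th-grade performance alone would predict.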

A high degree of correlation between measures of fluid cognitive skills and test scores is not news. As noted above, fluid cognitive ability has a long track record of predicting how much students know and are able to do. Our findings do suggest, however, that the specific measures of fluid cognitive skills we administered in classrooms as part of our research were able to capture academically relevant differences in student cognition.

Schools Improve Test Scores but Not Fluid Skills

We address our central question of whether schools that raise student test scores also improve fluid cognitive skills in two complementary ways. First, we use our entire sample to analyze the extent to which the schools that students attend can explain the overall variation in student test scores and fluid cognitive skills, controlling for differences in prior achievement and student demographic characteristics (including gender, age, race/ethnicity, and whether the student is from a low-income family, is an English language learner, or is enrolled in special education). Second, we focus on the subset of students who entered the admissions lottery at one of the five oversubscribed charter schools in order to study how attending one of those schools affected test scores and fluid cognitive skills.

Consistent with other research on school effects, we find that the school a student attends can explain a substantial share of the overall variation in test scores: that single factor explains 34 percent of the variation in math scores and 24 percent of the variation for reading. In contrast, after accounting for prior achievement and demographics, the school attended explains just 2.3 percent of our summary measure of fluid cognitive ability.
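
To make "share of variation explained by the school attended" concrete, the simplified sketch below computes the between-school share of total variance for one outcome. Unlike the analysis described above, it does not adjust for prior achievement or demographics, and the function name and data are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def between_school_share(scores, schools):
    """Fraction of total variation in scores that lies between school averages."""
    grand = mean(scores)
    by_school = defaultdict(list)
    for score, school in zip(scores, schools):
        by_school[school].append(score)
    total = sum((s - grand) ** 2 for s in scores)
    between = sum(len(v) * (mean(v) - grand) ** 2 for v in by_school.values())
    return between / total

# Four hypothetical students in two hypothetical schools
scores = [1.0, 3.0, 5.0, 7.0]
schools = ["A", "A", "B", "B"]
share_between = between_school_share(scores, schools)
print(share_between)  # 0.8
```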

This pattern suggests that schools may influence students’ test scores but not by affecting their fluid cognitive skills. However, this analysis does not account for the possible sorting of students into particular schools based on characteristics not captured by their prior achievement and demographic characteristics. Such “selection effects” could in theory account for the apparent school impacts on test scores, or even the apparent absence of impacts on fluid cognitive skills.

Our second analysis aims to address this concern. Because the oversubscribed charter schools in our sample admit students via random lotteries, comparing the outcomes of lottery winners (most of whom enrolled in a charter school) and lottery losers (most of whom did not) is akin to a randomized controlled trial of the kind often used in medical research. Evaluations led by Harvard’s Tom Kane and MIT’s Josh Angrist have used this lottery-based method to convince most skeptics that the impressive test-score performance of the Boston charter sector reflects real differences in school quality rather than the types of students charter schools serve.

Due to the limited coverage of our sample, we cannot claim for our analysis the same level of rigor as these previous lottery-based evaluations. Of the roughly 700 applicants for the lotteries used to admit students in the 8th-grade cohort in our study, only 200 of them are in our evaluation sample. Focusing on lottery applicants is nonetheless useful because it enables us to hold constant whatever unmeasured differences lead some students to apply for a seat in a charter school and others to remain within the district. When comparing lottery winners and losers, we also control for prior achievement and the same set of demographic characteristics used in our broader analysis. We use standard methods to account for the fact that not all lottery winners enrolled in a charter school and remained there throughout middle school (and some lottery losers eventually obtained a seat). This approach enables us to generate estimates of the effect of each additional year of actual attendance at a charter school between 5th and 8th grade.
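
The article does not name the "standard methods" it uses. One common approach in lottery studies is an instrumental-variables estimate, whose simplest form is the Wald ratio: the winner-loser gap in outcomes divided by the winner-loser gap in years of charter attendance. The sketch below shows that ratio on made-up data without the control variables mentioned above; all names and numbers are hypothetical.

```python
from statistics import mean

def wald_estimate(won, years, outcome):
    """Per-year effect: outcome gap between winners and losers over their attendance gap."""
    win_outcome = mean(o for w, o in zip(won, outcome) if w)
    lose_outcome = mean(o for w, o in zip(won, outcome) if not w)
    win_years = mean(y for w, y in zip(won, years) if w)
    lose_years = mean(y for w, y in zip(won, years) if not w)
    return (win_outcome - lose_outcome) / (win_years - lose_years)

# Eight hypothetical applicants: some winners never enroll, one loser gets in later
won = [True, True, True, True, False, False, False, False]
years = [4, 4, 2, 0, 0, 0, 0, 2]                        # years in a charter, grades 5 to 8
outcome = [0.6, 0.5, 0.3, 0.0, 0.0, 0.1, -0.1, 0.2]     # test score in standard deviations

effect_per_year = wald_estimate(won, years, outcome)
print(round(effect_per_year, 3))  # 0.15
```

Dividing by the attendance gap rescales the raw winner-loser difference, which is diluted by winners who never enroll and losers who eventually do, into an effect per year actually attended.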

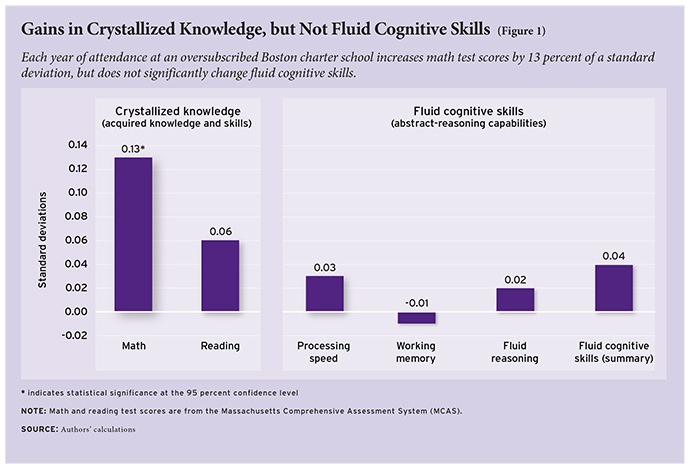

Our results show that each year of attendance at an oversubscribed Boston charter school increases the math test scores of students in our sample by 13 percent of a standard deviation. This is a noteworthy effect, equivalent to roughly a 50 percent increase in the academic progress students typically make in a school year (see Figure 1). Charter school attendance also appears to have a modest positive effect on reading scores, though this estimate falls short of statistical significance due to the relatively small number of students in our lottery sample. Even as students benefit academically, however, their fluid cognitive skills hardly budge. The estimated effect of charter school attendance for each of our measures is very small in magnitude; none is statistically significant.

Are Test-Score Gains “Real”?

There is ample reason to believe that the test-score gains generated by these schools are meaningful, despite the lack of corresponding improvement in fluid cognition. State tests are aligned to standards that specify the knowledge and capabilities students are expected to acquire—the very things cognitive psychologists call crystallized knowledge. And there is strong evidence that crystallized knowledge, which also bears a strong resemblance to E. D. Hirsch’s notion of Core Knowledge, matters a great deal for success in school and beyond. Recent studies by Harvard economist Raj Chetty and colleagues confirm that teachers who improve student test scores also improve their students’ earnings as adults (see “Great Teaching,” research, Summer 2012). Moreover, lottery-based evaluations of the Boston charter sector show that attending high schools affiliated with three of the charter schools in our sample increases Advanced Placement test-taking and performance and the likelihood of attending a four-year college.

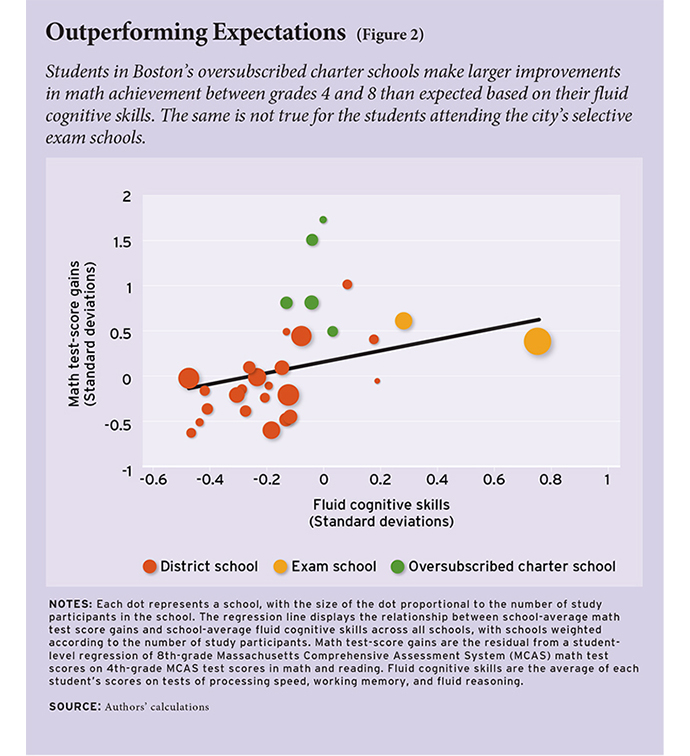

Indeed, in our view, the unique data we gathered for this study make these schools’ accomplishments all the more impressive. They show that the schools that are most effective in raising student test scores do so in spite of the strength of the underlying relationship between math achievement and fluid cognitive skills. In other words, these schools have figured out ways to raise students’ academic achievement well above what is expected given the students’ baseline fluid cognitive skills.

A compelling way to see this is to look at the relationship across schools between the average test-score gain students make between the 4th and 8th grade and our summary measure of their students’ fluid cognitive ability at the end of that period (see Figure 2). Each dot represents a school, and the diagonal line shows the overall relationship between test-score gains and fluid cognitive ability across the full sample of schools. The extent to which a school is above or below that line indicates whether the average test-score improvement among its students has been greater or less than would be predicted based on their fluid cognitive skills.
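
The logic of the figure can be sketched in a few lines: fit a straight line to school-level averages, then measure how far each school sits above or below it. The example assumes the line is fit by least squares across school averages, which the article does not confirm; the four schools and their values are made up.

```python
from statistics import mean

def fit_line(x, y):
    """Least-squares slope and intercept for a single predictor."""
    mx, my = mean(x), mean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return slope, my - slope * mx

def distance_from_line(x, y):
    """Vertical distance of each point from the fitted line (positive means above)."""
    slope, intercept = fit_line(x, y)
    return [b - (intercept + slope * a) for a, b in zip(x, y)]

# Hypothetical school averages: fluid cognitive ability and 4th-to-8th-grade gain
fluid = [-0.5, 0.0, 0.5, 1.0]
gain = [-0.2, 0.6, 0.2, 0.4]

slope, intercept = fit_line(fluid, gain)
above_or_below = distance_from_line(fluid, gain)
print([round(d, 2) for d in above_or_below])  # [-0.24, 0.42, -0.12, -0.06]
```

In this toy data the second school sits well above the line, the pattern the article reports for the oversubscribed charter schools.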

Most schools fall relatively close to the regression line, indicating that their students’ academic progress is roughly as expected given the students’ fluid cognitive skills, but there are clear exceptions. Most notably, each of the five oversubscribed charter schools is well above the regression line. A few open-enrollment district schools also show the ability to drive similarly outsized gains, an important reminder that while governance matters, what counts in the end is effective practice. Finally, while exam-school students have considerably higher fluid cognitive skills (as would be expected of students who gain admission via test scores and grades), attending one of these locally renowned schools in the company of other bright students confers no systematic advantage. This last finding is consistent with recent evidence showing no academic benefits of attending a Boston or New York City exam school for students who just met the admissions criteria (see “Exam Schools from the Inside,” features, Fall 2012).

What do these differences in school performance mean in layman’s terms? Among students who fell below the midway point on our summary measure of fluid cognitive ability, only 20 percent of those attending a district school were deemed proficient in math as defined by Massachusetts on its 8th-grade math test. In oversubscribed charter schools, 71 percent of such students were deemed proficient. This is a remarkable difference for students who rank lower than their peers on a key enabling capacity. For district students, success is the rare exception (2 in 10), while for oversubscribed charter school students, it is closer to the rule (7 in 10).

At the same time, fluid cognitive skills remain potent predictors of academic progress even among students attending oversubscribed charter schools. While these schools succeed in generating test-score gains for students of all cognitive abilities, it is still the case that students with strong fluid cognitive skills learn more. Indeed, the strength of the correlation between fluid cognitive skills and test-score growth in oversubscribed charter schools is statistically indistinguishable from the correlations we observe among students in open-enrollment district schools and exam schools.

Could Schools Boost Fluid Cognitive Skills, Too?

Our research sought to examine whether schools that have demonstrated success in raising test scores also boost students’ fluid cognitive skills—either as a byproduct or perhaps as a principal pathway for improvements in test scores. That turns out not to be the case. This result does not, in our view, call into question the value of the improvements in crystallized knowledge captured by improvements in test scores.

What we do not yet know, however, is which long-term outcomes are more strongly influenced by fluid cognitive skills and which by crystallized knowledge. One reason is that, as we see in our study sample, fluid cognitive skills and crystallized knowledge tend to be highly correlated. In fact, schools like the most effective ones in our study may be the first to produce students for whom these two types of cognitive ability are consistently decoupled, providing an opportunity to study just which kinds of outcomes are enabled by gains in crystallized knowledge alone. For example, it is possible that the oft-discussed challenges some students from high-performing urban schools experience in college (see “‘No Excuses’ Kids Go to College,” features, Spring 2013) stem in part from deficits in fluid cognitive skills.

Indeed, perhaps the most important implication that we draw is that educators seeking to innovate should get about the business of developing and rigorously testing the effects of interventions to raise these fluid cognitive skills. Improved abstract-reasoning capacity likely has important benefits in its own right and is highly related to important skills such as reading comprehension. Deficits in students’ fluid cognitive skills may also prevent even the most effective schools from raising all of their students’ academic performance to the desired level.

The question of whether processing speed, working memory, and fluid reasoning skills can be developed through intentional efforts is an area of active debate among cognitive psychologists. Several researchers have published studies claiming that they have improved these skills through deliberate practice aimed at one or more of these skills and, in a few cases, have shown that such improvements have translated into gains in other, broader measures of cognitive ability. None of these interventions has yet been shown to improve long-term outcomes such as college completion or earnings, however, and other researchers have failed to replicate even the narrower impacts that have been reported. Meanwhile, private companies such as Lumosity are aggressively marketing software-based training programs derived from this line of research to the general public as “brain training.”

This is a perfect time for cognitive psychologists, educators, and perhaps even game and software developers to join forces in rapid-cycle experimentation to explore whether and how schools can broadly and permanently raise students’ fluid cognitive skills. Successful schools have demonstrated their ability to dramatically increase crystallized knowledge and thereby raise test scores, improving other important student outcomes in the process. Boosting fluid cognitive skills might have an equally profound impact on students’ academic and life outcomes.

Martin West is an associate professor at the Harvard Graduate School of Education (HGSE) and deputy director of the Program on Education Policy and Governance at the Harvard Kennedy School. Christopher Gabrieli is adjunct lecturer at HGSE and executive chairman of the National Center on Time & Learning. Matthew Kraft is assistant professor of education at Brown University. Amy Finn is a postdoctoral fellow at the Massachusetts Institute of Technology, where John Gabrieli is professor of health sciences and technology and cognitive neuroscience.

This article is based on a study published in the March 2014 issue of Psychological Science.

This article appeared in the Fall 2014 issue of Education Next. Suggested citation format:

West, M.R., Gabrieli, C.F.O., Finn, A.S., Kraft, M.A., and Gabrieli, J.D.E. (2014). What Effective Schools Do: Stretching the cognitive limits on achievement. Education Next, 14(4), 72-79.