Let’s invent a game; it’s called “Rate This School!”

Start with some facts. Our school—let’s call it Jefferson—serves a high-poverty population of middle and high school students. Eighty-nine percent of them are eligible for a free or reduced-price lunch; 100 percent are African American or Hispanic. And on the most recent state assessment, less than a third of its students were proficient in reading or math. In some grades, fewer than 10 percent were proficient as gauged by current state standards.

That school deserves a big ole F, right?

Now let me give you a little more information. According to a rigorous Harvard evaluation, every year Jefferson students gain two and a half times as much in math and five times as much in English as students at the average school in New York City’s relatively high-performing charter sector. Its gains over time are on par with or better than those of uber-high-performing charters like KIPP Lynn and Geoffrey Canada’s Promise Academy.

Jefferson is so successful, the Harvard researchers conclude, because it has “more instructional time, a relentless focus on academic achievement, and more parent outreach” than other schools.

Now how would you rate this school? How about an A?

* * *

My little thought experiment makes an obvious point, one that isn’t particularly novel: Proficiency rates are terrible measures of school effectiveness. As any graduate student will tell you, those rates mostly reflect a school’s demographics. What is more telling, in terms of the impact of a school on its students’ achievement and life chances, is how much growth the school helps its charges make over the course of a school year, or what accountability guru Rich Wenning aptly calls students’ “velocity.” This is doubly so in the Common Core era, as states (like New York) move to raise the bar and ask students to show their stuff against a college- and career-readiness standard.

To be sure, proficiency rates should be reported publicly, and parents should be told whether their children are on track for college or a well-paying career. (That’s one of the great benefits of a high standard like the Common Core.) But using these rates to evaluate schools will end up mislabeling as failures many schools that might in fact be doing incredible work helping their students make progress over time.

Let’s go back to Jefferson. As a middle school, it welcomes children who enter several grade levels behind. Even if these students make incredible gains in their sixth-, seventh-, and eighth-grade years, they still won’t be at grade level, much less “proficient,” when they sit for the state test. Furthermore, unless the state gives an assessment that is sensitive enough to detect progress—ideally a computerized adaptive instrument that allows for “out of grade level” testing—it might not give Jefferson the credit for all the progress its students are making.
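To make that arithmetic concrete, here is a minimal Python sketch. Every number in it is invented for illustration (a three-grade-level entry gap, 1.5 grade levels of growth per year); none of it is Jefferson’s actual data, and the helper `years_behind` is hypothetical. It simply shows that a student who grows 50 percent faster than the typical pace for three straight years can still sit well below grade level at the end of eighth grade.

```python
# Hypothetical illustration: all numbers below are invented, not real school data.

def years_behind(entry_gap: float, annual_gain: float, years: int) -> float:
    """Grade levels below grade level after `years` of schooling.

    entry_gap   -- grade levels behind at entry (assumed)
    annual_gain -- grade levels gained per school year (1.0 = typical pace)
    """
    # The grade-level expectation rises by 1.0 each year, so the gap closes
    # only by the amount the annual gain exceeds 1.0.
    return max(0.0, entry_gap - (annual_gain - 1.0) * years)


entry_gap = 3.0    # assumed: enters sixth grade three grade levels behind
annual_gain = 1.5  # assumed: 1.5 grade levels of growth per year

for years in range(0, 4):
    gap = years_behind(entry_gap, annual_gain, years)
    status = "at grade level" if gap == 0 else f"{gap:.1f} grade levels behind"
    print(f"after {years} year(s): {status}")
```

Under these assumed numbers, a proficiency-rate measure would count this student as a failure in sixth, seventh, and eighth grade alike, while a growth measure would register well-above-average progress every year.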

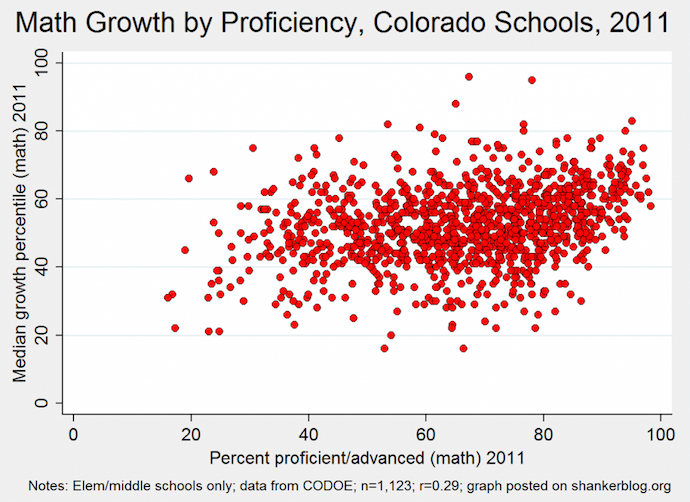

Here’s the rub: There are thousands of Jeffersons out there, schools with low proficiency rates but strong growth scores. (See figure, borrowed from this Shanker Blog post, and notice in particular the many schools whose “growth percentile” is above 50 but whose percent proficient is below 50.)

This is particularly the case with middle schools and high schools, serving as they do students who might be four or five grade levels behind when they enter. Is it any surprise that middle schools and high schools are significantly more likely to be subject to interventions via the federal School Improvement Grants program? They are being punished for serving students who are coming to them way, way below grade level.

Again, none of this is particularly new or noteworthy. Others (especially reform critics) have made the same arguments countless times before. Yet an emphasis on proficiency rates over student growth is still entrenched in state and federal policy. Yes, Margaret Spellings allowed for a “growth model pilot” when she was secretary of education—but schools still had to get all students to proficiency within three years, an unrealistic standard in states with a meaningful (and rigorous) definition of proficiency. Arne Duncan has also espoused the wisdom of looking at progress over time, yet his ESEA waiver rules require state accountability systems to take proficiency rates into account—those are expected to be the drivers in identifying “focus” and “priority” schools. Nor are Democrats in Congress any better; their ESEA reauthorization bills would maintain No Child Left Behind’s reliance on “annual measurable objectives” driven by proficiency rates.

The charter sector is wedded to proficiency rates, too. In New Orleans, for instance, the Recovery School District has, in the cause of boosting quality, shut down schools with low proficiency rates but strong individual student gains over time. What it has really done, however, is close schools worthy of replication, not extinction.*

* * *

Have you figured out by now the true identity of our “Jefferson School”? It’s the highly acclaimed Democracy Prep. According to the new Common Core–aligned New York test, it’s a low-proficiency-rate, high-growth school. Seth Andrew, Democracy Prep’s founder, explained to me:

> Like the rest of New York, our Democracy Prep Public Schools saw dramatic drops in “proficiency rates.” In fact, we saw declines that were even greater than most. Why?
>
> 1) Entry Grade Level: Charters that enroll at the K-1 level did dramatically better than those (like Democracy Prep) who enroll in the middle school grades. This is potentially GREAT news for urban education because it means that if students don’t fall dramatically behind, they can get on grade level by grade 3, and stay on or above grade level over time. However, it is not even remotely reasonable to compare schools that randomly enroll in kindergarten to those that enroll in the sixth grade. One school has had seven years with a student while we’ve had nine months!
>
> 2) Growth Matters Most: The metric that no one has seen yet and that will be the most important to our teachers, administrators, students, and families at Democracy Prep is not “proficiency” but “value-added growth.” The reason we have operated only “A” rated schools every year since 2006 is primarily because 60 percent of that grade has been based on individual student growth, a metric on which our scholars and teachers post some of the most dramatic improvements year-over-year. In fact, even this year, our percent of “1’s” goes dramatically down in grade seven while our “2’s” go up, and by eighth grade we’ve dramatically reduced “1’s” and substantially increased “3’s and 4’s.”
>
> My key point here is that NO ONE in this work, especially at Democracy Prep, makes so-called “miracle school claims” as reported by our critics. We believe, in fact we KNOW, that educating low-income students is incredibly hard work, compounded by the challenges of poverty, mobility, ELL status, and disability. These are not excuses; they are facts. To move our scholars from whatever grade or performance level they enter to be ready for success in the college of their choice and a life of active citizenship takes us at least five years. Given that time, our scholars consistently out-perform wealthy Westchester County on their Regents exams in nearly every subject and our first class of graduates outperformed white students on their SAT’s. Nearly 70 percent of our graduates met the NYC “aspirational performance measure” for college readiness compared to 22 percent across NYC and we require that our graduates earn an Advanced Regents Diploma because, as these new CCSS results prove, the old bar was far too low.

Is Democracy Prep an A school or an F school? The answer seems obvious to me—and should apply to any school with similar results, name brand or not, charter school or not. It’s time that policy caught up to common sense and put proficiency-rates-as-school-measures out of their misery once and for all.

— Mike Petrilli

*CORRECTION: The school I had in mind is Pride College Prep; someone associated with the school had informed me that it had made strong student-level gains. But I’ve learned from Michael Stone, the Chief External Relations Officer at New Schools for New Orleans, that in fact data from CREDO showed the school’s gains to be among the weakest in the RSD. Furthermore, Michael wrote to me, “None of the charters BESE or the RSD closed would have been candidates for replication.” My apologies for the error.

This blog entry first appeared in the Fordham Institute’s Education Gadfly Weekly.