Recently Carlos Xabel Lastra-Anadon and I compared 2009 state proficiency standards with one another, using as a benchmark the 4th and 8th grade state-by-state assessments in math and reading administered by the National Assessment of Educational Progress (NAEP). Our findings can be found on the EdNext website.

Shortly after our report was released, a reporter asked whether our results differed from those released last fall by the U.S. National Center for Education Statistics (NCES) in “Mapping State Proficiency Standards Onto NAEP Scales: 2005-2007.”

The quick answer to that question is that our study provides updated information on 2009 state proficiency standards, while the NCES report provides information on proficiency standards in 2007. Two years ago, EdNext provided its own ranking of states based on the 2007 proficiency standards (see Paul E. Peterson and Frederick Hess, “Few States Set World-Class Standards,” Education Next, Summer 2008).

So our study has the virtue of timeliness, a worthy quality as long as the substance is accurate. The more interesting question is whether the ranking of 2007 state proficiency standards that we published in 2008 resembles the one NCES recently released for the same 2007 standards.

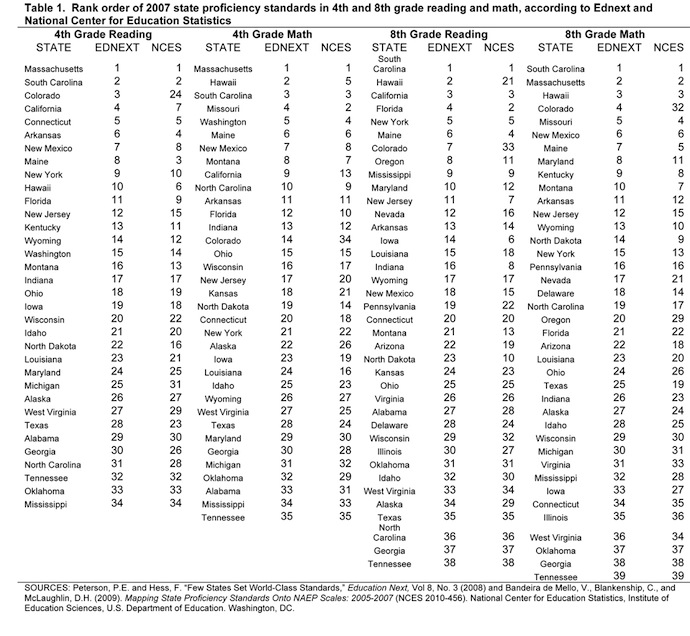

One can find the detailed answer to this question in Table 1. But the bottom line is that both reports yield essentially the same results. In 4th grade reading, 9 of our top 10 states also rank in NCES’s top 10, and 9 of our bottom 10 states also rank in NCES’s bottom 10. In both studies, Race to the Top winner Tennessee ranks at the bottom in 8th grade reading, 8th grade math, and 4th grade math. In 4th grade reading, both studies put Tennessee only two states away from the bottom ranking. In other words, the NCES and the Education Next studies generate pretty much the same findings.
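For readers who want to repeat the overlap count on the rankings in Table 1, here is a minimal sketch in Python. The function name `top_n_overlap` and the state labels are hypothetical placeholders, not part of either study, and the sample orderings are invented purely for illustration.

```python
def top_n_overlap(ordering_a, ordering_b, n=10, from_bottom=False):
    """Count states that fall in the top (or bottom) n of both orderings.

    Each ordering is a list of state names, most demanding standard first.
    Assumes n is at least 1.
    """
    if from_bottom:
        slice_a, slice_b = ordering_a[-n:], ordering_b[-n:]
    else:
        slice_a, slice_b = ordering_a[:n], ordering_b[:n]
    return len(set(slice_a) & set(slice_b))


# Invented orderings for illustration only; NOT the rankings from Table 1.
ednext_order = ["S1", "S2", "S3", "S4", "S5", "S6"]
nces_order = ["S2", "S1", "S4", "S3", "S6", "S5"]

top_shared = top_n_overlap(ednext_order, nces_order, n=3)
bottom_shared = top_n_overlap(ednext_order, nces_order, n=3, from_bottom=True)
```

With the actual Table 1 orderings and `n=10`, the same call would reproduce the kind of count reported above for 4th grade reading.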

In the NCES report on 2007 proficiency standards, state rankings are difficult to find (perhaps because NCES had provided rankings based on 2005 proficiency standards in an earlier report, “Mapping 2005 State Proficiency Standards onto the NAEP Scales”). But in Tables 11, 12, 17 and 18 of the new report (covering the 2007 proficiency standards), the column headed “2007 NAEP scale equivalent” provides scores needed to generate rankings for some thirty states. The actual number of states for which scores are available varies with the grade and subject. But if you want to find out the NCES ranking of the 2007 state proficiency standards without undertaking the laborious task of converting those scale scores to a rank order, simply view Table 1.
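The conversion from scale scores to a rank order is mechanical, and a short Python sketch shows one way to do it. It assumes that a higher NAEP scale equivalent means a more demanding standard and that tied states share a rank. The function name and the numbers below are hypothetical placeholders, not values taken from the NCES tables.

```python
def rank_by_scale_equivalent(scale_equivalents):
    """Rank states from the highest NAEP scale equivalent (rank 1) to the lowest.

    States with identical scores share the same rank, and the next rank
    is skipped accordingly (1, 2, 2, 4, ...).
    """
    ordered = sorted(scale_equivalents.items(), key=lambda kv: kv[1], reverse=True)
    ranks = {}
    previous_score = None
    current_rank = 0
    for position, (state, score) in enumerate(ordered, start=1):
        if score != previous_score:
            current_rank = position
            previous_score = score
        ranks[state] = current_rank
    return ranks


# Placeholder scores for illustration only; NOT figures from the NCES report.
example_scores = {"State A": 232.0, "State B": 198.5, "State C": 215.0, "State D": 215.0}
example_ranks = rank_by_scale_equivalent(example_scores)
```

Because the number of states with available scores varies by grade and subject, the ranking would need to be computed separately for each of the four tables.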

Though the NCES and EdNext approaches generate similar results, they differ in an interesting way. We rely upon the percentage proficient reported on each state’s department of education website, a figure that averages student performance across every school in the state. In other words, we rely upon the state’s own census of all reported results. NCES looks at the proficiency ratings of students at the schools that participated in the National Assessment of Educational Progress, a fairly (but not perfectly) representative sample.

Surveys yield results similar to those of censuses when the surveys are representative and the censuses are complete. Divergences arise when surveys are not representative or censuses are incomplete. A little of both could be going on, which could account for the minor differences between the two studies. Thus, the two approaches provide a useful check on one another.

The main reason for preferring our census-like approach is that it allows for a timely assessment of every state, whereas the NCES approach is not only delayed by more than two years but is also limited to some thirty states. For example, NCES does not report the state proficiency standards ranking for Race to the Top (RttT) winner Delaware, apparently because it did not have enough observations to provide a reliable estimate for that state. By using the census approach, we were able to give Delaware a C minus, putting it well below the median state.

For one state, our study produced a result that differs dramatically from NCES’s. We rank Colorado in our top tier of states, while NCES ranked the state at the bottom. We are not quite sure what happened, but we have a guess. After double-checking our numbers and finding no error, we noticed that between 2005 and 2007, Colorado raised its proficiency standards dramatically. The NCES data may have been collected before Colorado made the shift, which would leave the state stuck near the bottom in the NCES results. If any reader can verify that explanation, or offer a better one, we would be most appreciative.

Otherwise, one can assume our 2009 report reveals pretty much what NCES will report in a couple of years—as long as one does not put a lot of weight on any specific ranking number. We prefer to give states a grade from A to F, because that way of grading states groups similar states together rather than highlighting any specific rank number as significant.

Still, one does not go wrong if one thinks of Tennessee as pretty much at the very bottom. On that question, NCES and EdNext are in perfect agreement.