The Trump administration’s Department of Government Efficiency (DOGE) did not just cut the Institute of Education Sciences (IES). It demolished it. Staff were laid off. Contracts were canceled. Core data collections were thrown into disarray. The execution was chaotic.

But the disruption creates an opportunity. As Rick Hess has argued in Education Next, the first priority for rebuilding IES should be restoring its core function: rigorous, national data collection.

We agree. But rebuilding IES can also mean fixing its most obvious gap.

The National Assessment of Educational Progress (NAEP) is one of the great achievements of American social science. It uses large, nationally representative samples of students. It has produced decades of consistent data. It allows researchers to track long-term trends and measure learning loss with precision. And the data helps us understand the most fundamental purposes of education: Can kids read? Can they do math?

But . . .

Over the past decade, the biggest changes in adolescent life have happened outside school hours. Teenagers spend more time on screens and on the couch, and there’s no sign of that changing. They spend less time playing sports, working jobs, reading books, hanging out in person, and dating. Sleep has declined. Achievement has declined. Anxiety and depression have risen.

Yet there are basic questions we cannot answer with reliable data. What fraction of teens are mostly languishing? How many hours do teens spend on different activities each week? How does this vary by income, geography, or family structure? Once a teen settles into a steady pattern, say six hours of screen time a day, how often does that teen cut it in half, to three hours? What happens when those patterns change? Enter the National Assessment of Flourishing and Participation: the NAFP.

The Right Moment to Ask This Question

The Trump administration’s recently released report, “Reimagining the Institute of Education Sciences,” written by Amber Northern, poses a key question about IES’s longitudinal studies. Before DOGE canceled them, the National Center for Education Statistics, or NCES (IES’s statistical division), was running four separate panels: the Early Childhood Longitudinal Study, High School and Beyond, the High School Longitudinal Study of 2009, and the Beginning Postsecondary Longitudinal Study. The Northern Report notes that these studies were expensive, sometimes overlapping, and not designed to produce timely, practitioner-facing results. It asks whether a single “super panel with various cohort types” might be more efficient than tracking three or four separate panels of students over time.

That is exactly the right question. And we want to propose the right answer.

First, a clarification about NAEP. The existing national assessment is cross-sectional by design, and by law. The program is legally prohibited from collecting personally identifiable information or tracking individual students over time. Its results provide a snapshot of what students know at a given moment, taken at enormous scale. It is not a longitudinal instrument.

By contrast, our proposed NAFP sits in the longitudinal-study family. What NAEP and NAFP share is not methodology but function: Both are meant to serve as national report cards, generating a reliable shared factual basis for public debate. NAEP plays that role for academic achievement inside school. No instrument plays that role for how teenagers spend their time outside of it.

The Northern Report actually gestures at this gap. In a footnote, it mentions stakeholder interest in “refreshing the various [NAEP] background questionnaires to capture data on use of AI, screen time, and other present-day concerns.”

That impulse is right. But squeezing screen time questions into a NAEP background survey is the wrong vehicle. Those surveys depend on students’ own recollections, which are not reliable measures of time use. NAEP cannot follow individual students. It cannot use data from wearables or phone telemetry. It cannot run experiments. The answer to that impulse is not a tweaked questionnaire but a new instrument, built for the job.

What We Have—and Why It Falls Short

The federal government does collect some data on how young people spend their time, but none of its surveys or studies answer the key questions. It is worth being precise about why, because the case for developing a new instrument rests on understanding exactly what the existing ones cannot do.

The American Time Use Survey (ATUS, run by the Bureau of Labor Statistics) is the gold standard for one-day time diaries. It has been running since 2003, samples teens 15 and older, and captures detailed records of how individuals spend their time during a single 24-hour period. It is genuinely useful for tracking national averages.

But its fundamental limitation is structural. Each respondent provides exactly one diary day. That means ATUS cannot tell you how an individual teen behaves across a typical week. It cannot tell you whether the teen who spent six hours on screens Monday was the same teen who skipped soccer practice on Tuesday. It cannot track changes in an individual teen’s time use over time, nor can it serve as a platform for any intervention. ATUS is a snapshot, not a film. For understanding national averages, that’s fine. For understanding whether a particular kid is flourishing this semester, it tells you nothing.

The Adolescent Brain Cognitive Development Study (ABCD, run by the National Institutes of Health) is the most ambitious longitudinal effort in the space. It enrolled over 10,000 children ages 9–10, uses wearables and passive smartphone sensing, and tracks behavior in serious depth. It proves that sensor-based measurement of teen behavior at scale is feasible. That matters.

But ABCD is a neuroscience study. It is brain-first, not participation-first. It was not designed to measure whether teens are converting their evenings into sports, the arts, paid work, or homework. It does not link to schools’ learning management systems or team rosters. It has no experimental engine for testing evening-time interventions. And it is not designed to produce the simple, policy-facing measures that a national assessment would provide.

The High School Longitudinal Study (HSLS:09) is NCES’s own flagship adolescent panel, tracking over 23,000 9th graders’ academic outcomes. It is excellent at capturing what happens inside schools. It is nearly silent on what happens between 3 p.m. and midnight. No wearables, no phone data, no after-school participation verification. HSLS tells us whether a student graduated or took calculus. It cannot tell us whether that student was spending five hours a day on TikTok.

Surveys like the Youth Risk Behavior Surveillance System (YRBSS, run by the Centers for Disease Control and Prevention) provide broad snapshots of teen behavior. But they rely on self-report, do not follow individuals over time, and again are not designed as experimental platforms. They are useful for surveillance. They are not useful for policy evaluation.

Monitoring the Future is a longstanding (since 1975) longitudinal study of the “behaviors, attitudes, and values” of adolescents from 8th to 12th grade and young adults. But it relies on self-report and was designed around substance use rather than time allocation.

The University of Michigan’s Panel Study of Income Dynamics is another venerable longitudinal study (running since 1968) that includes youth time-diary data through its Child Development Supplement. But it was built to study household economics and intergenerational mobility, not after-school hours.

Each of these efforts matters. Even taken together, they still leave a gap.

No national system tracks how teenagers spend their time week by week, with verified measurement, linked cleanly to outcomes. The one that comes closest on depth is ABCD, due to its pioneering use of sensors. The one that comes closest on education outcomes is HSLS:09. The one that comes closest on time use is ATUS. But none of them, alone or in combination, can answer the most basic question: How many verified hours is a nationally representative sample of American teenagers spending on flourishing activities versus languishing activities each week?

A New Instrument: The National Assessment of Flourishing and Participation

The idea is simple. NAEP measures what students know. NAFP would measure what they do, outside of school, all week.

At the center is a clear metric. The NAFP would provide a weekly count of hours spent doing activities tied to teens’ development: sports and fitness, the arts, paid work, volunteering, in-person socializing, homework (verified, to confirm it is real work), and sleep. This would yield what we’ll call the Productive Hours Index (PHI).

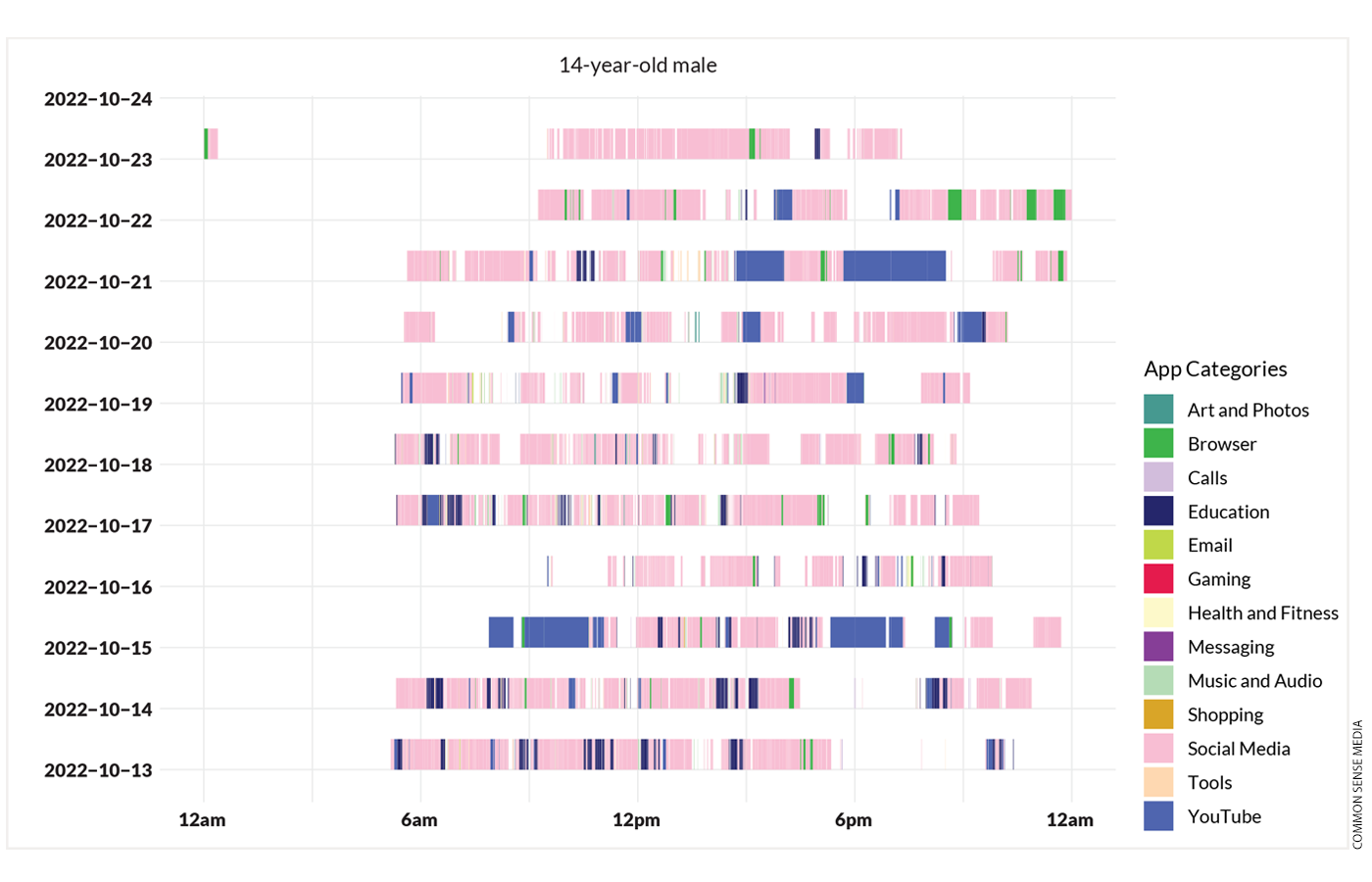
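The PHI arithmetic is simple enough to sketch. The Python below is a minimal illustration, not a specification: the category labels, the `ActivityRecord` structure, and the rule that unverified activities are excluded are all hypothetical assumptions about how verified records might be stored.

```python
from dataclasses import dataclass

# Hypothetical labels for the seven activity types the PHI would count.
PRODUCTIVE_CATEGORIES = {
    "sports_fitness", "arts", "paid_work", "volunteering",
    "in_person_social", "verified_homework", "sleep",
}


@dataclass
class ActivityRecord:
    category: str   # hypothetical category label for the activity
    minutes: float  # duration of the activity
    verified: bool  # True if confirmed by a sensor, the LMS, or a third party


def productive_hours_index(week: list[ActivityRecord]) -> float:
    """Sum verified minutes in productive categories for one teen-week, in hours."""
    total_minutes = sum(
        r.minutes for r in week
        if r.verified and r.category in PRODUCTIVE_CATEGORIES
    )
    return total_minutes / 60.0


example_week = [
    ActivityRecord("sleep", 8 * 60 * 7, True),        # 8 hours a night for 7 nights
    ActivityRecord("sports_fitness", 3 * 90, True),   # three 90-minute practices
    ActivityRecord("verified_homework", 120, False),  # not verified, so excluded
    ActivityRecord("passive_screen", 600, True),      # not a PHI category
]
print(productive_hours_index(example_week))  # 60.5
```

In this hypothetical week, 56 hours of sleep plus 4.5 hours of verified practice give a PHI of 60.5; the unverified homework and the passive screen time do not count.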

Alongside PHI would be a Screentime Hours Index (SHI). Common Sense Media’s Constant Companion report offers an example of this kind of measure.

Imagine an assessment that provides a standardized, nationally representative measure, making comparisons possible across time, place, and policy environment. The country needs a consistent way to observe how teenagers allocate their time—both the actively real and the passively virtual.

The design would be the super panel the Northern Report is looking for, but focused on the window of time that existing longitudinal studies miss: a panel of about 1,000 students in two age cohorts, followed over several years, with new participants added each year to maintain representativeness. Compared to the canceled NCES longitudinal studies, NAFP would be smaller in scope but far deeper. Intensive measurement of a focused sample produces more actionable signals than a broad survey that skips the hours that matter most for teen flourishing.

Instead of relying on recall surveys, the NAFP would use tools that already exist: smartphone usage logs from Apple Screen Time and Android Digital Wellbeing, with a whitelist distinguishing productive from passive apps; wearables for sleep and physical activity; school LMS data on assignments and completion; simple third-party verification of participation in structured activities—from coach, employer, music teacher, perhaps mom.
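The app whitelist could work roughly as follows. This is a sketch under stated assumptions: it presumes usage logs have already been exported from the phone as (app, minutes) pairs, and the app identifiers and whitelist contents are hypothetical placeholders, not the actual output format of Apple Screen Time or Android Digital Wellbeing.

```python
# Hypothetical whitelist of app identifiers counted as productive screen use.
PRODUCTIVE_APPS = {"edu.example.mathpractice", "edu.example.musictheory"}


def split_screen_hours(usage_log: list[tuple[str, float]]) -> dict[str, float]:
    """Split exported (app_id, minutes) pairs into productive and passive hours.

    Apps not on the whitelist default to passive, so the passive total
    (the input to the SHI) errs toward counting screen time rather than missing it.
    """
    minutes = {"productive": 0.0, "passive": 0.0}
    for app_id, mins in usage_log:
        bucket = "productive" if app_id in PRODUCTIVE_APPS else "passive"
        minutes[bucket] += mins
    return {bucket: total / 60.0 for bucket, total in minutes.items()}


example_log = [
    ("edu.example.mathpractice", 90),     # on the whitelist
    ("social.example.shortvideo", 1200),  # not on the whitelist
    ("social.example.chat", 300),         # not on the whitelist
]
print(split_screen_hours(example_log))  # {'productive': 1.5, 'passive': 25.0}
```

Defaulting unknown apps to passive is a deliberate choice in this sketch; a real whitelist would need ongoing curation as apps change.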

From Description to Experiment

NAFP would ideally do more than capture teen behavior. It would allow experimentation.

Today, most youth interventions are evaluated slowly. Often not at all.

A standing panel would allow researchers to run small, fast trials inside the ongoing cohort. Researchers could evaluate phone curfew kits, sleep campaigns, transportation vouchers to rec leagues, homework check-ins, and job placement support. Each trial would be small (roughly 150 to 400 participants) and fast (six to twelve weeks). Results could be measured in weeks, not years.
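What can a trial of 150 to 400 teens detect? A standard back-of-the-envelope answer is the minimum detectable effect for a two-arm trial. The sketch below assumes equal allocation, a two-sided 5 percent significance level, 80 percent power, and the normal approximation; these are conventional defaults chosen for illustration, not parameters from any NAFP design.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_effect(n_total: int, alpha: float = 0.05, power: float = 0.80) -> float:
    """Smallest standardized effect (in standard deviations) that a two-arm
    trial with equal allocation can detect, using the normal approximation."""
    z = NormalDist()                     # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)   # critical value for a two-sided test
    z_power = z.inv_cdf(power)           # quantile matching the desired power
    n_per_arm = n_total / 2
    return (z_alpha + z_power) * sqrt(2 / n_per_arm)


for n in (150, 400):
    print(n, round(minimum_detectable_effect(n), 2))
# 150 0.46
# 400 0.28
```

Under those assumptions, trials of 150 and 400 participants can detect effects of roughly 0.46 and 0.28 standard deviations. Because NAFP would observe each teen week after week, adjusting for baseline behavior could shrink these thresholds; this simple calculation ignores that gain.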

The Northern Report calls for IES to move toward rapid-cycle research that produces actionable evidence on shorter timelines. NAFP is built for exactly that, applied to the domain where the need is greatest.


Parental Consent and Representative Mix

Any national assessment of teen behavior would face a consent problem. NAEP has a parent opt-out model, but very few families withdraw their kids. The result is a genuinely representative sample of students.

NAFP cannot work that way. Asking families to passively accept phone telemetry, wearables, and activity verification for their children is a different proposition than asking their permission to administer a reading assessment. The intrusiveness is closer to what ABCD requires, and ABCD does MRIs!

Despite ABCD’s aggressive efforts to build a nationally representative sample, roughly 41 percent of its participants came from families earning over $100,000 annually, a demographic group that comprises only 27 percent of American children within the target ages.

NAFP’s sample would be similarly skewed—perhaps more so. Phone monitoring is a different kind of commitment than submitting to an MRI, but not necessarily a smaller one to many teens!
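The size of the ABCD skew can be put in arithmetic terms. A common correction, post-stratification weighting, divides each group’s population share by its sample share. The minimal sketch below applies that to the income figures above, collapsing everyone else into a single hypothetical “under $100,000” group.

```python
def poststrat_weights(sample_share: dict[str, float],
                      population_share: dict[str, float]) -> dict[str, float]:
    """Weight for each group = population share / sample share."""
    return {g: population_share[g] / sample_share[g] for g in sample_share}


# Shares from the ABCD figures cited above; the two-group split is a simplification.
sample = {"over_100k": 0.41, "under_100k": 0.59}
population = {"over_100k": 0.27, "under_100k": 0.73}

w = poststrat_weights(sample, population)
print({g: round(v, 2) for g, v in w.items()})  # {'over_100k': 0.66, 'under_100k': 1.24}
```

Families over $100,000 would count about two-thirds as much, and everyone else about a quarter more. Weighting can only correct for characteristics that are measured, though. If the teens who agree to phone monitoring differ in ways income does not capture, no reweighting fixes that, which is why reducing selection at recruitment matters.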

Randomized studies of panel surveys and clinical trials consistently find that paying participants increases participation rates. That’s what we propose for NAFP. The precise incentive level should be determined through pilot testing, but a payment in the range of $500 to $1,000 per teen is likely needed to offset the time commitment, the burden of being monitored, and privacy concerns. The aim is a meaningful reduction in selection bias and a sample that we can trust represents American teens.

Why This Belongs at IES

Rebuilding IES around data collection is the right move. But the mission should not stop at the schoolhouse door.

The federal role in education has always been about more than what happens during school hours. The biggest challenges students face today—poor mental health, social isolation, declining participation in structured activities—manifest primarily outside of school. Yet we have no national instrument to track them.

An assessment that measures how teenagers spend their time would inform major debates. It would shape discussions about technology and mental health, provide evidence on extracurricular investments, and give parents and policymakers a clearer picture of whether teens are thriving, not just how they’re scoring.

The NAFP is the rare idea that could endure across administrations. Figures as diverse as Arne Duncan and Marco Rubio have pointed to the importance of what happens with kids outside school. As IES looks for a rebirth, NAFP could be an animating, powerful path.

Mike Goldstein and Sean Geraghty are co-founders of the Center for Teen Flourishing.