This post also appears at Rick Hess Straight Up.

Today, RHSU unveils its first annual edu-scholar “Public Presence” rankings. The metrics, as I explained earlier, seek to capture many of the various ways in which academics contribute to public discourse. My hope is that this exercise helps spur conversation about which university-based academics are contributing most substantially to public debates over education and ed policy, and how they do so. The scoring rubric reflects a given scholar’s body of academic work–encompassing books, articles, and the degree to which these are cited–as well as their footprint on the public discourse in 2010.

The rankings are not imagined to be definitive. Rather, they are an imperfect gauge of the various ways in which scholars impacted the edu-debates in 2010, factoring in both long-term and shorter-term contributions. The rankings were restricted to university-based researchers and excluded think tankers (e.g. Checker Finn or Tom Loveless) whose very job is to impact the public discourse. After all, the intent is to nudge what is rewarded and recognized at universities. (The term “university-based” provides a bit of useful flexibility. For instance, at the moment, Tom Kane hangs his hat at Gates, and Tony Bryk hangs his at Carnegie. However, both are established academics who retain a university affiliation and digs on the campus. So, I figured, what the heck….)

Public Presence scores reflect, in more or less equal parts, three things: articles and academic scholarship, book authorship and current book success, and presence in new and old media. (See my earlier post for the specifics of scoring.) The point of measuring quotes and blog presence is not to tally sound bites, but to harness a “wisdom of crowds” feel for how big a footprint a given scholar had in public debate–whether due to current scholarship, commentary and analysis, their larger body of work, media presence, or whatnot. The specifics ought not be taken too literally. We worked hard to be careful and consistent, but there were inevitable challenges in determining search parameters, dealing with common names or quirky diminutives, and so forth. Bottom line: this is an inaugural attempt intended to stir interest and perhaps urge universities, foundations, and professional associations to consider the merits of doing more to cultivate, encourage, and recognize contributions to the public debate that the academy may overlook or dismiss.

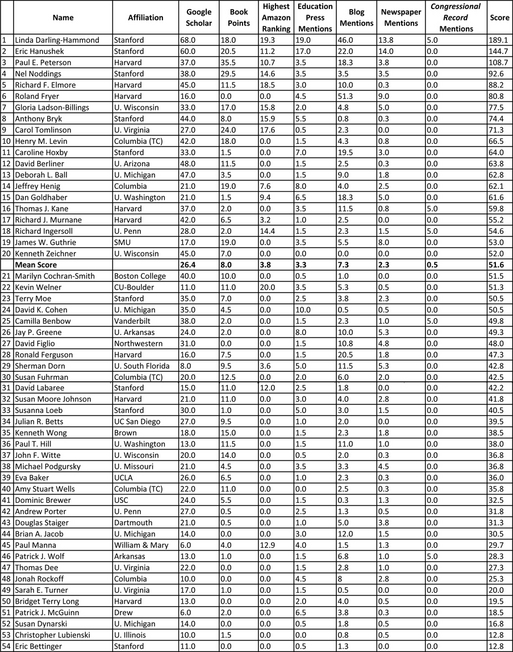

The top scorers made sense. All are familiar edu-names, with long careers featuring influential scholarship, track records of comment on public developments, and outsized public and professional roles. In order, the top five were Linda Darling-Hammond, Rick Hanushek, Paul Peterson, Nel Noddings, and Richard Elmore. Darling-Hammond lapped the field, scoring a ridiculous 189.1 on a scale where a 100 was engineered to reflect chart-topping public presence. Hanushek tallied an almost equally ludicrous 144.7. Peterson was the only other figure to break 100, posting a 108.7. After that, the next highest score was Noddings’ 92.6.

Notable, if not unduly surprising, is that the top five all hang their hats at either Stanford or Harvard. All are veteran, accomplished scholars. This reflects both the nature of the Public Presence scoring (which factors in a scholar’s body of accumulated work) and the fact that the edu-media tended to quote and discuss the work of these thinkers.

After the top finishers, scores fell off quickly. Beyond the top six, no other rated scholar cracked 80, and just three more broke 70. Indeed, on this list of 50+ impressive researchers, a score north of 50 was sufficient to land a place in the top twenty.

How did the scores break out? The highest Google Scholar number (gauging scholarly publication and citation) was a 68, with most scholars scoring in the high teens or twenties. In other categories, scores were normed to broadly fall in the zero to twenty range. This was done for book authorship points, book ranking points, and mentions in blogs, newspapers, and the established edu-press (e.g. Ed Week and The Chronicle of Higher Ed), with an expectation that blog/newspaper/edu-press mentions would each typically max out around 10 to 15 points.
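To make the arithmetic concrete, here is a minimal sketch of how category points might roll up into a single score. It assumes a simple additive rule, which is a guess on my part; the actual rubric is laid out in the earlier post. The category names follow the paragraph above, but the function name `public_presence` and every point value below are hypothetical and describe no one on the list.

```python
# Hypothetical illustration only: the category names come from the post, but
# the simple additive rule and all point values are assumptions, not the
# actual scoring rubric.
CATEGORIES = (
    "google_scholar",
    "book_authorship",
    "book_ranking",
    "blog_mentions",
    "newspaper_mentions",
    "edu_press_mentions",
)


def public_presence(points):
    """Sum a scholar's category points, counting any missing category as 0."""
    unknown = set(points) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    return round(sum(points.get(name, 0.0) for name in CATEGORIES), 1)


# A made-up scholar with mid-range points in each category.
example = {
    "google_scholar": 22.0,
    "book_authorship": 9.5,
    "book_ranking": 6.0,
    "blog_mentions": 7.5,
    "newspaper_mentions": 4.0,
    "edu_press_mentions": 3.0,
}

print(public_presence(example))
```

Under these invented numbers, the Google Scholar figure alone supplies a bit more than 40 percent of the total, which is one way to see why an established body of work weighs so heavily.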

If you eyeball the relevant columns, you’ll see the scores generally conformed to these parameters, with the exception of a few determined outliers. When it came to books, Harvard’s Paul Peterson, Stanford’s Nel Noddings, and U. Virginia’s Carol Tomlinson–three prodigious and widely read authors–blew the lid off the expected scoring range. As for 2010 edu-press quotes, all but two folks scored fewer than ten points. The two exceptions were Stanford quotemeisters Darling-Hammond and Hanushek, both of whom tallied almost twice that. As for blog mentions, just four scholars tallied more than 20 points: Darling-Hammond and Hanushek, once again, along with Harvard scholars Ron Ferguson and Roland Fryer. Fryer, in fact, was something of a blog phenom–getting mentioned more than twice as much in the blogosphere as any other ranked scholar. If his blog presence had been less remarkable, Fryer would probably have ranked 30-something rather than sixth.

For those wondering about patterns, implications, or potential biases, there are any number of questions. Economists rarely pen books, but they do tend to coauthor substantial numbers of articles and rack up impressive citation counts. It’s not clear how all that cuts. Indeed, given that economists like Rick Hanushek, Roland Fryer, Hank Levin, Caroline Hoxby, Dan Goldhaber, Tom Kane, and Dick Murnane all finished in the top twenty, it’s clear that the discipline’s low regard for books wasn’t much of a handicap. That said, I was struck that many young, accomplished economists finished much lower than I’d have expected. What to make of this, and whether this reflects issues in the scoring or the limited public presence of these talented thinkers, is an open question.

Scholars focused on higher education generally posted low scores, and seem to be quoted much less frequently than K-12 scholars–though it’s not clear whether that’s because there’s less interest in and opportunity for public discussion of higher education, or a choice that these scholars are making.

The emphasis accorded to established bodies of work clearly advantages senior scholars at the expense of junior academics. And, given that the ratings are a snapshot of public presence in 2010, the results obviously advantage scholars who recently penned a successful book or big-impact study. But both of these also accurately reflect the way in which bodies of work and successful books can have a disproportionate impact on public discussion–so I’m disinclined to see any problems in such a “bias.”

There’s also the challenge posed by bloggers like Jay Greene and Sherman Dorn, whose own blogging or think tank critiques mean that they are publishing with great frequency. The key to addressing this was encapsulated in the mantra that the aim was not to measure how much a scholar writes, but how much resonance that work has. Flagging blog entries and newspaper mentions in which a scholar is identified by university affiliation here serves a dual purpose: avoiding confusion caused by common names while also ensuring that a scholar’s own blogging or letters to the editor won’t pad their scores. If bloggers or letter-writers are frequently provoking discussion, the figures will reflect that. If a scholar is mentioned sans affiliation, that mention is omitted here; but that’s true across the board. If anything, that seems likely to consistently moderate the scores of well-known scholars for whom university affiliation may seem unnecessary. Of course, if certain scholars are disproportionately less likely to have their affiliation referenced, their blog and newspaper scores will be biased downward.

If readers want to argue the relevance, construction, reliability, or validity of the metrics or the rankings, I’ll be happy as a clam. I’m not at all sure I’ve got the measures right, that categories have been normed in the smartest ways, or even how much these results can or should tell us. That said, I think the same can be said about U.S. News college rankings, NFL quarterback ratings, or international scorecards of human rights or international competitiveness. For all their imperfections, I think these systems convey real information–and do an effective job of sparking discussion (about questions that are variously trivial and substantial). That’s what I’ve sought to do here.

I’d welcome suggestions regarding possible improvements–whether that entails metrics worth adding or subtracting, smarter approaches to norming, or what have you. I’m also curious to hear critiques, concerns, questions, and suggestions from any and all. Take a look, and then have at it. That’s kind of the point, after all.

-Frederick Hess