Imagine this: You pass your high-school classes, graduate, and enroll in college. When you get to campus, you’re told to take placement tests in English and math. The questions range from basic grammar to advanced algebra, drawing on lessons from years ago. You don’t pass, and are suddenly shunted into an expensive academic purgatory: remedial classes. The required courses take time and tuition dollars but don’t count toward graduation.

It’s a widespread problem. Across the United States, more than half of entering freshmen discover they are ineligible for college-level coursework each year, most commonly in math. The readiness gap is widest among students at nonselective two-year colleges and among students who are black, Hispanic, or from low-income families. And it often spells disaster for students looking to earn a degree: among community college students needing remediation, an estimated 30 percent don’t ever enroll in required remedial courses, most don’t pass the courses they do take, and just 1 in 10 graduates within three years.

How can colleges and policymakers better support students assigned to remediation and improve their rates of college completion? One way would be to boost pass rates in remedial math courses, which may be the single largest academic barrier to college success. Colleges across the country are also experimenting with interventions such as mainstreaming, whereby students assessed as needing remediation are placed directly into a college-level course, sometimes with additional academic support. Although prior studies of mainstreaming have produced mixed results, none have used experimental methods to gain a clear picture of its effectiveness.

Here we present new results from a randomized controlled trial of mainstreaming at three community colleges at the City University of New York (CUNY). Some entering students who ordinarily would have been assigned to a remedial elementary-algebra class were placed instead in a college-level statistics course and provided with extra academic support. We find that the students placed directly in college-level statistics did far better than their counterparts in remedial classes, even when students in remedial classes were also given extra support. They were more likely to pass their initial math course and, three semesters after the experiment, had completed more college credits overall. In short, our study suggests that many students consigned to remediation can pass credit-bearing quantitative courses right away.

The CUNY Experiment

CUNY’s challenges with remedial education mirror national patterns: 76 percent of new community-college freshmen were assessed as needing remediation in the fall of 2014, based largely on college-administered placement tests. Many students assigned to remedial courses delay or simply never complete them, and the pass rate among those who do complete is only 38 percent in the highest-level remedial mathematics course. Among such students entering CUNY in 2011, just 7 percent graduated within three years, compared to 28 percent of other students.

In the fall of 2013, we conducted a randomized controlled trial at three CUNY community colleges aimed at assessing the effects of the mainstreaming approach to remediation, in which students are placed directly into college-level courses. Incoming students assessed as needing remediation were randomly assigned to one of three course types: traditional remedial elementary algebra; the same algebra course with an additional two-hour weekly workshop; or a college-level statistics class with an additional two-hour weekly workshop. Only students enrolled in the college-level statistics class would earn college credit if they passed.

We limited our sample to freshmen intending to major in disciplines not requiring college-level algebra, and to students who were assessed as needing mathematics remediation (based primarily on their performance on the college-placement COMPASS exam). Across the three colleges, 2,860 eligible students were identified and invited to sign up at new-student orientation sessions, and 907 agreed to participate. Research personnel randomly assigned these students to one of the three course types and informed students of their assignments. Two weeks after the start of the semester, at the end of the colleges’ course drop period, 717 of these consenting students were enrolled in their assigned sections.

Attrition rates before the end of the drop period differed somewhat across groups: 18 percent in remedial elementary algebra without workshops, 28 percent in algebra with workshops, and 17 percent in statistics. There were no statistically significant differences among the three groups in attrition once the census date had passed. For simplicity, we report results based on the 717 students who enrolled in their assigned sections. However, we obtain the same pattern of findings when we conduct “intent to treat” analyses that examine data on all 907 students, regardless of whether they attended the course to which they were assigned.
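The sample flow reported above can be checked with a few lines of arithmetic. The sketch below uses only the counts and percentages stated in the text; the variable names are illustrative, and the simple average of the three group attrition rates assumes the groups were of roughly equal size, which the text does not state.

```python
# Counts reported in the article.
invited = 2860    # eligible students invited at orientation
consented = 907   # students who agreed to participate
enrolled = 717    # students enrolled in assigned sections after the drop period

# Share of invited students who consented, and overall pre-census attrition.
consent_rate = consented / invited
overall_attrition = (consented - enrolled) / consented

# Group-level attrition percentages reported in the article.
group_attrition_pct = {
    "algebra_no_workshop": 18,
    "algebra_with_workshop": 28,
    "statistics_with_workshop": 17,
}

# Assumption: groups of roughly equal size, so a simple average is a fair proxy.
mean_group_attrition = sum(group_attrition_pct.values()) / len(group_attrition_pct)

print(f"Consent rate: {consent_rate:.1%}")
print(f"Overall attrition: {overall_attrition:.1%}")
print(f"Mean of group attrition rates: {mean_group_attrition:.0f}%")
```

Under that equal-size assumption, the average of the three group rates lines up with the overall attrition implied by the 907 and 717 counts, at about 21 percent.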

The participants in our experiment are broadly representative of all students assessed as needing remedial elementary algebra. We compared our participants with students who did not consent to participate in the experiment but who had been assessed as needing remediation and who enrolled in other sections of remedial elementary algebra during the same semester as the experiment. We found no significant differences, though participants had a larger share of minority students than nonparticipants, with both groups being majority-minority. Of course we cannot rule out the possibility that the two groups differed on other, unmeasured variables, given that participating students consented to be in our experiment, which involved attending a class taught during the day, and the other group of students did not opt to take part.

We also ensured that instructor quality did not vary across treatment groups. Each of the study’s 12 full-time instructors (four at each campus) taught one section of each of the three course types, following a common syllabus for the course (common across each college for statistics, and across CUNY for remedial elementary algebra). Faculty were not given the experiment’s research hypothesis and were never told that the researchers hoped that students in statistics would have at least the same pass rate as those in remedial elementary algebra. They were instructed to teach and grade the research sections as they ordinarily would.

Students in each of the courses were asked to complete a mathematics attitude survey at the semester’s start and end, and a student satisfaction survey at the semester’s end. The mathematics attitude survey consisted of 17 questions covering four domains: perceived mathematical ability and confidence; interest in and enjoyment of mathematics; the belief that mathematics contributes to personal growth; and the belief that mathematics contributes to career success and utility. The student satisfaction survey asked about a student’s activities during the semester, such as whether he or she had participated in a student-initiated study group or gone for tutoring (which was available to all students independent of the experiment), and about the student’s satisfaction with those activities.

We reviewed data on student performance in the algebra and statistics courses. At the end of the semester, students enrolled in remedial elementary algebra, with or without weekly workshops, took the required CUNY-wide elementary algebra final examination and received a final grade based on a CUNY-wide rubric. Students enrolled in the statistics class with workshops were graded by their instructors using the common syllabus for their college. All outcomes other than a passing grade, including any type of withdrawal or a grade of incomplete, were categorized as not passing.

We also gathered data on students’ future course taking and completion. All participants who passed their math classes were exempt from any further remedial mathematics courses and were eligible to enroll in introductory college-level (i.e., credit-bearing) quantitative courses. Statistics students who passed earned college credit, satisfied the quantitative requirement of the CUNY general-education curriculum, and were qualified to enroll in courses for which introductory statistics was the prerequisite. Any participant who did not pass his or her class had to re-enroll in traditional remedial elementary algebra and pass it before taking any college-level quantitative courses.

Higher Pass Rates

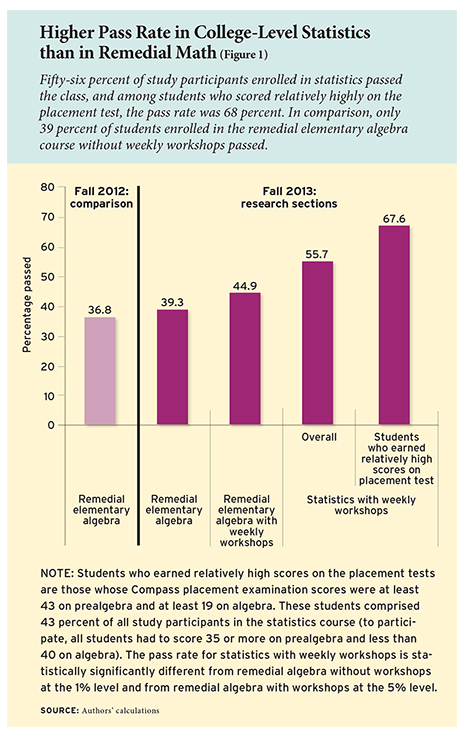

Students who were placed directly in college-level statistics did far better than their counterparts in remedial classes, regardless of their score on the placement test. In fact, the statistics class with weekly workshops was the only course in which a majority of students passed (Figure 1). Fifty-six percent of students in statistics with weekly workshops passed, compared to 45 percent of students in the remedial elementary algebra course with weekly workshops and 39 percent of remedial elementary algebra students who did not have extra workshops. The 17-percentage-point difference between algebra without workshops and statistics was statistically significant, as was the 11-percentage-point difference between algebra with workshops and statistics with workshops.

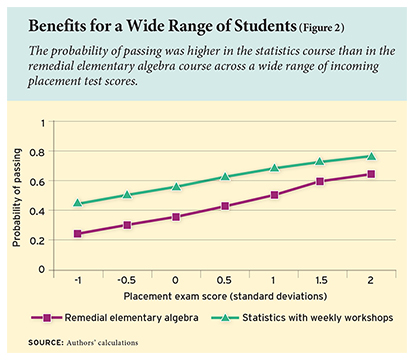

The positive effect of taking statistics (relative to remedial elementary algebra without workshops) was similar across students with a wide range of placement test scores (Figure 2). Most notably, students with the lowest scores on the placement test benefited just as much from taking statistics as students with higher scores. We also found a similar pattern of results after controlling for a variety of student characteristics, including gender, high school GPA, and number of days to consent to participate in the study.

We also compared the overall pass rates for each of the three groups of participants to the historical pass rates for the courses in fall 2012. Pass rates for remedial elementary algebra without workshops were similar during the study period and during the year before: 39 percent of study participants passed, compared to 37 percent of students in fall 2012.

Students assessed as needing remediation who were placed directly into the college-level statistics class passed at a lower rate than nonremedial students the year before: 56 percent of study participants passed, compared to 69 percent of nonremedial students in fall 2012. This is not surprising given their difference in preparation. Participants who had earned relatively high—though still not passing—scores on the placement test passed at a mean rate of 68 percent, similar to students who were not assessed as needing remediation and who took the statistics class the year before. This suggests that colleges can place students into credit-bearing statistics classes even if their placement-test performance is just below the cut score without any diminution in the typical statistics pass rate—at least if those students can draw on the kind of support the weekly workshops provided to students in our experiment.

Lasting Effects

The statistics students’ enhanced academic success lasted well beyond the end of the experiment. One year later, statistics students were slightly more likely to persist in college: 66 percent were still enrolled in fall 2014 versus 62 percent of students in remedial elementary algebra without workshops, though this difference was not statistically significant.

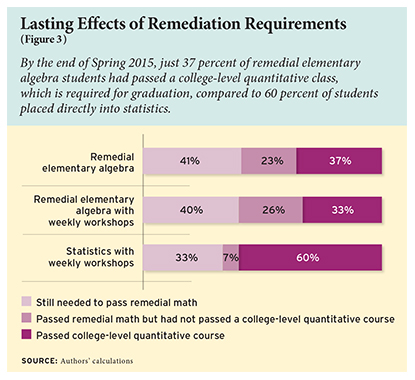

Not surprisingly, students placed directly into statistics were also significantly more likely to have passed a college-level quantitative course. After four semesters in college, 60 percent had, compared to 37 percent of students placed in the remedial elementary algebra class and 33 percent of students who took remedial elementary algebra with workshops (Figure 3). More than one-third of students placed in remedial elementary algebra—with or without workshops—still had not passed that class by the end of their second year of college.

In addition, statistics students were no less likely to have passed classes in other general-education course categories, such as STEM, English, and other disciplines, compared to students in the remedial algebra groups. This suggests that the completion of remedial algebra is not necessary to ensure successful completion of other college courses.

Statistics students also completed more credits during their first two years of college. By the end of the spring 2015 semester, three semesters after the experiment’s end, they had accumulated four to five more college credits than students in the remedial elementary algebra course without workshops. This gap has grown larger after each semester, indicating that it was not just the one statistics course that put students ahead of their peers.

Statistics students expressed more positive attitudes about math, as well. Our survey data should be interpreted with caution given response rates of around 50 percent, but the data suggest that statistics students showed significant increases on the Interest, Growth, and Utility domains. By contrast, students in both remedial algebra courses showed increases only in the Interest domain.

The surveys also revealed that students in different classes behaved somewhat differently. Students reported higher levels of engagement with the college-level statistics class, establishing more self-initiated study groups than students in the remedial algebra classes. In addition, statistics students were significantly more likely to attend their workshops than remedial algebra students: 72 percent versus 65 percent.

These differences in behavior may be course effects, indicating the positive motivational effects of being assigned to a college-level course. It is also possible that, due to the random assignment methods, statistics students may have felt that they won a lottery and therefore were more motivated to succeed. However, any such effect, if it existed, would have had to have continued into the year after the experiment was over, when the credit gap between the statistics and remedial algebra students widened.

Could there be other explanations for the differences observed among our three groups? The 36 sections in our study were taught by 12 different instructors at three different colleges. Could their actions have influenced these different outcomes?

Remedial elementary algebra and introductory statistics are qualitatively different classes, so it is not possible to compare their grading or difficulty directly. However, several pieces of evidence suggest that students’ varying results were due to group assignment, not their instructor. We found no significant relationships between the percentage of students passing each section and instructors’ tenure status, total years of experience, or experience teaching statistics. In addition, all remedial elementary algebra classes in the experiment covered standard topics and used a common final exam and final grade rubric. The introductory statistics courses at each of the three colleges followed a college-wide common syllabus.

Implications

Remedial education is estimated to cost states and students at least $1.3 billion annually. The requirements most affect student groups least likely to graduate from college overall: students of color, students from low-income families, and students at less-selective academic institutions. Although remedial classes are intended to help students prepare for college-level coursework, they often have precisely the opposite effect—trapping students in developmental coursework sequences that do not earn college credit.

Colleges and policymakers should consider whether students need to pass remedial classes in order to progress to credit-bearing courses. While there are several possible strategies to help students who cannot demonstrate college readiness at the outset of their studies, mainstreaming is an efficient approach with the potential to transform the college experience.

Our study indicates that remedial courses may not be needed in many cases. Regardless of their race or ethnicity, students with a wide range of incoming placement test scores did better in statistics with weekly workshops than their counterparts in noncredit remedial elementary algebra, with or without weekly workshops. In addition, those early benefits persisted: the statistics students in our study continued to advance more quickly in college than their counterparts in remedial algebra, even though they did not enjoy the supposed benefits of having passed the remedial class. Views can differ as to which quantitative subjects a college graduate should know, but passing remedial elementary algebra does not seem necessary in order to pass introductory statistics.

Requiring all students to pass remedial classes before they can earn the credits needed for their degrees imposes extra costs on students, colleges, and taxpayers—funds that could be spent on other college courses and programs. That cost, as well as our overall educational goals, should be taken into account when setting higher-education policies. College communities, and our society, must decide whether the investment is worth the results.

A shift in college requirements away from remediation could positively affect the academic progress of hundreds of thousands of college students each year. Although nationwide figures are hard to come by, in part because states count college enrollment, persistence, and remediation rates differently, a single example can make the potential clear.

Let’s focus just on math, just at CUNY community colleges, for just one semester. In fall 2012, 7,675 new students were initially assessed as needing remedial elementary algebra. For those students to complete statistics within two semesters, they would have to pass remedial algebra in the fall, return to college in the spring, and then pass statistics.

The chances of that happening? At best, one in four. The remedial algebra pass rate is 37 percent. The retention rate from fall to spring is 84 percent. The pass rate in statistics for students who have taken remedial algebra is 68 percent. Even if we were to assume that all fall students who pass elementary algebra are retained for the spring, and that all such students take statistics that spring, the probability of completing statistics within two semesters is still only 25 percent. Compare that to students in our study who took statistics right away, 56 percent of whom passed. In our example, fast-tracking students assessed as needing remediation into statistics would result in at least 2,379 additional students passing statistics by the end of their second semester—out of one semester’s incoming class at one university.
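The arithmetic behind this example can be reproduced in a short sketch. All rates and counts come from the paragraph above; the variable names are illustrative, and the step of rounding the best-case probability to 25 percent before computing the difference is an assumption about how the 2,379 figure was derived.

```python
# Figures stated in the article.
n_assessed = 7675                 # fall 2012 students assessed as needing remedial algebra
pass_algebra = 0.37               # remedial algebra pass rate
retained_to_spring = 0.84         # fall-to-spring retention rate
pass_stats_after_algebra = 0.68   # statistics pass rate after remedial algebra
pass_stats_direct_pct = 56        # percent passing when placed directly in statistics

# Best case: every student who passes algebra returns and takes statistics.
best_case = pass_algebra * pass_stats_after_algebra

# More realistic case: also apply the fall-to-spring retention rate.
with_retention = best_case * retained_to_spring

# Assumption: the article rounds the best case to a whole percent (25)
# before taking the difference from the 56 percent direct pass rate.
best_case_pct = round(best_case * 100)
additional_students = n_assessed * (pass_stats_direct_pct - best_case_pct) // 100

print(f"Best-case two-semester completion: {best_case:.1%}")
print(f"With retention applied: {with_retention:.1%}")
print(f"Additional students passing statistics: {additional_students}")
```

Because the best case ignores retention losses, applying the 84 percent retention rate lowers the two-semester completion probability further, which is why the article describes its estimate of additional students as a minimum.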

The benefits of a college degree are considerable and wide-ranging, and go beyond enhanced lifetime earnings. Graduates may also enjoy lower rates of poverty and better health outcomes, be less likely to engage with the criminal justice system, and report higher levels of personal happiness. If closing persistent gaps in degree attainment is a critical value, policies allowing new students to directly enroll in credit-bearing quantitative college classes deserve serious consideration.

Alexandra W. Logue is former university provost and executive vice chancellor of the City University of New York (CUNY) system, and a current research professor at CUNY’s Center for Advanced Study in Education (CASE). Mari Watanabe-Rose is director of undergraduate education initiatives and research at CUNY. Daniel Douglas is a senior researcher at Rutgers University’s School of Management and Labor Relations.

More detailed information about the authors’ research can be found in the September 2016 issue of Educational Evaluation and Policy Analysis.

This article appeared in the Spring 2017 issue of Education Next. Suggested citation format:

Logue, A.W., Watanabe-Rose, M., Douglas, D. (2017). Reforming Remediation: College students mainstreamed into statistics are more likely to succeed. Education Next, 17(2), 78-84.