With graduation rates at an all-time high, three million students will graduate from U.S. public high schools this spring. But federal achievement data indicate that these students likely have no better math or reading skills than their parents did. Commentators often try to explain away this troubling trend as an artifact of changing student populations, flaws in test design, or declining student effort on low-stakes tests. But a new analysis suggests that stagnant high school achievement is a real phenomenon that warrants increased attention.

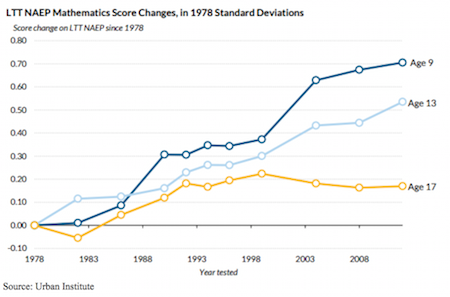

The National Assessment of Educational Progress (NAEP) administers standardized tests to nationally representative samples of U.S. students, including 12th-grade students and 17-year-olds. NAEP data indicate that the scores of high school students have been flat since the early 1990s, despite rising achievement among students in elementary and middle school.

In a new Urban Institute report, we examine four hypotheses for why achievement gains fade out by the end of high school. In short, we find little evidence to support any of them.

First, high school scores might appear to be stagnant because not enough time has passed for the gains from earlier grades to show up in the test scores of students in later grades. However, the NAEP data show that student cohorts made gains relative to previous cohorts when tested at ages 9 and 13, but that those gains disappeared by the time they were 17.

Second, increases in high school persistence and graduation rates may have increased the number of academically marginal students who remain enrolled in school and are therefore included in the testing pool. But we find little evidence that shifting student characteristics explain flat scores, or that periods of increasing high school persistence coincide with periods of divergent scores between older and younger students.

Third, even a casual observer of education might posit that high school students do not take a low-stakes test like NAEP seriously. For this to affect score trends, it would have to be the case that today’s high school students are taking the test less seriously than their predecessors. But we find no evidence that metrics such as how hard students report trying on the test or how many test items they leave blank have changed over time.

Finally, we might worry that NAEP has become less aligned to the subject matter taught in high schools over time. That could be the case, but the pattern of increasing achievement among younger students and flat high school achievement is observed on two different versions of the NAEP as well as on international assessments used in the U.S.

For too long, the academic performance of the nation’s high school students has been overlooked or explained away. Policy efforts often focus on elementary and middle schools: federal annual testing requirements, for example, apply to every grade from 3 through 8 but to only one grade in high school. And the federal government collects much more detailed, regular data on the academic achievement of younger students.

That needs to change. All of the available data provide a wake-up call for researchers and policymakers to renew their commitment to high school students and ensure that the academic gains that elementary and middle schools have produced are not squandered.

—Matthew M. Chingos and Kristin Blagg

Matthew Chingos is a senior fellow at the Urban Institute, where Kristin Blagg is a research associate. The full report on which this piece is based, “Varsity Blues: Are High School Students Being Left Behind?”, was published by the Urban Institute on May 3, 2016.