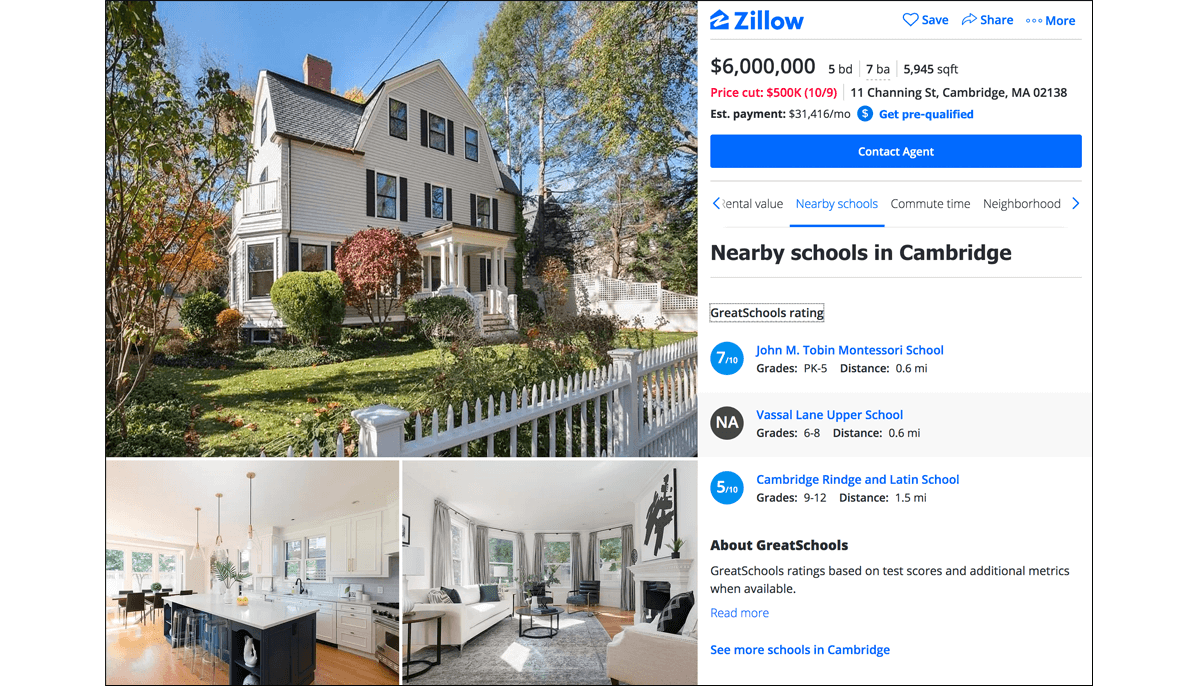

If you are a parent looking for a new home, you probably asked friends and family about the quality of the local schools. Perhaps you visited school information websites like Niche, SchoolDigger, or GreatSchools. Or you may have seen GreatSchools’ ratings on real estate websites like Zillow, Realtor.com, and Redfin. A few intrepid individuals may have attempted to navigate the official school report cards that states and school districts release each year.

And, more than likely, you found yourself on the receiving end of a deluge of advice, rankings, and page after page of difficult-to-decipher data. School quality is an incredibly important issue—especially to families on the move—but it is hard to define and even harder to measure.

Recently, the education news outlet Chalkbeat published an original analysis suggesting that the school ratings found on GreatSchools systematically favor whiter and wealthier schools. GreatSchools’ ratings are derived from a combination of measures: average student test scores, student growth from year to year, graduation rates, Advanced Placement courses, and student performance on college placement tests like the SAT and the ACT. Currently, the most heavily weighted factor in the ratings is students’ average test scores. There is a well-established body of research that documents the relationship between average test scores and the racial and socio-economic composition of the student body: whiter and wealthier schools tend to score higher than their counterparts that serve a larger proportion of disadvantaged students.

This is where the confusion begins to creep in. Low-income students and students of color face far more obstacles in the pursuit of their education than their more advantaged peers. The differences in average test scores may reflect differences in school quality, but they may also reflect the effects of poverty and discrimination on student achievement. To understand school performance—instead of all the other factors that can influence student achievement—families need more than just average test scores. They need information on students’ rates of improvement over time.

Student growth can be surprisingly difficult to measure. It is not enough to compare a school’s results from one year to the next. Changes in average test scores in a school can be the result of changes in the difficulty of the test or changes in the composition of students (e.g., a relatively low-performing class one year and a relatively high-performing class the next). To measure growth effectively, we need to track individual students’ trajectories over time. For example, how do a student’s test scores in 4th grade compare to her test scores in 3rd grade? Did she progress academically at the same rate as her peers? Faster than average? Slower than average? When we calculate these growth rates for all students in a school, we can get a more accurate indicator of how well that school serves its students, regardless of where they started. Importantly, average student growth also bears a much weaker underlying relationship with student demographics, making it easier to disentangle school performance from the confounding influence of racial and socio-economic inequities.
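To make the distinction concrete, here is a minimal sketch in Python. Every number and student ID is invented for illustration, and the function names `naive_change` and `matched_growth` are hypothetical. It also assumes that scores from different grades sit on a common scale, which real tests do not always provide. The growth models that states actually use are considerably more sophisticated than a simple average of gains, so treat this as an illustration of the idea and not as anyone's real method.

```python
from statistics import mean

# Hypothetical scale scores keyed by student ID (all values invented).
grade3_year1 = {"s1": 200, "s2": 210, "s3": 190}  # 3rd graders in year 1
grade4_year2 = {"s1": 212, "s2": 221, "s3": 203}  # the same students in 4th grade, year 2
grade3_year2 = {"s4": 220, "s5": 230, "s6": 225}  # a different class of 3rd graders, year 2


def naive_change(previous, current):
    """Difference between two group averages, ignoring who is in each group."""
    return mean(current.values()) - mean(previous.values())


def matched_growth(previous, current):
    """Average gain among students who appear in both years."""
    gains = [current[s] - previous[s] for s in previous if s in current]
    return mean(gains)


# Comparing this year's 3rd graders to last year's mixes up two different classes.
print(naive_change(grade3_year1, grade3_year2))

# Following the same students from 3rd to 4th grade isolates their progress.
print(matched_growth(grade3_year1, grade4_year2))
```

In this invented example, the year-over-year comparison of 3rd grade averages mostly reflects the arrival of a higher-scoring class, while the matched calculation reflects how much the original students actually gained.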

Even when measured well, growth is not a perfect indicator of school quality. Average growth should not be misconstrued as a straightforward measure of a school’s causal effect on student learning. There are other, non-school factors that also influence growth. Moreover, contemporary growth models only assess student progress in tested subjects like reading and math. Lastly, peer performance—which is better captured with a measure like average test scores—could also be an important element of school quality and one that some families may find relevant when making school and housing decisions.

States and school districts have started to add measures of student growth into their school accountability systems. This shift raises two important questions. First, as growth information becomes more widely available, will families incorporate it into their own decision-making processes? Second, because of average growth’s weaker relationships with race and class, will the distribution of growth information steer families towards less white and less affluent schools?

To help us think through these questions, my colleague and I conducted a simple experiment. We recruited 2,500 US adults to participate in an online survey. We asked them to imagine that they were parents moving to a new city (New York City, Los Angeles, Chicago, Houston, or Phoenix). Their task was to choose among the five largest school districts in each metro area. To guide this decision, we provided them with real data on the demographic characteristics of each district. In addition, participants were randomly assigned to receive some form of academic performance information that we collected from the Stanford Education Data Archive: average test scores, average growth, both, or neither.
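For readers who want to picture the design, the random assignment can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the code used in the study: the condition labels, the fixed seed, the simple independent draw per participant, and the function names `assign_conditions` and `fields_shown` are all hypothetical.

```python
import random

# Hypothetical labels for the four information conditions described above.
CONDITIONS = ["neither", "scores_only", "growth_only", "both"]


def assign_conditions(participant_ids, seed=0):
    """Independently assign each participant to one of the four conditions.

    Assumption: a simple random draw per participant. The actual study
    may have used a different randomization scheme.
    """
    rng = random.Random(seed)
    return {pid: rng.choice(CONDITIONS) for pid in participant_ids}


def fields_shown(condition):
    """List what a participant sees: demographics always, plus any academic data."""
    shown = ["demographics"]
    if condition in ("scores_only", "both"):
        shown.append("average_test_scores")
    if condition in ("growth_only", "both"):
        shown.append("average_growth")
    return shown


assignments = assign_conditions(range(2500))
print(fields_shown(assignments[0]))
```

Because everyone sees the same demographic data and only the academic information varies at random, differences in the districts that each group chooses can be attributed to the information provided.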

We discovered that people did, in fact, notice and use the information about student growth. The participants who received average growth data tended to choose higher growth districts than their peers who received either no academic performance information or only average test scores. Furthermore, because of the different underlying relationships between average growth and student demographics, the participants who received average growth data also tended to choose less white and less affluent districts. Importantly, we discovered that it was not necessary to withhold any information. The participants who received both average test scores and average growth data tended to choose higher growth, less white, and less affluent districts than their peers who only received average test scores.

In short, we found that giving people information about student growth can A) help them identify higher performing school districts and B) steer them towards less racially, ethnically, and economically homogeneous districts. These results are consistent with the conclusion that the folks at Chalkbeat draw: the kinds of data that school information websites choose to emphasize can influence families’ school and housing decisions with real consequences for racial and socio-economic segregation.

States and districts should place more weight on student growth in their summative evaluations of schools, and they should display student growth more prominently in the report cards that they release to the public. The same applies to school rating websites like Niche, SchoolDigger, and GreatSchools, which play a pivotal role in distributing these data to the public. However, it is not entirely clear what the optimal weighting system ought to be. Schools serve many stakeholders, including students, families, employees, and local communities. Different groups may assign different levels of importance to different indicators of school quality. Moreover, not all state data systems are created equal. Some states have considerably more robust and sophisticated models for calculating student growth than others. Some states do not measure growth at all.

The question at the heart of the matter—How should we measure school quality?—does not have one answer. Nevertheless, we can be quite confident that answers to this question that rely exclusively or primarily on average test scores are woefully incomplete. Families need to be aware that there are more and better sources of information about school quality than average test scores. As families begin to demand information about student growth, we can challenge the conventional and flawed wisdom that the best schools and districts are almost always the whitest and wealthiest schools and districts.

David M. Houston is a postdoctoral research fellow at Harvard University’s Program on Education Policy and Governance.