The rapid pace of Covid-19–related school closures forced districts to switch to remote-learning plans under incredible time pressure. This urgent instructional retooling led to wide variation in program quality across a number of factors—including when remote instruction actually began. While many districts responded quickly and began providing instruction almost immediately after school buildings were shuttered, others didn’t provide remote learning until weeks after closures began.

Timing was just one piece of the remote-learning puzzle districts had to solve, however. The Covid-19 Educational Response Longitudinal Survey (C-ERLS), which I lead, has attempted to gauge the full spectrum of school districts’ efforts, including technological supports and instructional platforms, through six waves of nationally representative data collected over 12 weeks. After a period of swift change through March and April, remote-learning offerings stabilized in May, making it possible to compare districts clearly. Though the sample is small, it has enough statistical power to detect substantial differences, many of which give cause for concern.

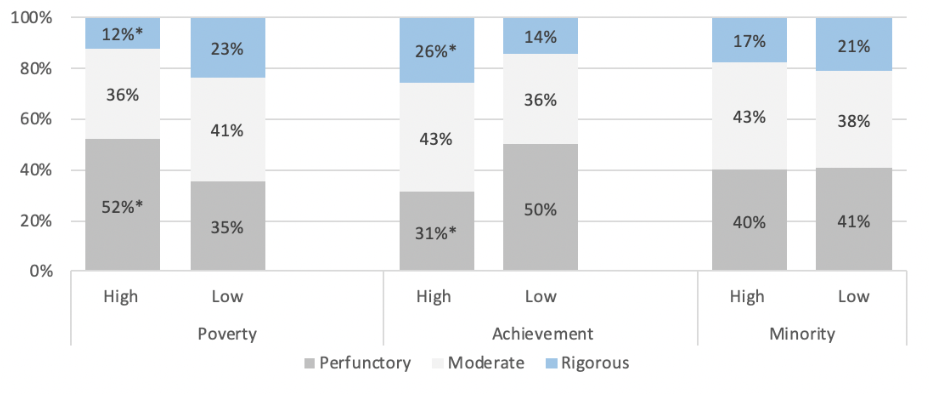

Only about one in five schools is in a district whose remote-learning program meets the standard we defined as “rigorous.” Compared with schools in wealthier, higher-achieving districts, a larger share of schools in historically higher-poverty and lower-achieving districts have less rigorous remote-learning programs. These district-level differences in remote-instruction offerings throughout the pandemic may exacerbate existing achievement gaps.

Data and Methods

C-ERLS was designed in the early days of pandemic closures to be rapid, reliable, representative, and repetitive. The first round of data, which assessed districts’ early responses in the immediate wake of building closures, was collected on March 27. Anticipating many changes as the school year progressed, American Enterprise Institute researchers collected data in waves from late March to the end of May. The data used in this article come from the sixth wave, collected May 27 to 29, 2020. Although some districts had already ended their school year by then, these data reflect each district’s most recent offerings as of the last collection wave during which its schools were still in session.

Information was gathered from school-district websites rather than from district personnel, because websites are centralized communication hubs and guarantee a high response rate. Schools may have offered more than district websites reflected, but districts’ directives to schools are the best indicators available at this early stage. It is also possible that individual schools or classes did not follow the directives posted on district websites. These data therefore capture district intent, which may not translate into actual instructional practice.

The data come from a nationally representative sample of 250 regular school districts. I drew the sample so that larger districts (those with more schools) had a proportionately higher chance of being included. Though the data were collected at the district level, each district’s offerings were applied to all schools within it, and results are reported as percentages of schools, because schools are the level at which services are delivered. More information on the sample and the six waves of C-ERLS, along with a more detailed forthcoming report, is available on the C-ERLS webpage.
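The step from district-level data to percentages of schools can be illustrated with a short sketch. It assumes a standard design-weighted (Hájek) ratio estimator, in which each sampled district stands in for `n_schools / inclusion_prob` schools; the `District` type, its field names, and this weighting scheme are hypothetical illustrations, not the C-ERLS code.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class District:
    """Hypothetical record for one sampled district."""
    n_schools: int         # schools in the district
    inclusion_prob: float  # chance the district was drawn into the sample
    category: str          # e.g. "rigorous", "moderate", or "perfunctory"


def share_of_schools(sample: list[District], category: str) -> float:
    """Estimate the share of all schools that sit in districts of `category`.

    Assumption: a Hajek ratio estimator. Each sampled district represents
    n_schools / inclusion_prob schools, and its offering is credited to every
    one of those schools, mirroring how district offerings are applied to
    all schools within the district.
    """
    represented_total = 0.0
    represented_in_category = 0.0
    for d in sample:
        weight = d.n_schools / d.inclusion_prob
        represented_total += weight
        if d.category == category:
            represented_in_category += weight
    if represented_total == 0:
        raise ValueError("sample is empty")
    return represented_in_category / represented_total
```

If every district's inclusion probability is exactly proportional to its number of schools, every weight is the same and the estimate reduces to the plain share of sampled districts in the category. When inclusion probabilities are not strictly proportional to size, the weights differ and change the result.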

I used additional data sources to compare schools in districts with higher and lower percentages of minority students and of students eligible for free and reduced-price meals. Measures of minority-student composition and of poverty (as indicated by students’ eligibility for free and reduced-price meals) came from the National Center for Education Statistics’ Common Core of Data. I defined high-minority districts as those where more than 60 percent of students are non-white, and high-poverty districts as those where more than 60 percent of students are eligible for free and reduced-price meals. To divide districts by student achievement, I used data from the Educational Opportunity Project at the Stanford Education Data Archive, which offers comparable measures of student math and reading achievement based on state tests from school years 2009 to 2016. Though somewhat dated, these scores are comparable across states and adequate for categorizing districts by their historical achievement.
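Because both definitions use a strict “more than 60 percent” cutoff, a district at exactly 60 percent falls in the lower group. A minimal sketch of the rule, with hypothetical count fields standing in for the Common Core of Data measures (these are not actual CCD variable names):

```python
from dataclasses import dataclass

HIGH_SHARE_CUTOFF = 0.60  # "more than 60 percent," per the definitions above


@dataclass
class Enrollment:
    """Hypothetical district enrollment counts."""
    total: int
    nonwhite: int
    meal_eligible: int  # eligible for free and reduced-price meals


def is_high_minority(e: Enrollment) -> bool:
    # Strictly greater than: exactly 60 percent is not "high"
    return e.nonwhite / e.total > HIGH_SHARE_CUTOFF


def is_high_poverty(e: Enrollment) -> bool:
    # Same strict cutoff, applied to meal eligibility
    return e.meal_eligible / e.total > HIGH_SHARE_CUTOFF
```

The two flags are computed independently, so a district can be high-poverty without being high-minority, and vice versa; that independence is what allows separate comparisons by poverty and by minority composition.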

Were Efforts Rigorous, Moderate, or Perfunctory?

Remote-learning efforts differ across districts, as detailed below, but no single measure captures the entire package of remote instruction. A combination of data points, however, can provide a more holistic assessment of potential instructional quality. We placed each district in one of three categories based on how closely its instructional offerings might approximate the classroom instruction students receive when school buildings are open.

Rigorous instructional offerings occurred in districts that relied on online platforms to allow individual teachers to direct students’ remote learning; provided at least some synchronous instruction; expected all students to participate, either through explicit statements or by formally taking attendance; required teachers to grade students’ remote work; and expected teachers to have some form of direct contact with students.

Perfunctory instructional offerings occurred in districts that relied on instructional packets or explicitly stated on their websites that student participation was not required, that attendance would not be taken, or that student work would not be graded. Districts whose websites communicated no information on remote-instructional offerings were also placed in this category.

Moderate instructional offerings occurred in districts that were less ambitious than rigorous counterparts but more ambitious than perfunctory ones.
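The three definitions can be read as a decision rule. The sketch below encodes them under stated assumptions: the field names are hypothetical codings of what a district website says, “relied on instructional packets” is taken to mean packets were the primary platform, and perfunctory criteria are checked first, since the text does not say how conflicting signals were resolved.

```python
from dataclasses import dataclass


@dataclass
class WebsiteCoding:
    """Hypothetical coding of one district website."""
    posted_info: bool                  # any remote-instruction information at all
    primary_platform: str = "none"     # "online", "packets", "both", or "none"
    offers_synchronous: bool = False
    expects_all_to_participate: bool = False  # explicit statement or attendance taken
    requires_grading: bool = False
    expects_direct_contact: bool = False
    says_participation_optional: bool = False
    says_no_attendance: bool = False
    says_no_grading: bool = False


def classify(c: WebsiteCoding) -> str:
    # Perfunctory: no information, reliance on packets, or an explicit
    # statement lowering expectations for participation, attendance, or grading.
    if (
        not c.posted_info
        or c.primary_platform == "packets"
        or c.says_participation_optional
        or c.says_no_attendance
        or c.says_no_grading
    ):
        return "perfunctory"
    # Rigorous: every criterion in the definition must hold.
    if (
        c.primary_platform == "online"
        and c.offers_synchronous
        and c.expects_all_to_participate
        and c.requires_grading
        and c.expects_direct_contact
    ):
        return "rigorous"
    # Moderate: everything in between.
    return "moderate"
```

Under this reading, “moderate” is a residual category: a district lands there by failing at least one rigorous criterion without triggering any perfunctory one.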

Overall, 40 percent of schools were in districts with perfunctory programs, and 40 percent were in districts with moderate programs. One in five schools, or 20 percent, were in districts whose websites described rigorous programs of remote instruction.

Figure 1. Categories of Remote Instruction by District Characteristics

Note: * indicates difference is statistically significant at the 95-percent confidence level.

Figure 1 shows stark differences in remote-instruction offerings by levels of poverty and academic achievement. Half of schools in higher-poverty and lower-achieving districts had perfunctory instructional programs, compared with about a third of schools in wealthier, higher-achieving districts. The share of schools with rigorous remote-instruction programs in high-poverty and low-achieving districts was about half the share in low-poverty and high-achieving districts.

In contrast, there was little difference by district minority-student composition in the percentages of schools offering rigorous, moderate, or perfunctory programs. This contrast, which persists through most of the other instructional offerings reviewed in this article, suggests that districts’ pandemic responses may have followed dynamics different from those often found in education research, where minority and poverty influences are often interrelated.

Instructional Platforms for Remote Learning

Remote instructional platforms refer to the methods districts used to deliver content to students. C-ERLS captured three platforms: instructional packets, which provide students with static material to work on over a week or more; asynchronous platforms, like Google Classroom, which allow teachers to provide and collect materials online throughout the school day or week; and synchronous platforms, like Zoom or Google Hangouts, which allow entire classes or groups of students to participate in live video instruction at the same time. Figure 2 displays the percentages of schools that offered, and relied on, these platforms during remote instruction.

Figure 2. Remote-Learning Platforms Offered and Relied On by District Characteristics

| | Poverty: High | Poverty: Low | Sig. | Achievement: High | Achievement: Low | Sig. | Minority: High | Minority: Low | Sig. |
|---|---|---|---|---|---|---|---|---|---|
| Platforms offered | | | | | | | | | |
| Instructional packets | 92% | 79% | * | 79% | 88% | † | 91% | 80% | * |
| Asynchronous | 77% | 89% | * | 90% | 81% | † | 91% | 83% | † |
| Synchronous | 33% | 49% | * | 52% | 36% | * | 39% | 46% | |
| Platforms primarily relied on | | | | | | | | | |
| Instructional packets | 29% | 17% | * | 16% | 26% | † | 15% | 23% | † |
| Both packets and platforms | 21% | 17% | | 14% | 23% | † | 23% | 16% | |
| Online platforms | 50% | 66% | * | 70% | 51% | * | 63% | 60% | |

Note: * indicates difference is statistically significant at the 95-percent confidence level; † indicates difference is statistically significant at the 90-percent confidence level.
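The * and † markers follow conventional two-sided thresholds (|z| ≥ 1.96 for 95 percent, |z| ≥ 1.645 for 90 percent). The article does not say which test produced them, so the sketch below assumes a simple pooled two-proportion z-test with hypothetical sample sizes. Because C-ERLS reports school-weighted percentages from a sample of districts, a faithful test would need design-based standard errors, which this illustration ignores.

```python
from math import sqrt
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.960, two-sided 95 percent
Z_90 = NormalDist().inv_cdf(0.950)  # about 1.645, two-sided 90 percent


def two_proportion_z(p1: float, n1: int, p2: float, n2: int) -> float:
    """Pooled two-proportion z statistic for shares p1 and p2 (0 to 1)."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        return 0.0  # both shares are 0 or both are 1: no difference to test
    return (p1 - p2) / se


def marker(z: float) -> str:
    """Map a z statistic to the table's notation."""
    if abs(z) >= Z_95:
        return "*"
    if abs(z) >= Z_90:
        return "†"
    return ""
```

For example, with 100 schools in each group, shares of 50 and 30 percent give z ≈ 2.89 (marked *), while 50 and 38 percent give z ≈ 1.71 (marked †). These group sizes are illustrative only, not C-ERLS counts.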

Instructional packets can be completed with little interaction between teachers and students and, on average, likely offer lower-quality instruction than online platforms. Packets were offered in a larger share of schools in high-poverty or low-achieving districts than in low-poverty or high-achieving ones. A lower percentage of schools in high-poverty districts offered online platforms of any kind, whether synchronous or asynchronous. High-minority districts offered both packets and asynchronous platforms in more schools than low-minority districts did. Many districts offered more than one option, most often an asynchronous platform as the primary option, with paper packets as a backup for students with insufficient access to technology.

To go beyond what districts offered and capture which platform they relied on primarily for remote instruction, the C-ERLS team categorized districts by whether their instructional programs relied primarily on online platforms, primarily on instructional packets, or equally on both.

The only difference in platform reliance between high- and low-minority districts was for instructional packets, which schools in low-minority districts relied on more often. The pattern of differences by poverty and achievement was more pronounced. Compared with schools in lower-poverty and higher-achieving districts, more schools in high-poverty and low-achieving districts offered and relied on instructional packets. Both asynchronous and synchronous instruction were more common in more-advantaged and higher-achieving districts.

Certainly, instructional packets have their place as an emergency remote-instructional platform during a pandemic and may resemble the homework students receive in normal times. On average, though, packets alone are likely much further from typical instruction than online platforms are. These differences persisted and were still evident eight weeks after most school closures began, well past the initial chaos. That districts with more-disadvantaged students offered the least-ambitious platforms after so much time had elapsed suggests that more students in those districts received lower-quality remote instruction than students in other districts.

Technology Assistance and Expectations

With the shift to online instruction, access to the internet and to devices became a prerequisite for learning in many districts. Most schools were in districts that offered technology assistance to students. Assistance with internet access was similar across districts by poverty level, but device loans were more common in low-poverty districts.

A smaller share of schools in high-poverty and low-achieving districts had explicit expectations for one-on-one contact between teachers and students. Schools in high-poverty districts were also less likely to grade remote work (63 versus 69 percent), a gap driven by the smaller share of schools grading work for performance (25 versus 34 percent).

Figure 3. Technology Assistance and Expectations by District Characteristics

| | Poverty: High | Poverty: Low | Sig. | Achievement: High | Achievement: Low | Sig. | Minority: High | Minority: Low | Sig. |
|---|---|---|---|---|---|---|---|---|---|
| Technology assistance | | | | | | | | | |
| Internet | 68% | 70% | | 72% | 67% | | 87% | 62% | * |
| Devices | 57% | 70% | † | 70% | 61% | | 72% | 63% | |
| Expectations | | | | | | | | | |
| One-on-one contact | 64% | 79% | * | 79% | 70% | † | 77% | 73% | |
| Participation | 56% | 66% | | 66% | 59% | | 63% | 63% | |
| Attendance | 21% | 35% | * | 36% | 25% | † | 24% | 34% | |
| Grading work | | | | | | | | | |
| Any grading | 63% | 69% | | 68% | 66% | | 72% | 65% | |
| For performance | 25% | 34% | | 31% | 32% | | 32% | 31% | |
| For completion | 37% | 34% | | 37% | 34% | | 40% | 33% | |

Note: * indicates difference is statistically significant at the 95-percent confidence level; † indicates difference is statistically significant at the 90-percent confidence level.
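The claim that the poverty gap in grading is driven by performance-based grading can be checked against the Figure 3 numbers. Assuming, as the table suggests, that “for performance” and “for completion” are non-overlapping parts of “any grading” (25 + 37 = 62 and 34 + 34 = 68, within rounding of 63 and 69), the gap between high- and low-poverty districts splits as follows, in percentage points:

$$
\underbrace{63 - 69}_{\text{any grading}} \;\approx\; \underbrace{(25 - 34)}_{\text{for performance}} \;+\; \underbrace{(37 - 34)}_{\text{for completion}} \;=\; -9 + 3 \;=\; -6
$$

Performance grading is 9 points less common in high-poverty districts, partly offset by completion grading that is 3 points more common.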

Technology assistance was available in more schools in districts with higher percentages of minority students. In particular, these districts offered more assistance with internet access (87 percent of schools in high-minority districts versus 62 percent in low-minority districts). There were no measurable differences between high- and low-minority districts in expectations for one-on-one contact, student participation, attendance, or grading policies.

Conclusion

The Covid-19 pandemic is arguably the largest disruption America’s K–12 education system has ever faced. For students, this disruption, and the differences in districts’ responses to it, will harm academic achievement and likely exacerbate longstanding achievement gaps.

School and district leaders will have their hands full next school year, not only negotiating the continued uncertainty of coronavirus, but also assessing the progress students have made, or not made, since mid-March. They will soon have to implement sustainable instructional programs to make up lost ground.

Schools will also have to take practical measures to reopen, and some may be forced to start the year with remote instruction. Adding insult to injury, districts face this continued challenge amid significant projected revenue shortfalls and budget tightening.

C-ERLS data darken an already bleak picture. The educational recovery from the coronavirus pandemic, it appears, will be a long one. Should schools and districts not rise to the task ahead, students will be the ones to bear the heaviest load, especially those in low-achieving, higher-poverty districts.

Nat Malkus is resident scholar and deputy director of education policy studies at the American Enterprise Institute.

Read more from Education Next on coronavirus and Covid-19.