Education standards do not flop spectacularly. Their failure gives rise to nothing like the black-and-white films of early aeronautical experiments: no missiles exploding on launch pads or planes tumbling from the sky. But 10 years after 46 of the 50 states adopted the Common Core standards, the lack of evidence that they have improved student achievement is nonetheless remarkable. Despite the fact that Common Core enjoyed the bipartisan support of policy elites and commanded vast financial resources from both public and private sources, it simply did not accomplish what its supporters had intended. The standards wasted both time and money and diverted those resources away from more promising pursuits.

Three studies have now sought to examine the effects of Common Core and, more generally, “college- and career-ready” standards on student learning. The picture that emerges does not inspire confidence. The most recent study, conducted in 2019 by the federally funded Center on Standards, Alignment, Instruction, and Learning, or C-SAIL, found that college- and career-ready standards had negative effects on student performance on the National Assessment of Educational Progress, or NAEP, in both 4th-grade reading and 8th-grade math. A series of analyses that I conducted over several years revealed mixed effects from Common Core in states defined as “strong implementers” of the standards. And a 2017 study showed that adoption of Common Core standards did prompt many states to raise their performance benchmarks—that is, the minimum score at which students are judged as attaining “proficiency” on state tests. These higher proficiency bars, however, have not translated into higher student achievement. It is time to accept that Common Core didn’t fulfill its promise.

C-SAIL Study

C-SAIL’s 2019 study examined states’ average NAEP scores in 2010, the year in which most states adopted the standards, and in 2017. Researchers theorized that, among the states adopting Common Core, those that had weak standards before 2010 stood to realize the greatest gains, while those that had more rigorous standards in place before Common Core would experience the least change because they already had high expectations for students. Based on the Fordham Institute’s 2010 evaluations of state English language arts and math standards, the C-SAIL research team created a Prior Rigor Index, assigning states with weak standards to the “treatment” group and states with strong standards to the comparison group. (States scoring in the middle of Fordham’s rating scale were excluded from the analysis, to provide a sharper contrast, as were states adopting standards in any year other than 2010.)
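
The grouping rule described above can be sketched in a few lines of Python. This is only an illustration of the logic, not C-SAIL's actual procedure: the numeric rigor scale, the two cutoffs, the field names, and the placeholder states are all assumptions introduced here, since the article does not report how the index was scored.

```python
from typing import Optional

def assign_group(rigor_score: float, low_cutoff: float, high_cutoff: float) -> Optional[str]:
    """Weak prior standards -> 'treatment'; strong -> 'comparison'; middle -> None (excluded)."""
    if rigor_score <= low_cutoff:
        return "treatment"
    if rigor_score >= high_cutoff:
        return "comparison"
    return None

def build_groups(states, low_cutoff, high_cutoff):
    """Keep only 2010 adopters, then sort them into the two analysis groups."""
    groups = {"treatment": [], "comparison": []}
    for state in states:
        if state["adoption_year"] != 2010:
            continue  # states adopting in any other year are dropped
        group = assign_group(state["rigor_score"], low_cutoff, high_cutoff)
        if group is not None:
            groups[group].append(state["name"])
    return groups

# Hypothetical placeholder records; scores and cutoffs are invented for illustration.
example_states = [
    {"name": "State A", "rigor_score": 2.0, "adoption_year": 2010},
    {"name": "State B", "rigor_score": 9.0, "adoption_year": 2010},
    {"name": "State C", "rigor_score": 5.5, "adoption_year": 2010},  # middle of the scale
    {"name": "State D", "rigor_score": 1.5, "adoption_year": 2011},  # adopted outside 2010
]

groups = build_groups(example_states, low_cutoff=4.0, high_cutoff=7.0)
print(groups)
```

Dropping the middle of the scale shrinks the sample but widens the gap between the two groups, which is the "sharper contrast" the researchers were after.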

In this analysis, researchers detected statistically significant negative effects in both 4th-grade reading and 8th-grade math.

The C-SAIL team conducted a second analysis using what they dubbed a Prior Similarity Index. A 2012 study by researchers at Michigan State University had determined that some states’ 2009 math standards were similar to Common Core in terms of focus and coherence, while other states’ standards were inferior on those qualities. The states with the “less similar” standards made up the treatment group, since researchers assumed that Common Core imposed a substantial change in those places. States with prior math standards that were similar to Common Core’s were assigned to the comparison group.

This second analysis uncovered no statistically significant effects.

All of the estimated effects from both analyses are negative, with losses ranging from about 1.5 to 4 NAEP scale score points. The effects are also small, especially considering that they represent a policy unfolding over seven years. Consider these results in the context of the history of NAEP scores for the nation as a whole. Losses on NAEP are rare, but relatively large gains are common. NAEP advances of four or more points have been registered during short periods: 4th-grade reading (6 points, 2000–02), 8th-grade reading (4 points, 1994–98), 4th-grade math (9 points, 2000–03), and 8th-grade math (5 points, 2000–03).
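
To make the comparison of magnitudes concrete, the figures in the preceding paragraph can be converted to points per year. The sketch below uses only the numbers quoted above. It assumes, purely for illustration, that each change was spread evenly over its period and that the Common Core estimates cover the seven years from 2010 to 2017.

```python
# (subject, points gained, start year, end year), as quoted in the text
national_gains = [
    ("4th-grade reading", 6, 2000, 2002),
    ("8th-grade reading", 4, 1994, 1998),
    ("4th-grade math", 9, 2000, 2003),
    ("8th-grade math", 5, 2000, 2003),
]

def points_per_year(points, start_year, end_year):
    """Average change per year, assuming the change was spread evenly over the period."""
    return points / (end_year - start_year)

gain_rates = {
    subject: points_per_year(points, start, end)
    for subject, points, start, end in national_gains
}

# Estimated Common Core losses of about 1.5 to 4 points, taken over 2010 to 2017
loss_rates = (points_per_year(1.5, 2010, 2017), points_per_year(4, 2010, 2017))

for subject, rate in gain_rates.items():
    print(f"{subject}: {rate:.2f} points per year")
print(f"estimated losses: {loss_rates[0]:.2f} to {loss_rates[1]:.2f} points per year")
```

On these assumptions, even the largest estimated loss works out to well under one point per year, below the slowest of the four national gains listed.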

Common Core supporters were understandably disappointed by these findings, but a particularly disheartening discovery was that the losses did not abate, and in fact, were still accumulating in 2015–17. It became harder for these advocates to urge patience and argue that Common Core’s positive impact would eventually emerge: the negative effects of Common Core were larger in 2017 than in any previous year.

Impact on State Proficiency Standards

While the evidence indicates that Common Core failed to improve academic achievement, the standards did prompt states to raise their benchmarks for student learning. In 2017, Jaekyung Lee and Yin Wu of the University at Buffalo-SUNY investigated the effects of Common Core on state proficiency standards for reading and math (that is, the minimum scores set for students to be identified as “proficient” on state tests) and student achievement. They found that Common Core states raised the proficiency bar more than non-adopting states during this period. Raising this standard makes it more difficult for students to score as proficient and thereby raises expectations. Echoing previous research, though, the researchers found that raising or lowering the proficiency bar was not associated with gains in student achievement on NAEP from 2009 to 2015. The authors caution: “Although it is premature to make any verdict on the impact of the CCSS [Common Core] on student achievement, the findings of this study as well as previous studies raise concerns about implementation challenges and limitations of the current CCSS-based education policies.”

Brown Center Report Studies

In 2014–16, I conducted a series of correlational analyses of Common Core, published by the Brookings Institution’s Brown Center Report on American Education. In 2018, I released a follow-up study. The goal of these studies was to examine whether Common Core was more effective in states that took implementation of the standards seriously.

My method was to compare test results in the states that rejected Common Core (non-adopters) with those in states that were “strong implementers” of the standards. I conducted two sets of comparisons with different criteria for identifying states as strong implementers.

The first group of strong implementers comprised states that in 2011 reported spending federal stimulus funds on three activities to support standards implementation: professional development, new instructional materials, and joining a testing consortium.

For the second set of comparisons, I designated as “strong implementers” the states with ambitious timelines for fully implementing Common Core “in classrooms.” These 11 states planned on full implementation by the end of the 2012–13 academic year. These criteria were designed to be dynamic. The composition of the groups changed over time with changes in state policy toward Common Core. After 2013, states that formally rescinded the standards were re-categorized as non-adopters for the NAEP period in which the policy change occurred. Non-adopters grew to 10 states in 2017 from 5 states in 2013, and strong implementers declined to 8 states in 2017 from 11 states in 2013.

For this essay, I developed a third strategy for identifying strong implementers, based on whether in 2017 a state used either of the two assessments that were specifically developed to align with the Common Core standards: the Partnership for Assessment of Readiness for College and Careers test or the Smarter Balanced test. (I counted the three states that used some items from these tests in a hybrid state assessment—Louisiana, Massachusetts, and Michigan—as among those using a “Common Core” test.)

The premise of this strategy is that states using a prominent Common Core–aligned test in 2017 were publicly indicating a strong commitment to the standards. This model has the advantage of producing larger comparison groups than the other two—with 23 states using a Common Core test and 27 not.

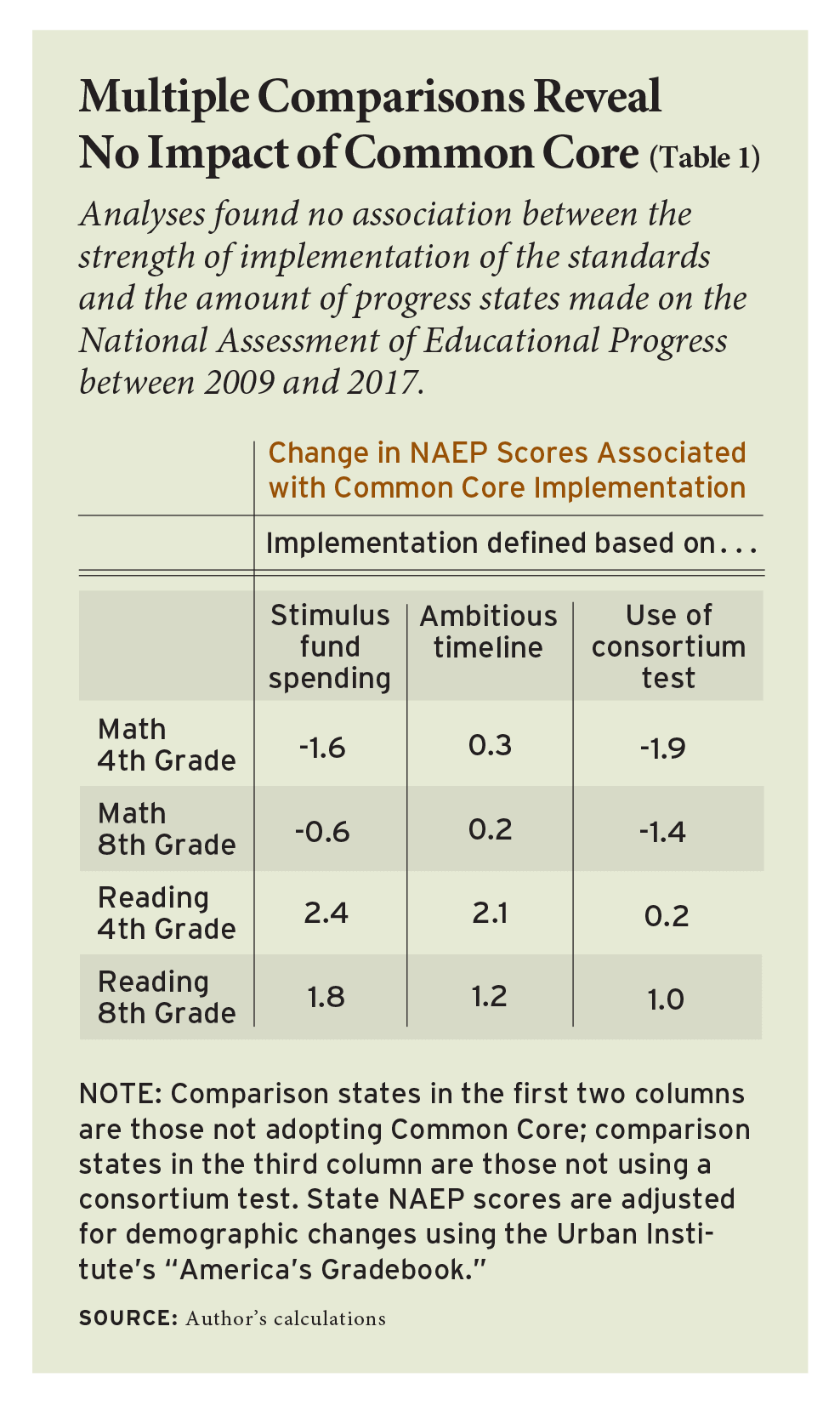

Results of the comparisons are mixed (see Table 1). Some of the changes in NAEP performance associated with Common Core are positive and some are negative. These effects are also quite small—plus or minus about 2 NAEP scale score points. The results are more favorable toward Common Core than those of the C-SAIL study, especially in reading: the improvement in 4th-grade reading ranged from 0.2 scale score points to 2.4 points—but these findings agree with C-SAIL’s conclusion that only minimal changes in NAEP scores are associated with states embracing or rejecting Common Core.
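
For readers who want to see the arithmetic behind a comparison of this kind, the sketch below computes the average score change in each group and takes the difference. It is a simplified illustration, not the code used for the Brown Center analyses: the function names, the placeholder states, the years, and every score are invented, and a gap computed this way is descriptive rather than causal.

```python
from statistics import mean

def mean_change(scores_by_state, start_year, end_year):
    """Average change in a group's state scores between two assessment years."""
    return mean(scores[end_year] - scores[start_year] for scores in scores_by_state.values())

def gain_difference(strong_implementers, non_adopters, start_year, end_year):
    """Strong implementers' average change minus non-adopters' average change."""
    return (mean_change(strong_implementers, start_year, end_year)
            - mean_change(non_adopters, start_year, end_year))

# Made-up scores for hypothetical states; these are NOT real NAEP results.
strong_implementers = {
    "State A": {2009: 220.0, 2017: 222.0},
    "State B": {2009: 218.0, 2017: 219.0},
}
non_adopters = {
    "State C": {2009: 221.0, 2017: 221.5},
    "State D": {2009: 219.0, 2017: 219.5},
}

diff = gain_difference(strong_implementers, non_adopters, 2009, 2017)
print(f"difference in average change: {diff:+.1f} scale score points")
```

A positive value would favor the strong implementers and a negative value the non-adopters. With groups as small as those described above, a gap of a point or two can easily reflect which states happen to fall in each group.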

Time to Cut Bait?

A decade after the release of the Common Core standards, the accumulated evidence reveals no meaningfully positive result. A limitation of this research is the difficulty of pinpointing precisely when Common Core should be considered fully implemented and of evaluating the fidelity of that implementation. Self-selection could also be a problem if unknown factors influenced states in adopting or rejecting Common Core and those factors subsequently influenced state NAEP scores. Yet the research to date on Common Core reinforces a larger body of evidence suggesting that academic-content standards bear scant relevance to student learning. In a recent blog post, Robert Slavin of Johns Hopkins University observes that “plentiful evidence from rigorous studies” indicates that adopting one set of standards over another “makes little difference in student achievement.” Slavin notes that of the dozens of favorable reviews of curricula posted by EdReports.org, a curriculum-evaluation organization that was founded to support Common Core implementation, only two programs with high ratings have any empirical evidence of effectiveness. Alignment with Common Core, not evidence of boosting student learning, is the first screen in the EdReports review process.

A curriculum-review process that gives greater weight to adherence to standards than to impact on learning is not identifying high-quality curricula; it is identifying conforming curricula. An example rich with irony can be found in the textbook series Math in Focus, which is based on the math standards of Singapore. Students in that nation consistently score near the top of international math assessments, and the authors of Common Core touted it as one of the countries whose standards they consulted in developing Common Core. In the early days of implementation, Common Core supporters pointed to Singapore math as ideal for implementing their vision of high-quality mathematics instruction. Math in Focus produced impressive learning gains in three rigorous studies of effectiveness that involved about 3,000 children.

But Math in Focus failed the EdReports review. How can that be? The textbook series moves students more quickly through elementary math than Common Core dictates. A common refrain in the EdReports reviews is that topics from later grades are introduced, taking the program out of alignment with the standards. A program with rigorous evidence of effectively teaching math is vetoed while programs with no evidence of boosting learning are endorsed because they are compatible with Common Core.

In short, the evidence suggests student achievement is, at best, about where it would have been if Common Core had never been adopted, if the billions of dollars spent on implementation had never been spent, if the countless hours of professional development inducing teachers to retool their lessons had never been imposed. When will time be up on the Common Core experiment? How many more years must pass, how much more should Americans spend, and how many more effective curricula must be pushed aside before leaders conclude that Common Core has failed?

This piece is part of a forum, “A Decade On, Has Common Core Failed?” For alternate takes, please see “Common Standards Aren’t Enough” by Morgan S. Polikoff, and “Stay the Course on National Standards” by Michael J. Petrilli.

This article appeared in the Spring 2020 issue of Education Next. Suggested citation format:

Polikoff, M.S., Petrilli, M.J., and Loveless, T. (2020). A Decade On, Has Common Core Failed? Assessing the impact of national standards. Education Next, 20(2), 72-81.