Way back in the late 1960s, when federal officials and eminent psychologists were first designing the National Assessment of Educational Progress, they probably never contemplated testing students younger than nine. After all, the technology for mass testing at the time—bubble sheets and No. 2 pencils—only worked if students could read the instructions and the questions, hold a pencil, and fill in their answers. Yes, there have been early-childhood assessments available for decades, instruments like the Peabody Picture Vocabulary Test, but they require teachers to sit down one-on-one with students while students sound out words, identify numbers, and demonstrate other skills in person. Using those sorts of tests for a nationally representative examination system would have been logistically complex, prohibitively expensive, and politically untenable.

Furthermore, in those days, most young children were not enrolled in school. Public education didn’t start until 1st grade in most places. It wasn’t until the 1970s, when most U.S. states offered grants to help school districts start kindergarten programs, that attending school at age five became commonplace (see “What Happened When Kindergarten Went Universal?”, research, Spring 2010). And while preschools existed, only 28 percent of four-year-olds attended them, and their focus was almost entirely on exploration, socialization, and play. With school not starting in earnest until age six or so, testing at age nine made sense.

But how times have changed. Forty-one states now require districts to offer kindergarten, and half of them mandate that students attend. Nationwide, 85 percent of five-year-olds are enrolled in pre-K or kindergarten, with 77 percent in full-day programs. Furthermore, the vast majority of America’s four-year-olds are in some kind of formal preschool program—68 percent, at last count. While there is still vigorous debate about what children should be doing in preschool, there is also a broadly shared expectation that students spend at least part of their time learning pre-literacy and pre-numeracy skills, so they can hit the ground running in kindergarten.

The other big change, of course, is technology. The choice is no longer between cheap bubble tests and expensive, one-to-one batteries. Modern assessments, given over Chromebooks, iPads, and other devices, can accurately assess student skills and understanding even before students can decode words on a page. Questions can be read aloud and speech-recognition software can record students’ verbal responses, which artificial intelligence can comprehend. Animated graphics and engaging videos can make the whole experience feel more like a game than a test.

This is why commercial test providers Curriculum Associates and NWEA have done what the NAEP designers may not have considered: created standardized tests for students as young as five. Banish from your head images of kindergarteners filling in bubble sheets. Instead, imagine kids playing an interactive game, much as they would on an educational app or website, during short testing sessions with plenty of “brain breaks.” The i-Ready and MAP Growth fall kindergarten assessments may look like games, but they also work to gather data that thousands of school districts use to identify student needs, spot trends, and target instruction.

Now that almost all NAEP exams are given on devices, too, there’s little reason to think that officials couldn’t design and offer a kindergarten exam as well.

Assessing Early Elementary

The rationale for testing academic skills in the early elementary grades is powerful. Consider the federal law behind NAEP, which defines its purpose as “to provide, in a timely manner, a fair and accurate measurement of student academic achievement and reporting of trends in such achievement in reading, mathematics, and other subject matter.” In its current form, NAEP leaves an enormous gap in our knowledge about what’s going on in schools. Most kids in this country are starting their education at age three or four, yet we don’t test them until age nine or until the 4th grade (using either the Long-Term Trend Assessment administered every four years or the newer “main NAEP” tests given every other year). That’s a long time for policymakers and researchers to be left in the dark about what’s going on and what students may or may not be learning.

Grades K–3 are arguably the most critical years of a child’s education, given what we know about the importance of early-childhood development and early elementary-school experiences. This is when children are building the foundational skills they’ll need in the years ahead. One report found that kids who don’t read on grade level by 3rd grade are four times more likely to drop out of high school later on. Why do we wait until after the most important instructional and developmental years to find out how students are faring?

Gaining access to valid and reliable kindergarten data would give us a much better chance at solving some of the mysteries that have stumped the field in recent years, especially about the trends we’ve seen in past decades. Those trends show big gains on NAEP for most students from the late 1990s until about 2010 or so, followed by a plateau and then a more recent, pre-pandemic decline in the achievement of the lowest-scoring students. Since we only have scores from the 4th, 8th, and 12th grades, most of the conversation naturally focuses on what might be happening in schools that accounts for the trends. But if we had kindergarten data, we might learn that it’s what’s happening before kids ever get to elementary school that is responsible for what we are seeing. What if kindergarten readiness has changed over time, and that’s what’s causing the ups and downs in pupil achievement? We might also gain valuable information about the foundational instruction that young students are receiving in kindergarten from the teacher surveys that go along with the tests. These surveys can tell us, for example, what young students are learning, which instructional materials schools are using, and whether teaching methods are well aligned with the science of reading.

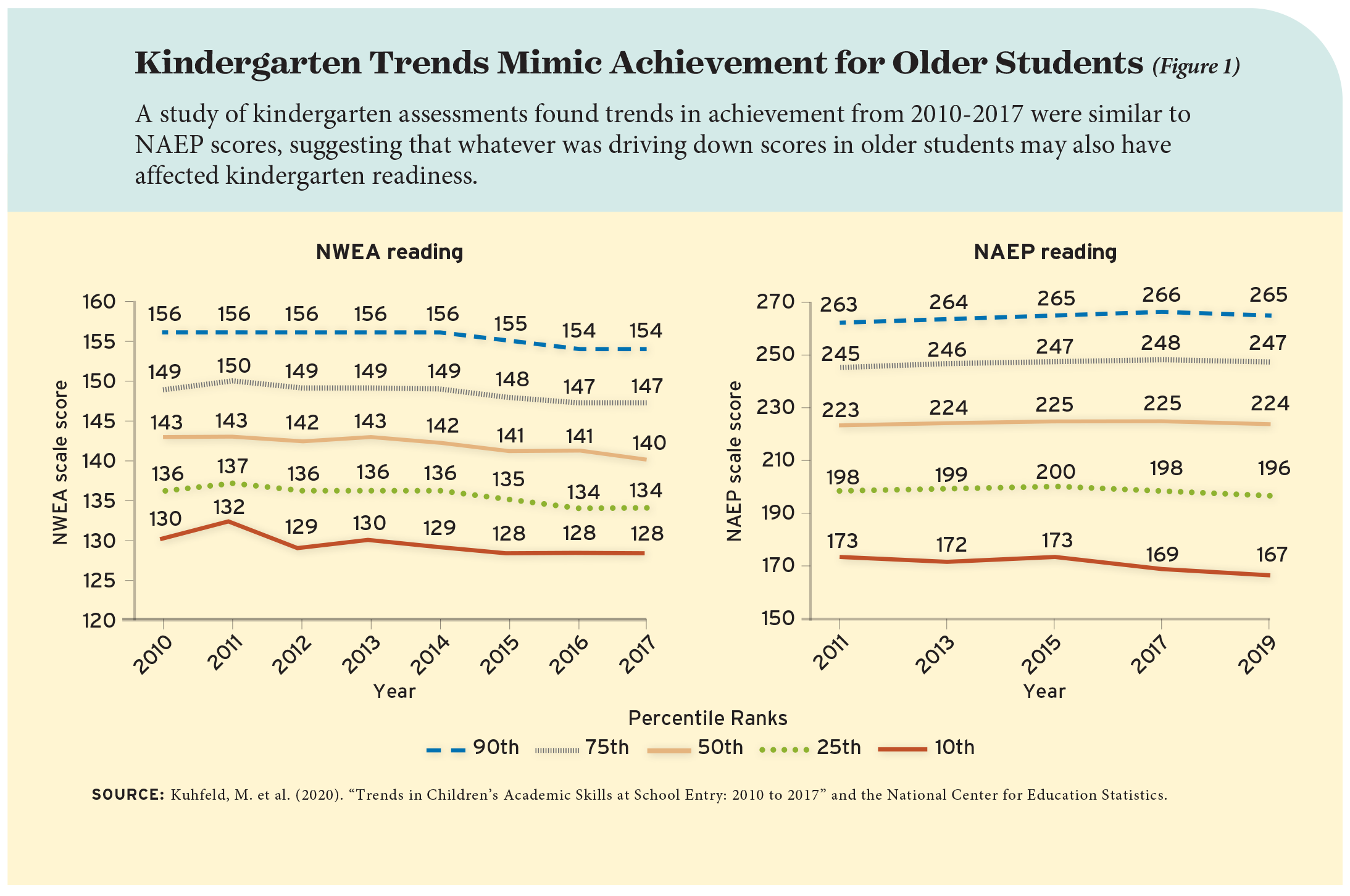

Even the test scores alone would be a huge contribution to our understanding. To get a glimpse of what we might see, consider a recent study by a quartet of scholars (see Figure 1). Using NWEA’s fall kindergarten MAP Growth assessment results from thousands of schools, weighted to represent the national population, they found a similar pattern to the one we see in NAEP data for older children: from 2010 to 2014, scores were mostly flat, followed by a significant decline through 2017 (when their time series ended).

This is intriguing but also frustrating, since we don’t have data from before 2010 (NWEA wasn’t testing enough kindergartners back then) or for more recent years. But it indicates that whatever was driving down scores for older students, starting in the mid-2010s, might also have been reducing kindergarten readiness. It also suggests how much more we could learn if the current NAEP administration included kindergarten data. Testing students in kindergarten could also give the public and policymakers a better understanding of how much students are learning in grades K–4, by establishing a baseline against which growth can be measured.

A Worthy Challenge

To be sure, the wizards who oversee the NAEP would have to figure out a number of technical and design challenges. For example, should the test focus on kindergarten readiness, and therefore be given in the fall? Or should officials focus on a spring assessment, to align with tests for the 4th, 8th, and 12th grades? How will they make sure that all test takers have access to similar devices and connectivity, so the testing conditions are the same from school to school? What if some kindergarteners are more familiar with technology than others? And when it comes to literacy, should the assessment focus solely on fluency (the skill of sounding out words and making sense of them), or should it also focus on comprehension, even if students aren’t actually reading yet themselves? How will NAEP’s sampling work given that kindergarten, while ubiquitous, is not universal?

Even with modern technologies, kindergarten assessments aren’t quite as valid and reliable as those for older students. At least that’s the case for i-Ready Assessment and MAP Growth, partly because their vertical scales start at grade K and there’s always more statistical noise at the bottom and the top of such scales. Officials would need to figure out how to make a kindergarten NAEP as trustworthy as its other assessments.

And then there are the financial and political headwinds. As it stands, NAEP doesn’t have enough money to implement all of the assessments officials would like to give. So if we were to add kindergarten testing, Congress would either have to provide more money, or tests in other subject areas or grade levels would need to be cut.

None of these challenges should be insurmountable. If NAEP were being designed today from scratch, it’s hard to imagine that kindergarten assessments would not be included in the package. We’ve been operating in the dark around early childhood long enough. It’s time to turn on the lights.

Michael J. Petrilli is president of the Thomas B. Fordham Institute, research fellow at Stanford University’s Hoover Institution, co-editor of How to Educate an American, and executive editor of Education Next.

This article appeared in the Spring 2022 issue of Education Next. Suggested citation format:

Petrilli, M.J. (2022). The Case for Kindergarten Tests: Starting NAEP in 4th grade is much too late. Education Next, 22(2), 76-79.